Insights

Articles organized around the themes that define the platform: AI in real application development, architecture trade-offs, spec-driven development, and system behavior.

Topic Clusters

These clusters group the archive into deeper themes so related articles reinforce each other instead of sitting in one flat list.

AI & Data

Applied AI, machine learning, data analysis, and the practical limits of intelligent systems.

34 articles

Development

.NET development, API integration, implementation patterns, and the trade-offs that show up in working code.

29 articles

DevSpark

Spec-driven development, AI-assisted delivery workflows, governance, and the DevSpark toolkit.

26 articles

Case Studies

Project writeups, Spark ecosystem applications, content workflows, and lessons from shipped systems.

32 articles

Delivery

Project Mechanics, leadership judgment, delivery accountability, and team operating models.

12 articles

All Articles

Showing 133 articles

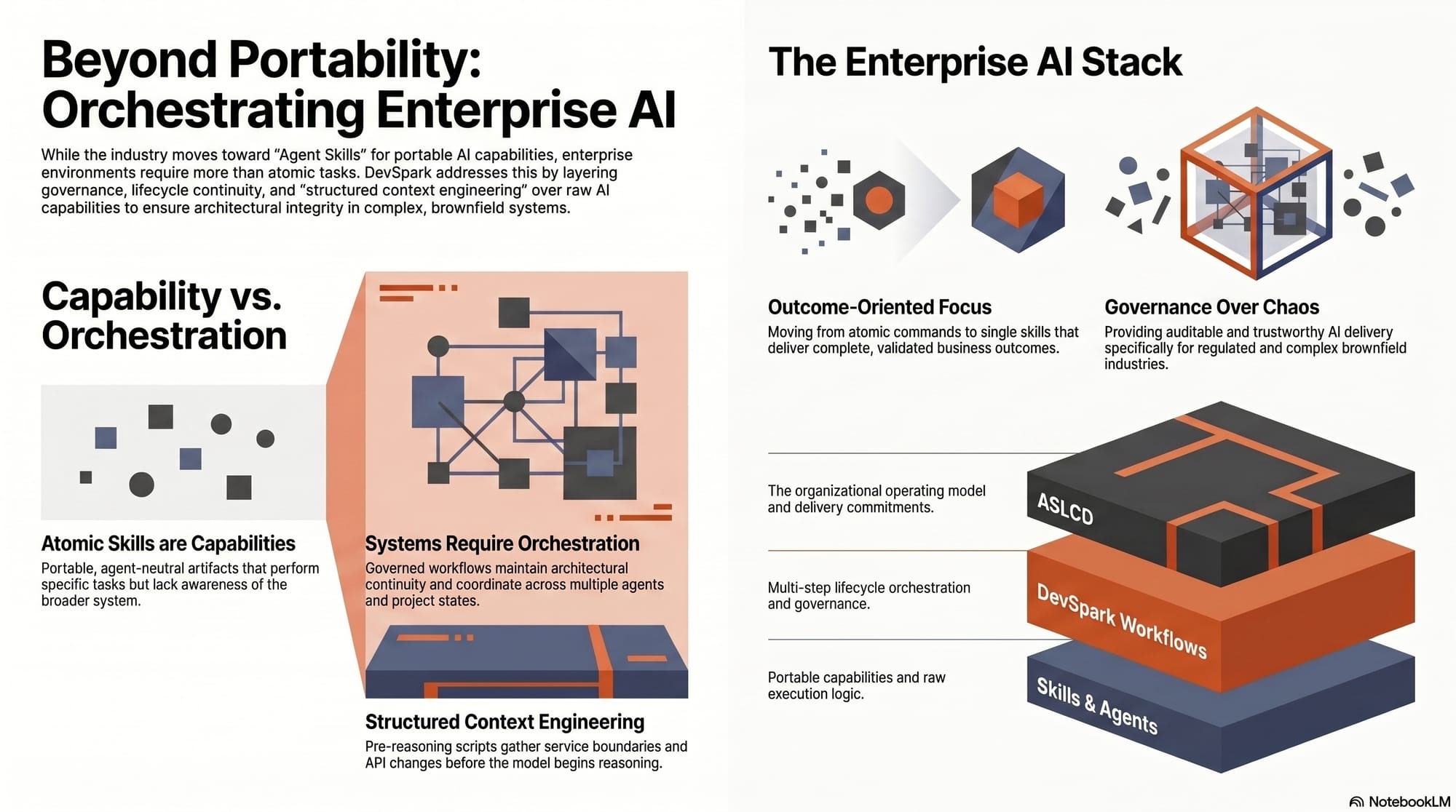

DevSpark and Agent Skills: Beyond Portable AI Capabilities

The Agent Skills specification is a welcome step toward portable, reusable AI capabilities. But enterprise software delivery needs more than portable skills — it needs orchestration, governance, and lifecycle continuity. This article traces where DevSpark sits in that emerging landscape and why the distinction matters.

Stop Digging Through Logs. Start Designing for Learning.

Analytics and development teams operate on the same data with different goals, different time horizons, and different definitions of what matters. This is about that structural gap — and the analytic contract that makes both teams' assumptions visible before something breaks.

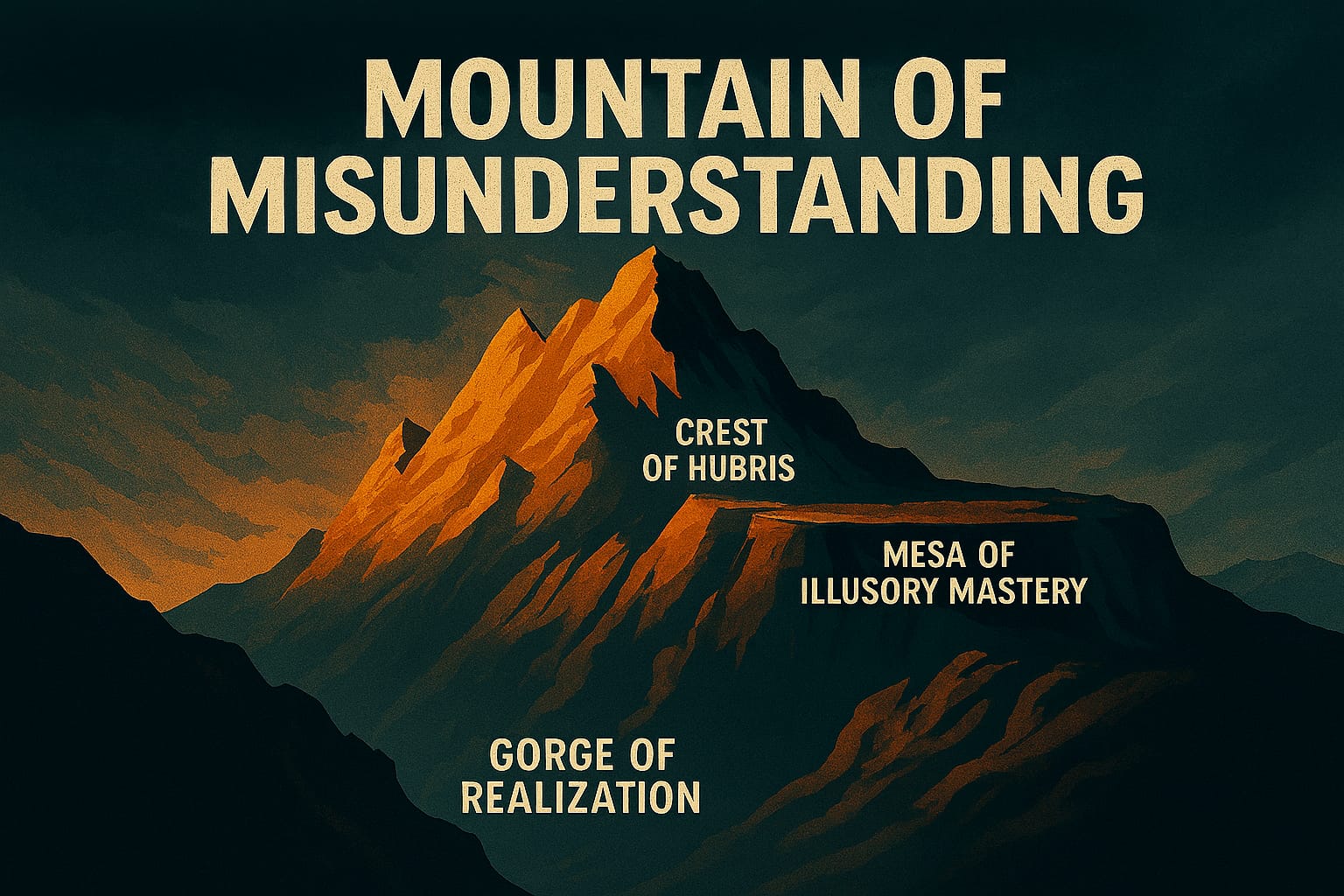

Mountains of Misunderstanding: The AI Confidence Trap

The Mountains of Misunderstanding map the gap between what we think we know and what we actually know — a gap that AI widens by packaging fluency as expertise. A year after writing this article, spec-driven development became my structural answer to staying off the mesa.

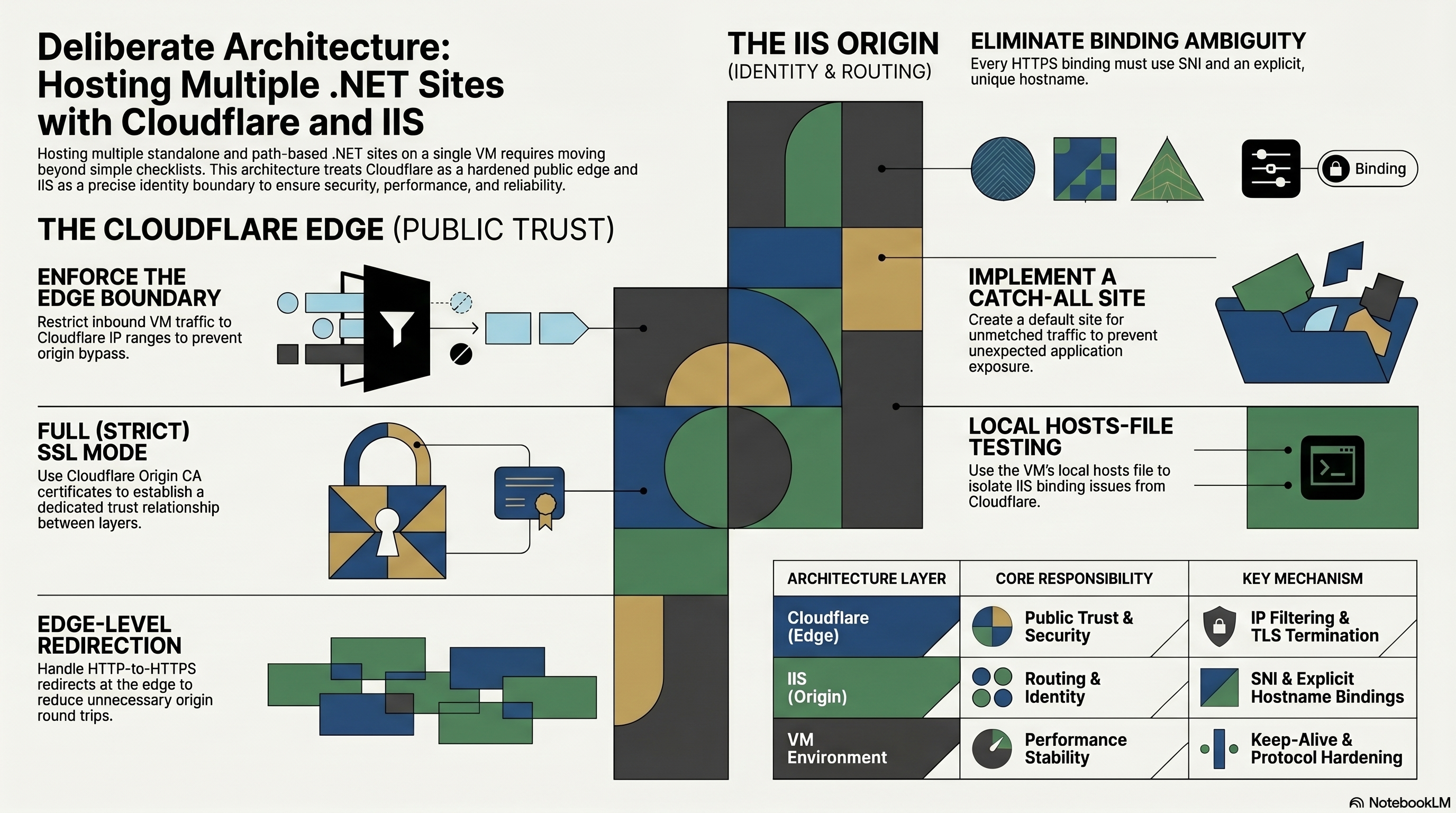

Cloudflare and IIS: Hosting My .NET Sites on One VM

Getting one .NET site online behind Cloudflare is manageable. Hosting several low-traffic demonstration sites on one Windows VM to keep cost and maintenance low forced me to think less about hosting checklists and more about blast radius, boundaries, and what I would do if one of them ever outgrew this setup.

DevSpark Blogging Workflow: How I Built Better Articles

Writing the Cloudflare and IIS article made me realize I needed the same kind of governed workflow for content that I expect from code. This is how I layered write-article, critique, editorial, and SEO prompts on top of DevSpark so a rough idea could become a stronger, more publishable article through deliberate iteration.

Closing the Loop: Automating Feedback with Suggest-Improvement

How the suggest-improvement workflow alias captures developer friction in context and closes the loop between daily use and framework evolution.

Designing the DevSpark CLI UX: Commands vs Prompts

How DevSpark's CLI evolved from slash commands to a full subcommand tree — and what those design choices reveal about structure vs. flexibility in AI tooling.

The Alias Layer: Masking Complexity in Agent Invocations

How DevSpark's shim architecture and workflow aliases reduce cognitive overhead — from agent-specific boilerplate to a single semantic command.

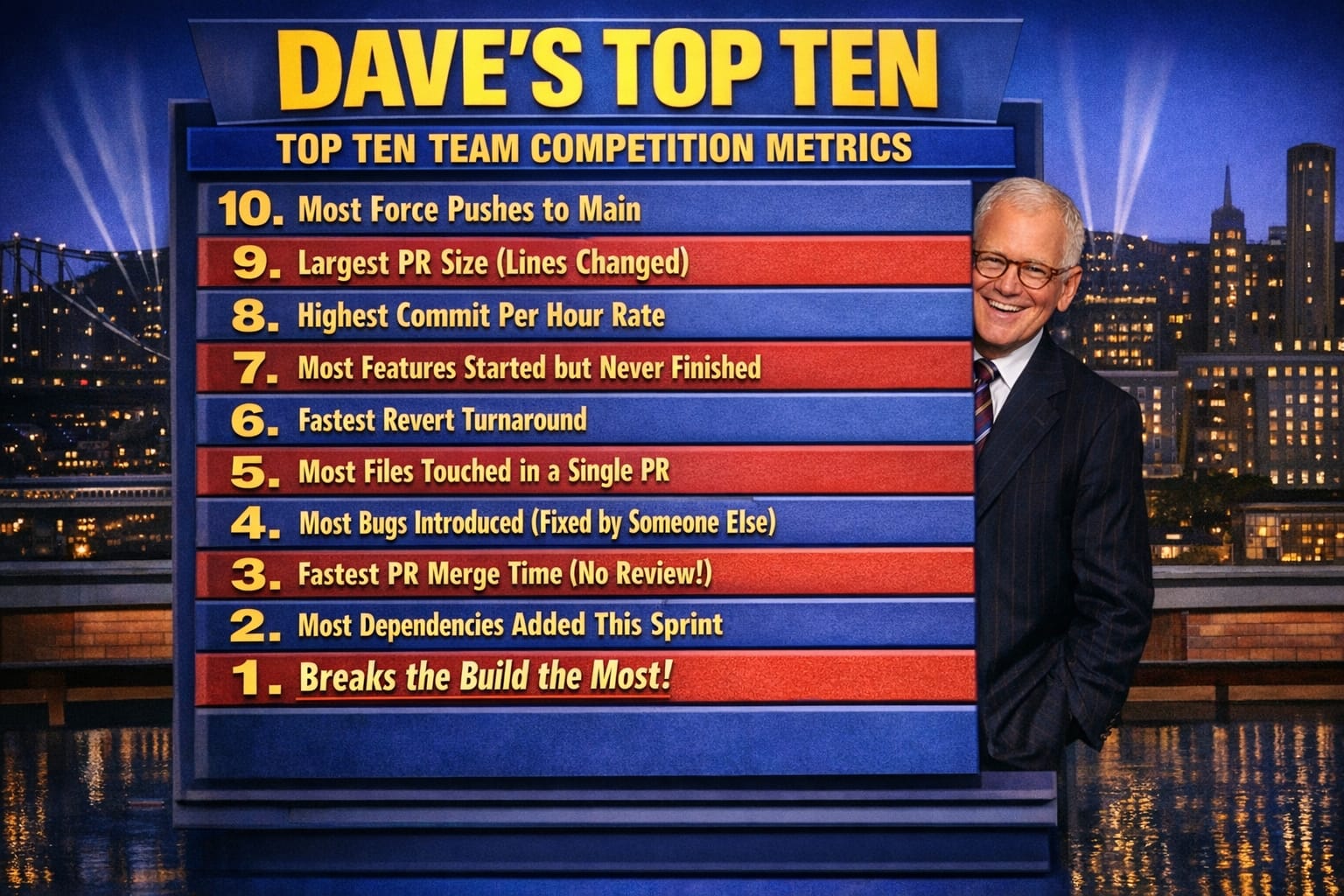

Dave's Top Ten: Git Stats You Should Never Track

Born from a Friday afternoon joke, this Letterman-style top ten list of terrible git competition metrics evolves into a serious look at what git-spark and github-stats-spark actually measure — and why honesty beats authority.

Dogfooding DevSpark: Building the Plane While Flying It

A first-person look at what it's actually like to dogfood DevSpark — using a prompt tool to refine a prompt tool — anchored in an old EDS Super Bowl commercial about building a plane mid-flight.

Workflows as First-Class Artifacts: Defining Operations for AI

How DevSpark's Harness Runtime turns ad-hoc AI interactions into version-controlled, validated, reproducible workflow specs — and what changed.

Observability in AI Workflows: Exposing the Black Box

How DevSpark run artifacts, JSONL event logs, and telemetry make AI workflow debugging tractable — turning non-deterministic failures into diagnosable events.

Autonomy Guardrails: Bounding Agent Action Safely

How DevSpark's act/plan execution modes and per-step tool scoping let me expand agent autonomy incrementally — starting with review, earning toward execution.

Bring Your Own AI: DevSpark Unlocks Multi-Agent Collaboration

DevSpark's latest release rebuilds the framework's core to be completely AI-agnostic. The new Centralized Agent Registry — a single agents-registry.json file — strips every hardcoded 'if Copilot do this, if Claude do that' decision out of the framework scripts and replaces it with dynamic configuration. Adding support for tomorrow's newest AI tool is now a one-line registry entry. More practically: the same Markdown spec that one developer refines with Copilot in VS Code can be picked up and implemented by a colleague using Claude Code in the terminal, then reviewed by a tech lead in Cursor — without the framework skipping a beat.

The DevSpark Tiered Prompt Model: Resolving Context at Scale

How DevSpark's cascading prompt hierarchy — framework defaults, project overrides, user personalization — injects the right context without repetition.

A Governed Contribution Model for DevSpark Prompts

How DevSpark's tiered ownership model lets improvements flow from individual discovery to shared framework — without bottlenecks, without chaos.

Prompt Metadata: Enforcing the DevSpark Constitution

How frontmatter-driven contracts and spec lifecycle enforcement keep the DevSpark constitution non-negotiable — from initial specification through PR review.

DevSpark Monorepo Support: Governing Multiple Apps in One Repository

Monorepos give teams atomic commits and unified history, but they introduce governance problems: mixed review rules, scope ambiguity, and AI agents that can't tell one app from another. DevSpark's multi-app support solves this with an explicit application registry, layered governance that can't weaken repo-wide rules, and dependency-aware scope analysis — all backward-compatible and opt-in.

DevSpark v0.1.0: Agent-Agnostic, Multi-User, and Built for Teams

DevSpark v0.1.0 introduces two reinforcing design pillars — agent-agnostic architecture and multi-user personalization — that solve a tension every team with AI coding agents faces: how to share standards without forcing uniformity. Canonical prompts live in one place, thin shims adapt them per platform, and /devspark.personalize lets each developer tailor commands without affecting anyone else. The result is a model where teams commit personalized prompts to git, making individual workflow choices visible, reviewable, and shareable.

DevSpark in Practice: A NuGet Package Case Study

The DevSpark series describes the methodology. This article shows it. Four consecutive feature specifications on WebSpark.HttpClientUtility — a production .NET NuGet package — covering a documentation site, compiler warning cleanup, a package split, and a new batch execution feature. Each spec illuminated something different about what spec-driven development costs, what it saves, and what it preserves.

DevSpark: From Fork to Framework — What the Commits Reveal

Writing about building something and actually building it are two different activities. This article uses the DevSpark commit history as primary source material — tracking what got built, when, and why from the first fork through the many iterations that produced DevSpark v0.1.0. The result is a practitioner's record of how an idea becomes a tool through persistence, iteration, and a willingness to throw things out and start again.

DevSpark: Months Later, Lessons Learned

After months of using DevSpark across real projects, the theory met reality. This article is a practitioner's check-up — what survived contact with production, what surprised me, and the lessons I didn't expect about AI confidence, adversarial review, and the economics of doing it right.

RESTRunner: Building a DIY API Load Testing Tool

A technical retrospective on RESTRunner — built when three strict criteria demanded it. Covers the concurrency and telemetry decisions that shaped it, the mistakes embedded in its history, and a framework for knowing when your team should build its own tools.

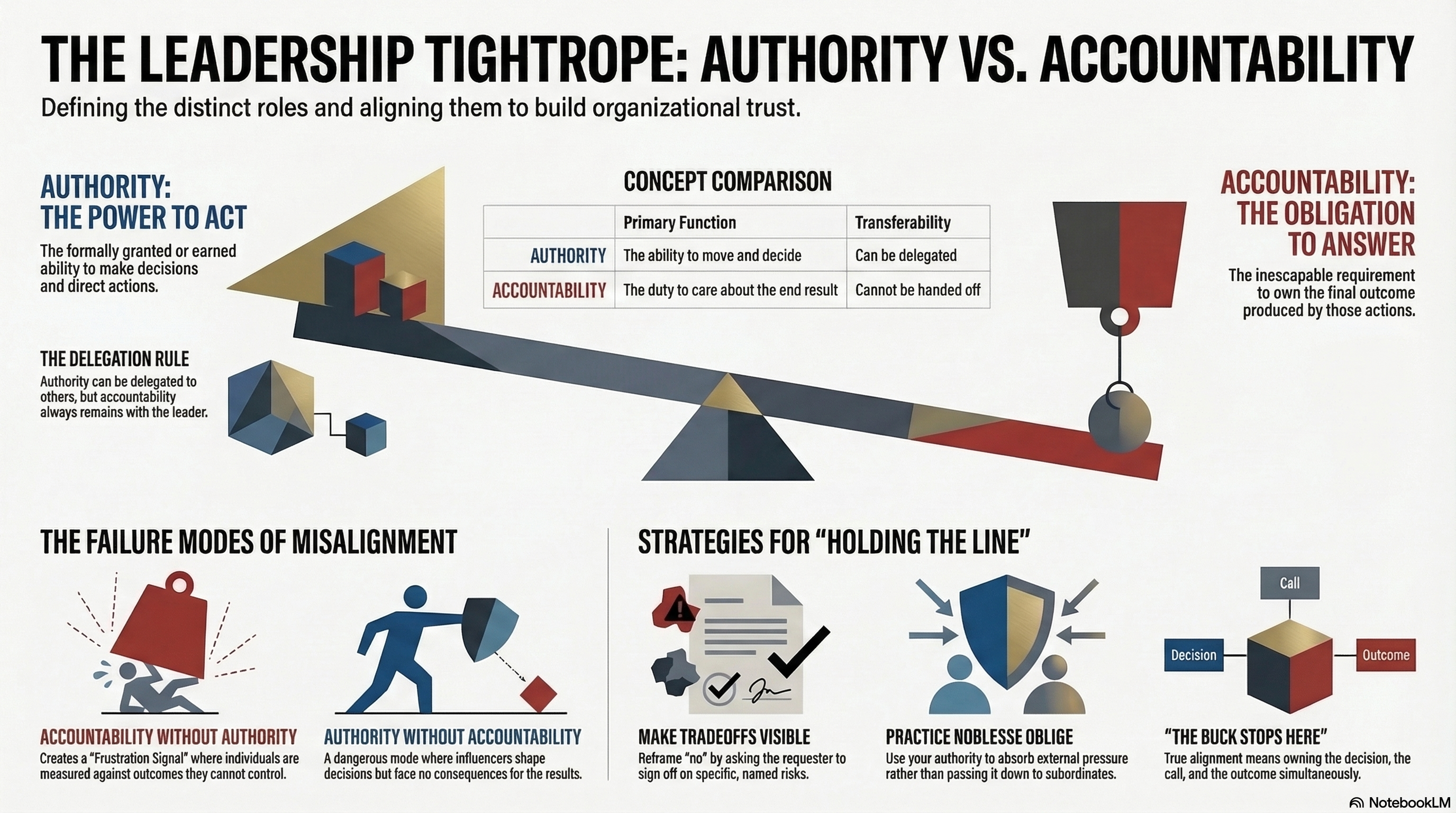

Accountability and Authority: Walking the Tightrope

Dave went home at 5:30 Friday with a clean fix and a fast-follow plan. But the story wasn't over. Monday morning, the VP of Sales showed up with a calendar invite and a commitment already made to a key account. What happened in that meeting is a live demonstration of authority, accountability, and what it actually looks like when a leader holds the line.

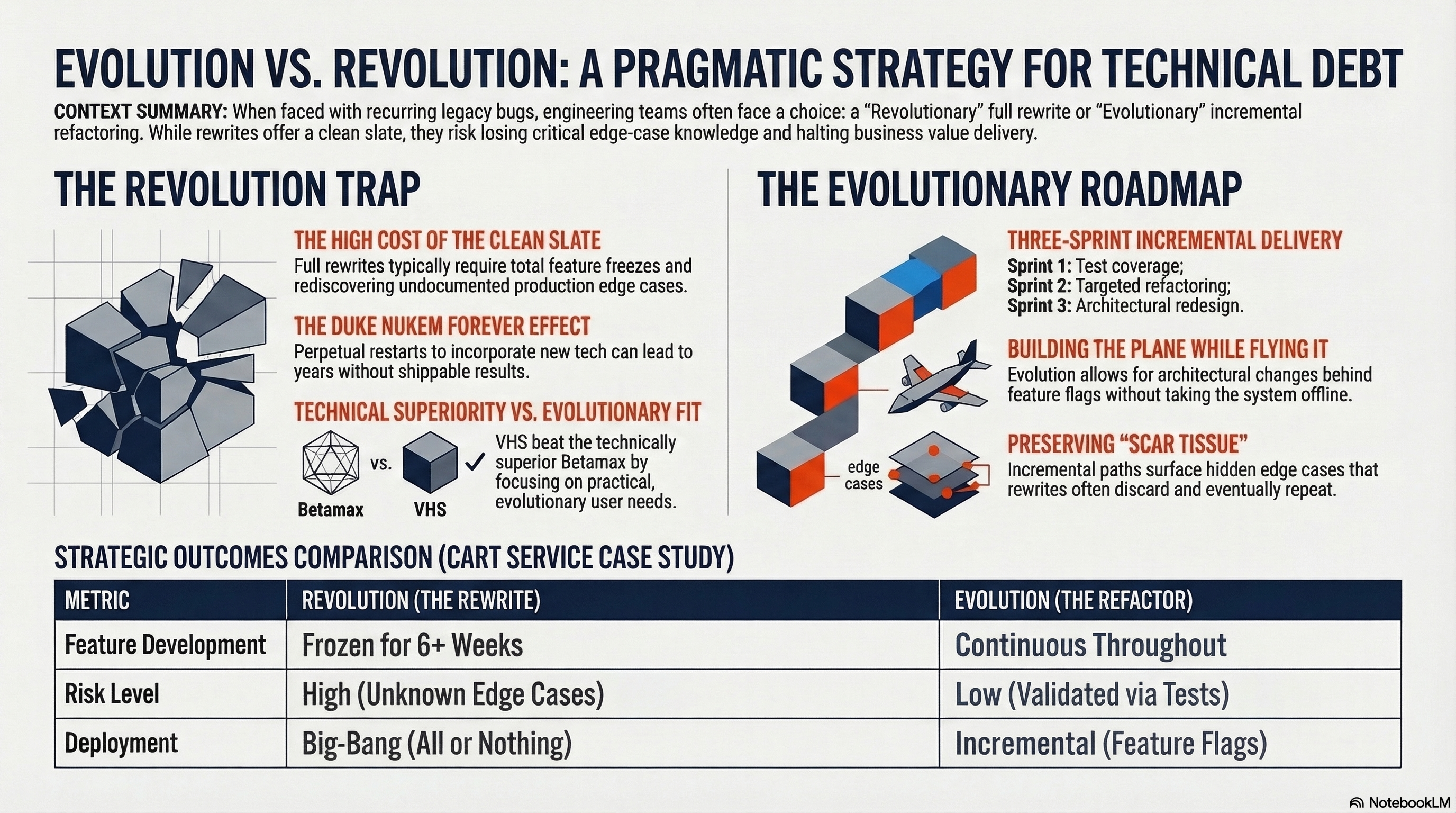

Evolution over Revolution: A Pragmatic Approach

After the third caching bug in six months, Dave arrives at the sprint retrospective with a proposal — a full rewrite of the cart service. The frustration is legitimate. But Jordan, a senior developer, asks one question that changes everything — "What does rewrite actually mean, exactly?" What follows is a whiteboard conversation, a cautionary tale from Duke Nukem Forever, and three sprints that delivered more than a rewrite would have.

From Features to Outcomes: Keeping Your Eye on the Prize

Features are easy to count. Outcomes are harder to measure but they're the only thing that actually matters. This article examines the distinction between what a project delivers and what it achieves, why that gap is where most project value gets lost, and what it looks like in practice to keep your team focused on the prize rather than the checklist.

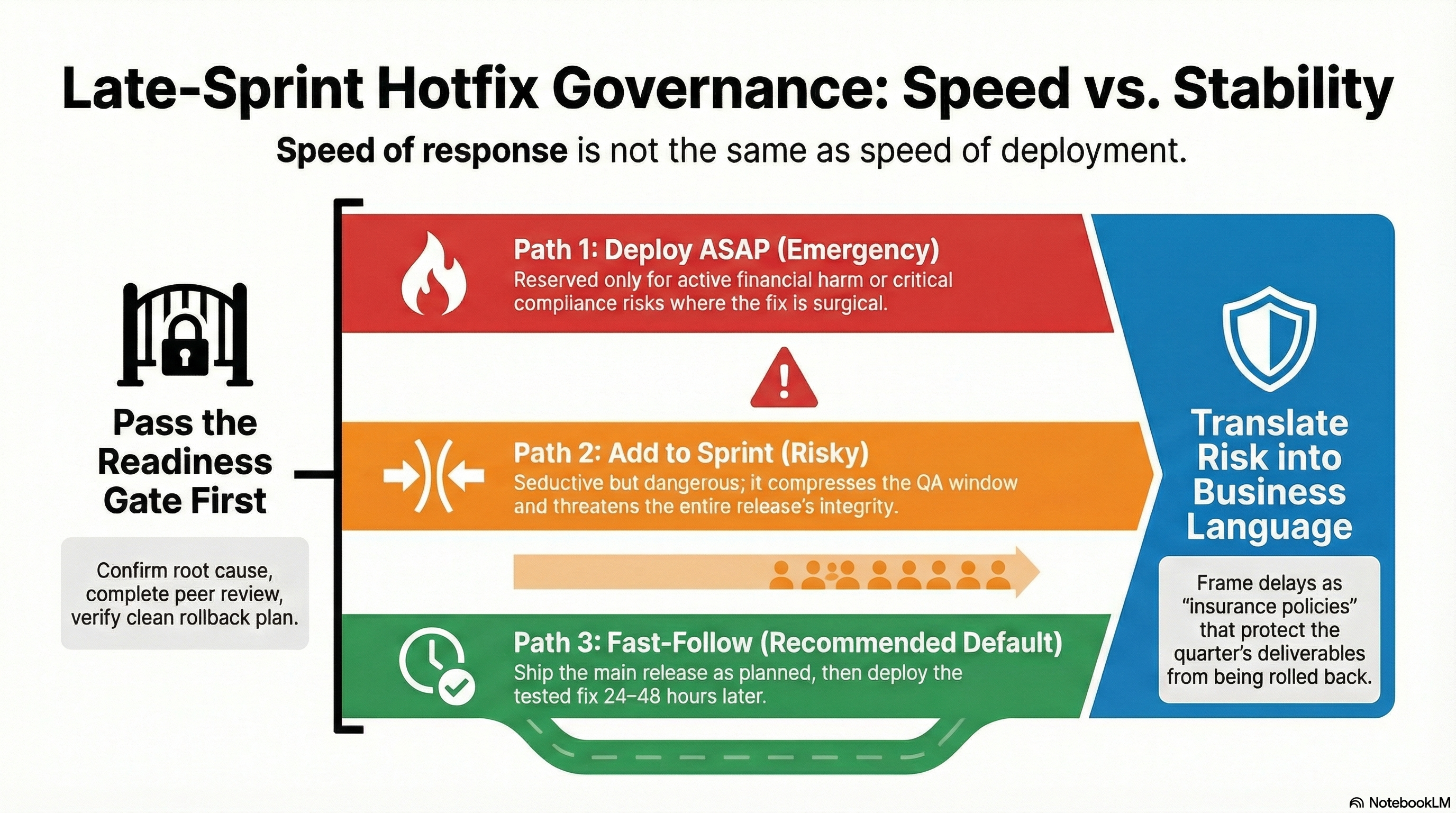

When the Pressure is On - Late Sprint Hotfix Governance

Late-sprint defects create intense pressure to rush fixes into production. But speed of response isn't the same as speed of deployment. This article explores a governance framework that balances customer impact, release stability, and team sanity—introducing the various drivers and gates that make late-sprint hotfix decisions defensible, repeatable, and rational.

SupportSpark: A Lightweight Support Network Without the Noise

SupportSpark is a lightweight, privacy-focused web application that asks a simple question — do we really need social media to keep a support network informed? Built with React 19, Express 5, and TypeScript, it strips away ads, algorithms, and noise to provide a clean process for sharing updates during difficult times.

DevSpark: The Evolution of AI-Assisted Software Development

DevSpark evolved from a greenfield planning tool into a governance framework for AI-assisted development. This overview tracks the progression from requirements-first principles through constitution-based PR reviews, brownfield discovery, adaptive lifecycle management, and automated upstream sync.

Fork Management: Automating Upstream Integration

When you fork an open-source project to add significant enhancements, staying synchronized with upstream improvements while preserving your innovations is a classic dilemma. DevSpark solves this with automated upstream synchronization using intelligent scripts, decision criteria frameworks, and AI-assisted integration planning.

From Oracle CASE to Spec-Driven AI Development

From Oracle CASE repositories in the 90s to AI-powered DevSpark today, this is a personal journey through four decades of model-driven development. Learn how the industry cycled from structure to speed and back to synthesis, and what Monday-morning practices you can adopt now.

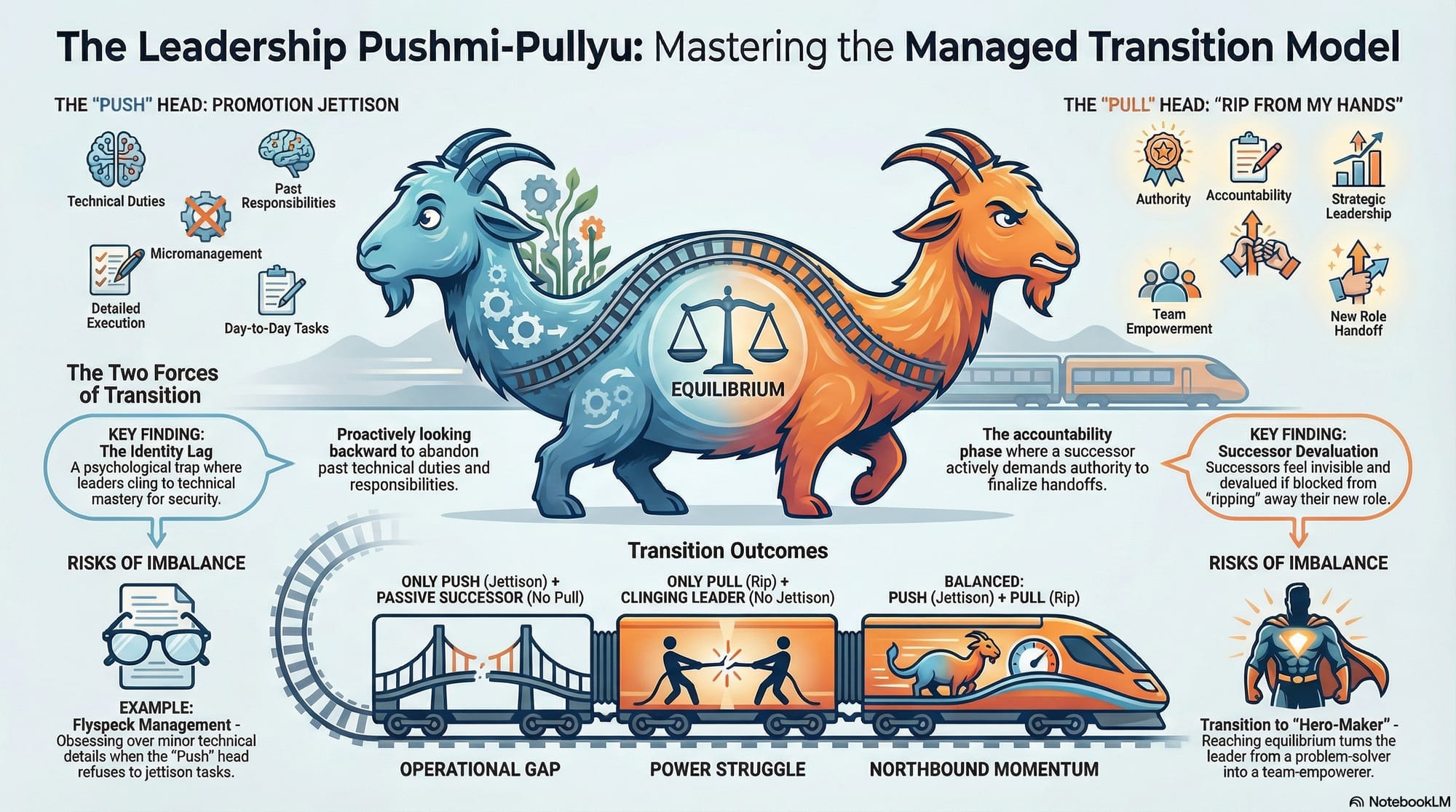

The Managed Transition Model: Leadership Promotion as Power Exchange

Leadership promotions are often celebrated as milestones, but the real work begins in the transition of authority, identity, and responsibility. The Managed Transition Model, grounded in MEMC's Model-Netics, reframes promotion as a coordinated exchange of power between outgoing and incoming roles.

UISampleSpark: Modern DevOps as a Living Reference

Writing code is only half the story. This final article in the UISampleSpark series traces the operational journey from manual builds to fully automated pipelines spanning Docker containerization, three-workflow CI/CD, security scanning, and multi-platform cloud deployment.

UISampleSpark: Seven UI Paradigms, One Backend

Most tutorial projects demonstrate one way to build a web interface. UISampleSpark asks a different question — what if we demonstrated all of them? Seven radically different frontend approaches, the same backend API, the same data model, compared side by side.

UISampleSpark: Constitution-Driven Development

For nearly seven years, UISampleSpark operated on implicit rules. In February 2026, a constitution-driven approach powered by AI agents analyzed the codebase, surfaced unwritten conventions, formalized 11 principles with 30 enforceable requirements, and resolved three critical compliance gaps.

UISampleSpark: Seven Years of .NET Modernization

Since Microsoft unified .NET under a single platform, a new major version ships every November. UISampleSpark adopted every release deliberately, documenting the friction points and upgrade strategies that real-world teams encounter across seven major migrations.

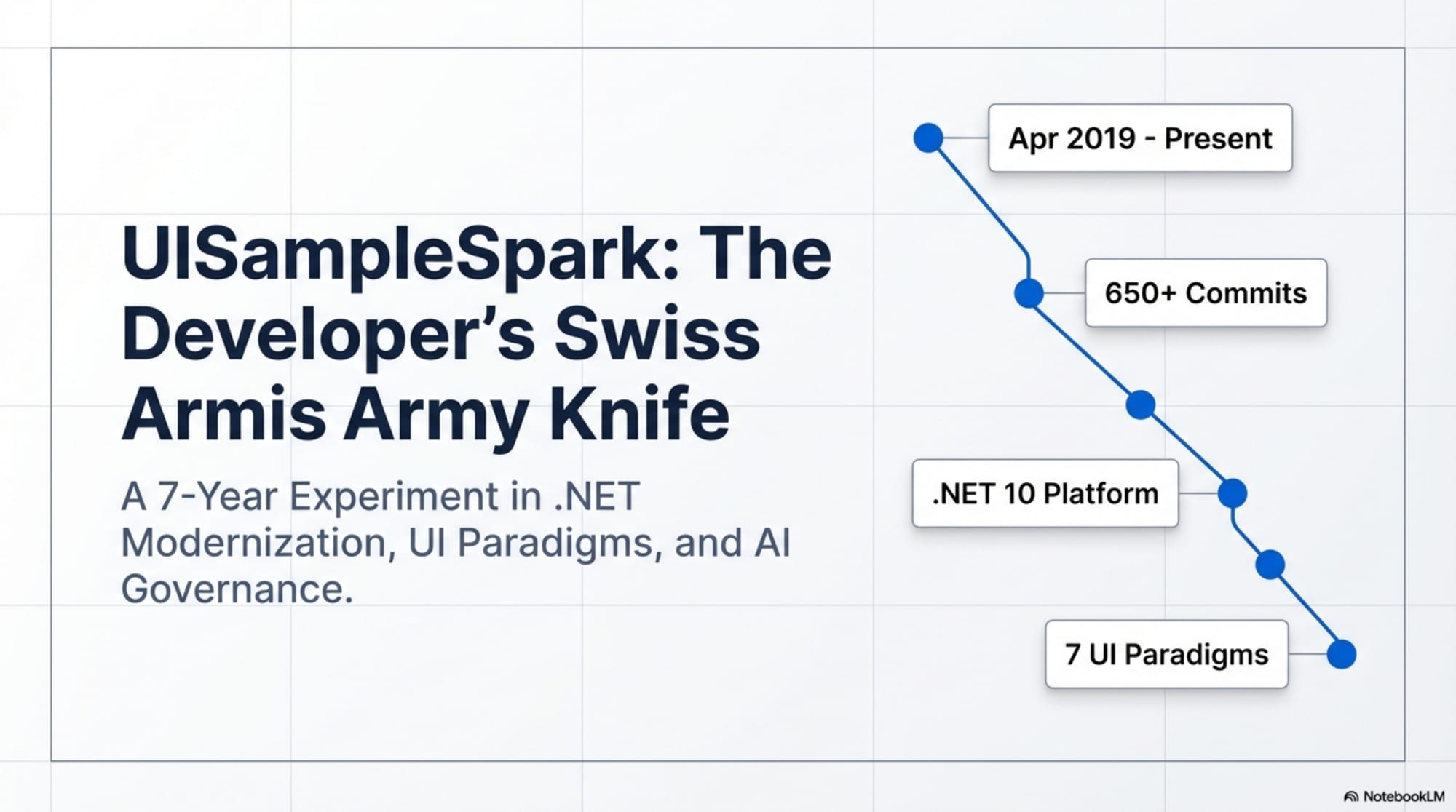

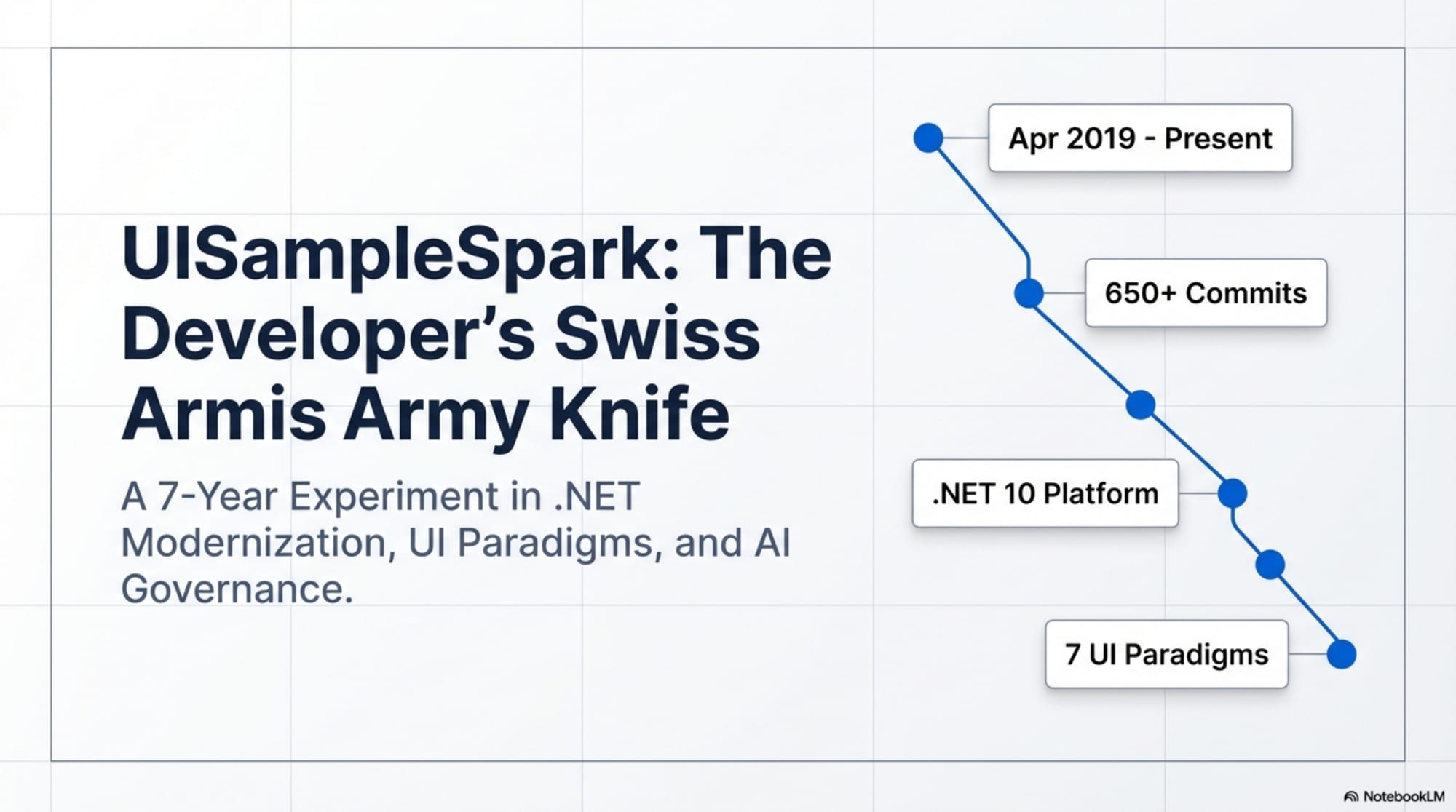

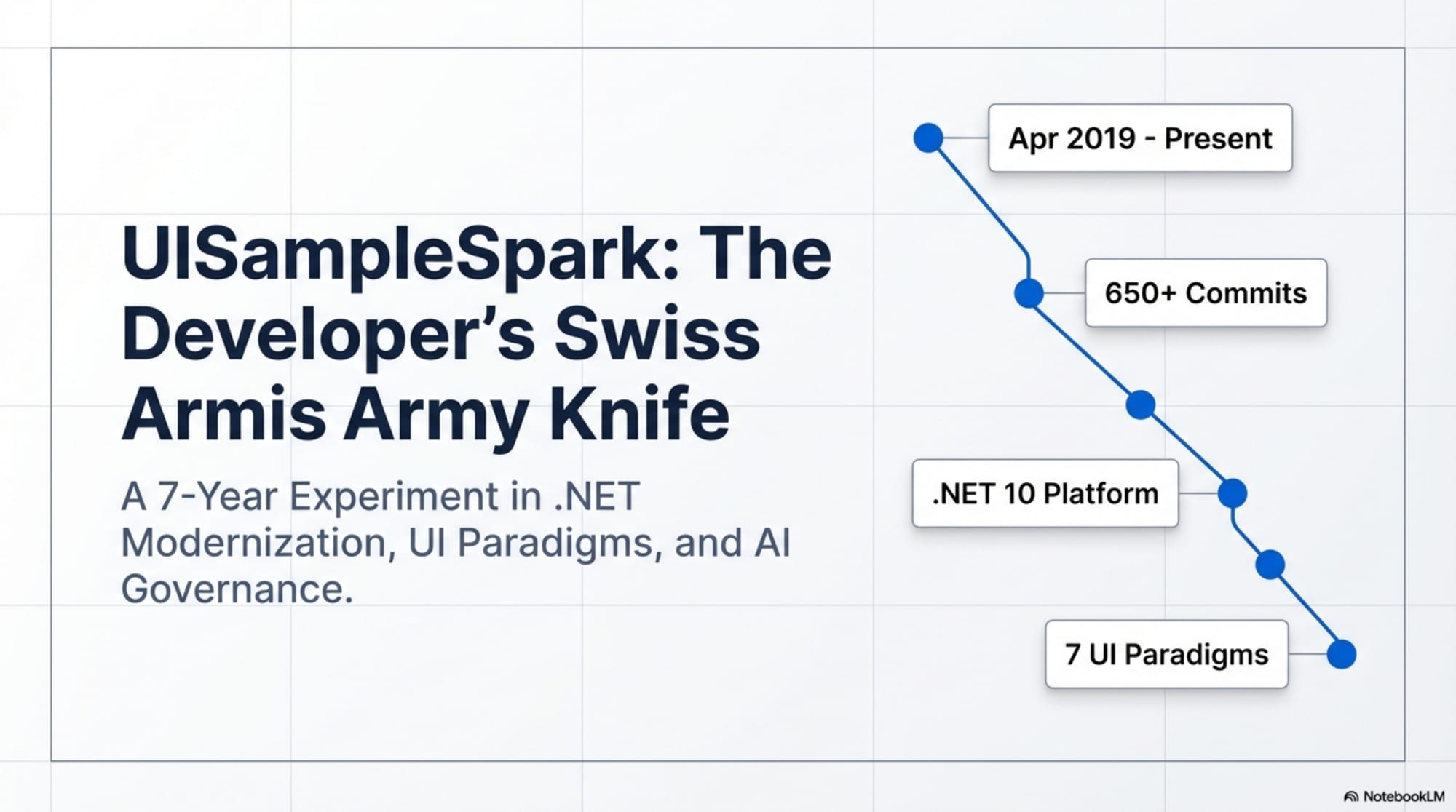

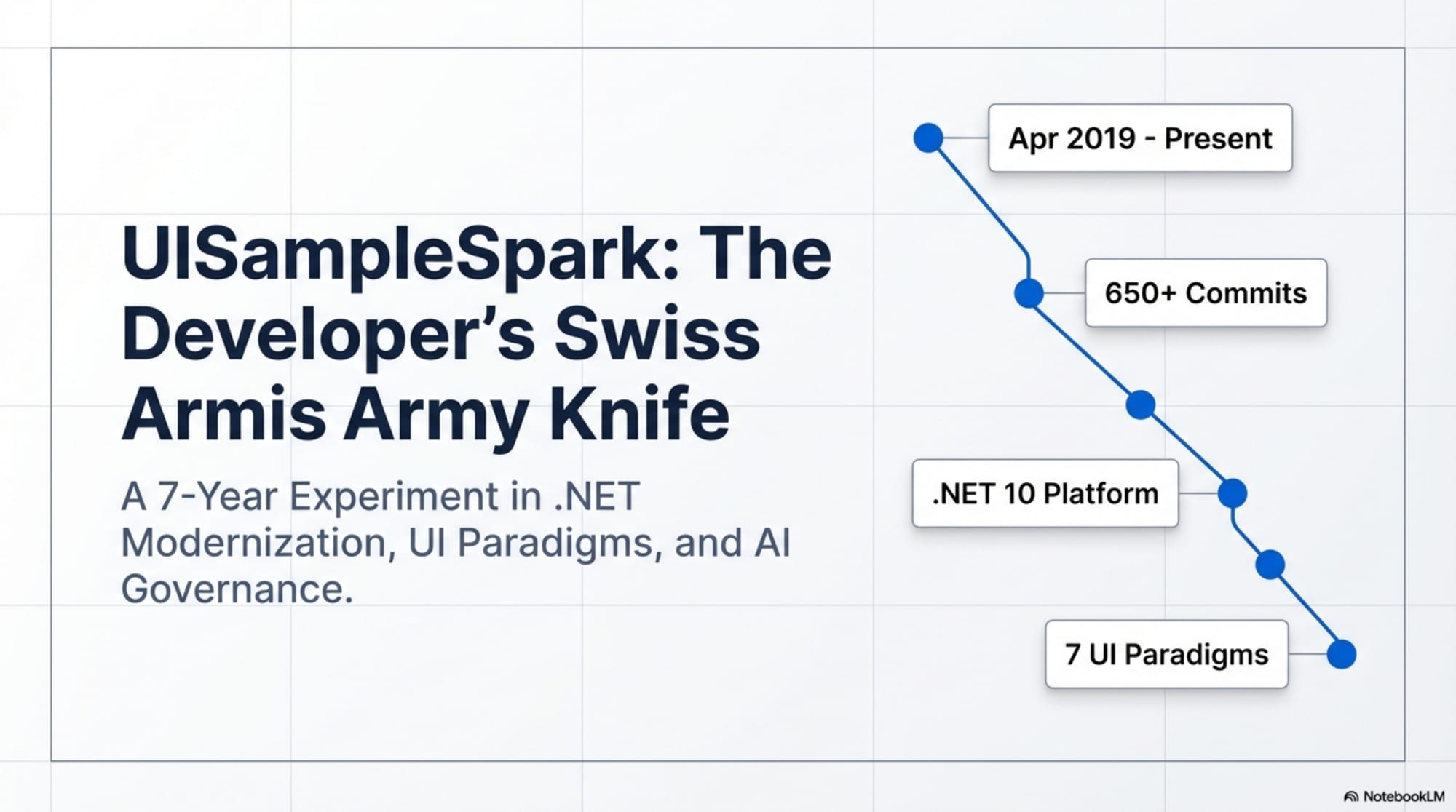

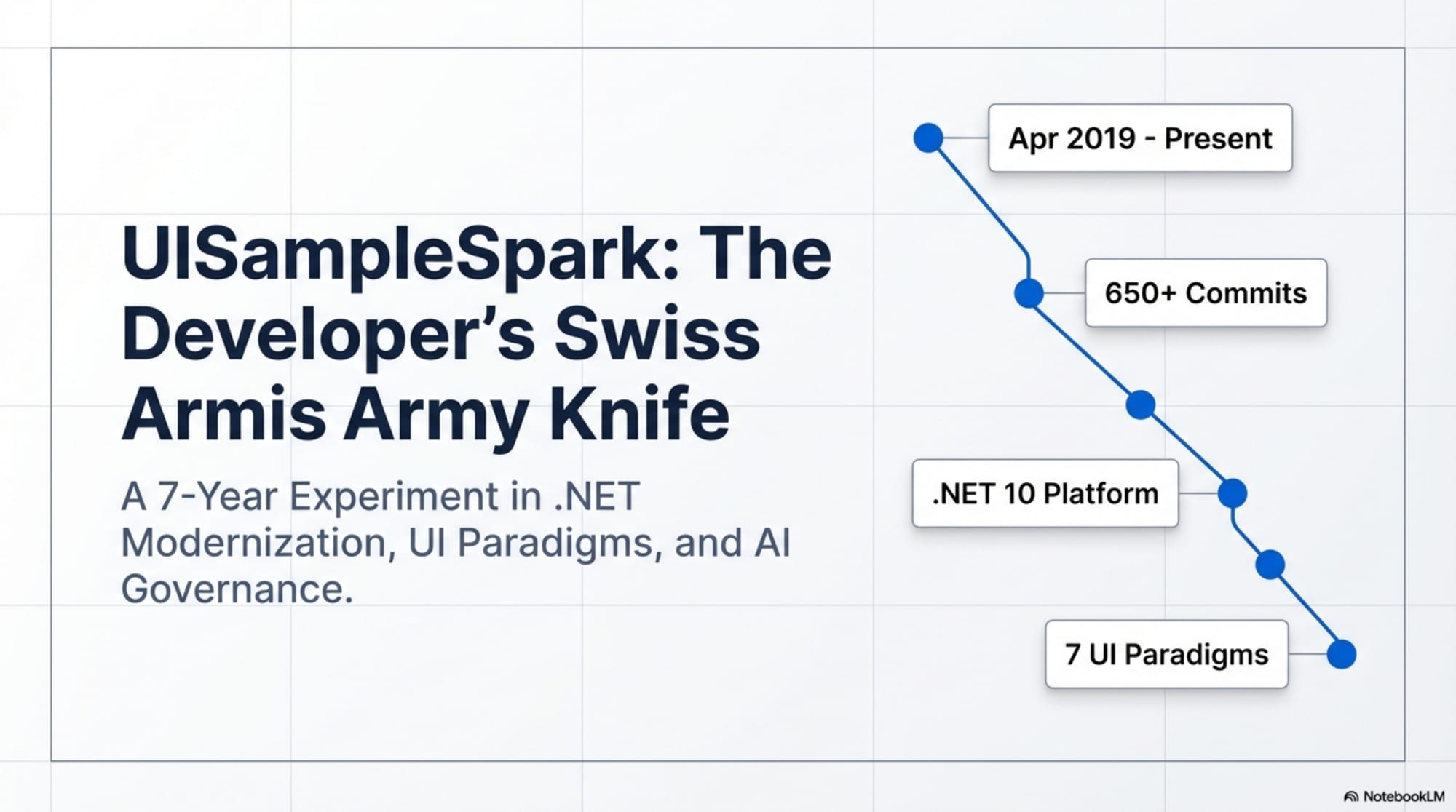

UISampleSpark: A Developer's Swiss Army Knife

In April 2019, the first commit established a simple CRUD reference project originally called SampleMvcCRUD. Over seven years and more than 650 commits, it evolved into UISampleSpark — a comprehensive educational platform spanning seven UI paradigms, cloud-native architecture, and AI-assisted governance.

Taking DevSpark to the Next Level

From EDS mainframes to AI coding agents—introducing the Adaptive System Lifecycle Development Toolkit that bridges rigorous enterprise methodology with modern AI-assisted development. Learn how to balance structure with innovation, maintain quality without rigidity, and make your project constitution valuable throughout the entire development lifecycle.

Why I Built DevSpark

An exploration of why I built DevSpark — driven by the personal struggle of keeping existing codebases aligned with architectural standards long after the initial specification phase.

Thinking About Stack Overflow Made Me Ponder the Real Lessons of Disruption

Thinking about Stack Overflow made me ponder the deeper lessons of how organizations respond to market disruption. Stack Overflow did pivot—it just didn't pivot in a way that preserved the developer Q&A community that defined its cultural relevance.

DevSpark: Constitution-Based Pull Request Reviews

Every mature codebase accumulates institutional knowledge that lives in scattered places. This article explores how to use DevSpark to perform AI-powered pull request reviews that validate changes against a project constitution—a living document capturing architectural principles, anti-patterns, and non-negotiable standards.

Safely Launching a New MarkHazleton.com

A detailed account of migrating MarkHazleton.com to a modern React-based static site, solving critical SEO crawlability issues, implementing build tracking, and safely switching production domains between Azure Static Web Apps.

Building MuseumSpark - Why Context Matters More Than the Latest LLM

A deep dive into building MuseumSpark, showing how a modular, context-first architecture with smart caching reduced LLM costs by 67% while improving accuracy from 29% to 95%. Learn why gathering evidence before asking LLMs to judge beats trying to use them as researchers.

Building a Quick Estimation Template When You Have Almost Nothing to Go On

A three-pillar framework — Innovation, Scope, and People — for estimating quickly when requirements are vague and deadlines are tight.

Getting Started with DevSpark: Requirements Quality Matters

Bad requirements produce bad code—this was true with humans and is exponentially worse with AI. Vague prompts force AI to guess at thousands of unstated constraints, generating code that looks right but fails under real-world conditions. DevSpark addresses this through structured phases: Constitution guardrails, mandatory clarification loops, discrete pipeline gates, and human verification. Requirements quality matters more than coding speed.

Building Git Spark: My First npm Package Journey

The story of creating git-spark, my first npm package, from frustration to published tool. Learn the limits of Git analytics and the value of honest metrics.

Measuring AI's Contribution to Code

Artificial Intelligence is reshaping the software development landscape by enhancing productivity, improving code quality, and fostering innovation. This article delves into the metrics and tools used to measure AI's impact on coding.

Modernizing Client Libraries in a .NET 4.8 Framework Application

Modernizing client libraries in a .NET 4.8 framework application is essential for maintaining performance, security, and compatibility. This article provides a step-by-step guide to updating and optimizing your codebase.

Creating a PHP Website with ChatGPT

Discover how to create a PHP website with ChatGPT integration. This guide covers setup, API access, and frontend interaction to enhance user engagement.

Evolving PHP Development

PHP has been a cornerstone of web development for decades. This article explores its evolution, highlighting significant advancements and emerging trends that keep PHP relevant.

Hotfix Prioritization Matrix & Decision Framework

In software development, addressing bugs quickly is vital. This article introduces a Hotfix Prioritization Matrix and Decision Framework to help prioritize critical issues efficiently.

TailwindSpark: Ignite Your Web Development

TailwindSpark is your ultimate guide to mastering Tailwind CSS and Spark frameworks. Learn how to enhance your web development skills and create stunning, responsive designs with this powerful combination.

The Building of React-native-web-start

React-native-web-start is designed to streamline web and mobile app development using React Native. This article explores its creation, challenges, and benefits.

Mastering LLM Prompt Engineering

Unlock the full potential of Large Language Models like ChatGPT, Claude, and Gemini by mastering prompt engineering, context strategies, and best practices for AI-powered conversations and code generation.

Mastering Blog Management Tools

I built a custom CMS after repetitive publishing tasks kept stealing time from writing. This article walks through the architecture and trade-offs, then connects them to lessons from Web Project Mechanics.

Harnessing the Power of Caching in ASP.NET

Caching is essential for optimizing ASP.NET applications. This article explores how to use MemoryCacheManager to implement effective caching strategies, improving performance and scalability.

Exploring Microsoft Copilot Studio

Microsoft Copilot Studio enters the no-code AI assistant category with full Microsoft 365 integration. Real value only becomes clear after hands-on testing against concrete use cases.

English: The New Programming Language of Choice

English has always shaped how we write software, but with LLMs it now directly shapes what software gets produced. This article explores why prompt and context engineering are practical language skills, not just AI buzzwords.

ChatGPT Meets Jeopardy: C# Solution for Trivia Aficionados

Explore how the integration of ChatGPT and C# creates a unique trivia experience using Jeopardy questions. This project blends data analysis with interactive quizzes, showcasing the power of .NET.

My Journey as a NuGet Gallery Developer and Educator

Spending time in NuGet from both sides — publishing WebSpark.HttpClientUtility and teaching others how to package well — changed how I think about what "good" looks like for a small library. The lessons are less about packaging mechanics and more about empathy for the consumer.

NuGet Packages: Benefits and Challenges

NuGet packages are essential for .NET developers, offering ease of integration and robust community support. However, they come with challenges like dependency management and security risks. This article explores these aspects in detail.

Sidetracked by Sizzle: Staying Focused on True Value

"Sidetracked by Sizzle" is a phrase I use as a personal compass: a reminder that the most impressive-looking solution and the most valuable one are often different things. This article explains where the phrase comes from, what it means in practice, and why I keep it in my professional profiles as a standing commitment to evaluate technology on outcomes rather than appeal.

Building TeachSpark: AI-Powered Educational Technology for Teachers

TeachSpark was built to reduce worksheet-preparation time while keeping instructional intent in the teacher's hands. This article breaks down the architecture, integration approach, and implementation trade-offs.

AI Observability Is No Joke

How a simple AI joke request revealed critical observability gaps, why transparency matters in AI systems, and practical steps to implement better monitoring in your AI agents.

Architecting Agentic Services in .NET 9: Semantic Kernel

This guide explores the architecture of agentic AI services using .NET 9 and Microsoft Semantic Kernel. Learn about instruction engineering, security patterns, and enterprise-ready strategies.

Building ArtSpark: Where AI Meets Art History

Discover how ArtSpark combines AI and art history, allowing users to interact with artworks through a platform built with .NET 9, Microsoft Semantic Kernel, and GPT-4 Vision. This article explores the creation, challenges, and future developments of ArtSpark.

TaskListProcessor - Enterprise Async Orchestration for .NET

Explore TaskListProcessor, an enterprise-grade .NET 10 library for orchestrating asynchronous operations. Learn about circuit breakers, dependency injection, interface segregation, and building fault-tolerant systems with comprehensive telemetry.

From README to Reality: Teaching an Agent to Bootstrap a UI Theme

A smart NuGet README and VS Code's agent mode can collapse what was a tedious manual setup — install package, register services, scaffold layout, swap themes — into a single intent expressed in plain English. WebSpark.Bootswatch is a working example of what that looks like end to end.

The New Era of Individual Agency: How AI Tools Empower Self-Starters

Artificial intelligence is transforming individual agency by making advanced capabilities accessible to all. This article explores how AI tools empower self-starters.

ReactSpark: A Comprehensive Portfolio Showcase

ReactSpark is a modern, responsive portfolio website built using React 19 and TypeScript. It demonstrates contemporary web development best practices including strong typing, component-based architecture, and API integration within the WebSpark ecosystem.

Pedernales Cellars Winery in Texas Hill Country

Located in Stonewall, Texas, Pedernales Cellars is known for crafting award-winning Spanish and Rhône-style wines from 100% Texas-grown grapes. Run by sixth-generation Texans, the winery blends traditional values with modern environmental responsibility.

The Impact of Input Case on LLM Categorization

Large Language Models (LLMs) are sensitive to the case of input text, affecting their tokenization and categorization capabilities. This article delves into how input case impacts LLM performance, particularly in NLP tasks like Named Entity Recognition and Sentiment Analysis, and discusses strategies to enhance model robustness.

AI-Assisted Development: Claude and GitHub Copilot

Claude and GitHub Copilot can improve development speed, but they introduce different risks and workflow trade-offs. This article compares where each tool helps and where stronger review discipline is required.

AI and Critical Thinking in Software Development

The most useful thing about AI tooling in software development is also the most worth watching carefully — it makes the work feel easier. But easier isn't always the same as better, and the cognitive habits that produce good judgment don't stay sharp on their own. This article explores the paradox at the center of AI-augmented development and what intentional augmentation actually looks like in practice.

DevSpark: Constitution-Driven AI for Software Development

DevSpark aligns AI coding agents with project architecture and governance through a constitution-driven toolkit for the full software development lifecycle.

The Creation of ShareSmallBiz.com: A Platform for Small Business Success

In today's competitive market, small businesses often struggle to keep up with larger corporations due to limited resources and marketing budgets. Enter ShareSmallBiz.com, a revolutionary platform designed to level the playing field by offering collaborative marketing tools and shared resources. This article delves into the creation and impact of ShareSmallBiz.com, exploring how it empowers small businesses to achieve success.

Kendrick Lamar's Super Bowl LIX Halftime Show

Kendrick Lamar's Super Bowl LIX halftime performance was a profound societal commentary delivered through metaphorical visuals and thought-provoking stage design.

Riffusion AI: Revolutionizing Music Creation

Riffusion AI shows how diffusion models can support composition workflows by turning text prompts into musical structure. This article examines where it helps, where it falls short, and what that means in day-to-day music work.

Harnessing NLP: Concepts and Real-World Impact

A deep exploration of Natural Language Processing—its core techniques, the distinction between NLP and LLMs, real-world applications across industries, and a timeline of key milestones from the Turing Test to GPT-4.

Computer Vision in Machine Learning

Computer vision is reshaping industries by enabling machines to interpret visual data. This article explores its applications, challenges, and future potential.

Generate Wiki Documentation from Your Code Repository

Creating detailed documentation is crucial for any code repository. This guide will walk you through the process of generating wiki documentation directly from your code repository, enhancing project transparency and collaboration.

Decorator Design Pattern - Adding Telemetry to HttpClient

Master the Decorator Pattern to enhance HttpClient functionality with telemetry, logging, and caching capabilities while maintaining clean, maintainable code architecture in ASP.NET Core.

Getting Started with PUG: History and Future

PUG, a high-performance template engine for Node.js, has a rich history and a promising future. This article delves into its origins, features, and community, providing insights into its ongoing development and future prospects.

Adapting with Purpose: Lifelong Learning in the AI Age

Lifelong learning is less about collecting credentials and more about adapting to real workflow change. This article examines how AI supports that process and where caution is still required.

Understanding Neural Networks

Neural networks are a cornerstone of modern artificial intelligence, mimicking the way human brains operate to process information. This guide aims to introduce the basic concepts of neural networks, their architecture, and their applications.

Creating a Law & Order Episode Generator

Law & Order has run for so long that fans on Reddit have effectively annotated the entire pattern of an episode. PromptSpark made it possible to feed that community knowledge into a GPT model and see whether it could capture what makes the format work.

The Transformative Power of MCP

The Model Context Protocol (MCP) is an open standard for connecting artificial intelligence systems to external tools and data sources, so they can work with the context each task requires. That connection is what makes it useful for automating repetitive tasks and enhancing business intelligence processes.

OpenAI Sora: First Impressions and Impact

OpenAI Sora is a groundbreaking platform that uses AI to simplify video generation. This article explores its features and potential impact on creative industries.

A Full History of the EDS Super Bowl Commercials

As a former EDS employee, I have a personal appreciation for the Super Bowl commercials from the early 2000s. They captured core IT project pressures through humor and memorable metaphors.

Using NotebookLM, Clipchamp, and ChatGPT for Podcasts

Creating a podcast can be a daunting task, but with the right tools, it becomes a seamless and enjoyable experience. In this guide, we will explore how to use NotebookLM, Microsoft Clipchamp, and ChatGPT to produce high-quality podcast episodes for your Deep Dive playlist.

Workflow-Driven Chat Applications Powered by Adaptive Cards

Explore how to design workflow-driven chat applications using Adaptive Cards to enhance AI interactivity and structured conversations. Discover the benefits and implementation strategies.

Interactive Chat in PromptSpark With SignalR

In this guide, we will explore how to implement a real-time, AI-driven chat application using PromptSpark. By leveraging ASP.NET SignalR and OpenAI's GPT via Semantic Kernel, you can create a dynamic and interactive chat experience.

Building Real-Time Chat with React and SignalR

Learn how to build a dynamic chat application using React, SignalR, and Markdown streaming. This guide covers setting up the environment, integrating real-time messaging, and rendering Markdown content.

Windows to Mac: Broadening My Horizons

Switching from Windows to macOS can be a transformative experience. This article delves into my journey of learning to use a MacBook Pro and enhancing my tech skills, offering insights into the benefits and challenges of making the switch.

Adding Weather Component: A TypeScript Learning Journey

Wiring a weather forecast and map feature into a React Native app turned into a useful drill in TypeScript fundamentals — typed components, error handling, and the small frictions that surface when types meet real APIs.

Building My First React Site Using Vite

In this guide, we will walk you through the process of building and deploying a React site using Vite and GitHub Pages. We'll cover setup, deployment, and troubleshooting common issues like CORS.

Canonical URL Troubleshooting for Static Web Apps

Canonical URLs are crucial for SEO in static web apps. This guide explores how to manage them using Azure and Cloudflare, ensuring your content is properly indexed.

Developing MarkHazleton.com: Tools and Approach

A personal site that doubles as a portfolio has to do two jobs at once — publish content well and demonstrate the engineering choices behind it. The development of MarkHazleton.com leans on a deliberate stack and a small set of conventions that have held up over multiple rebuilds.

Exploratory Data Analysis with Python

Exploratory Data Analysis (EDA) is a crucial step in the data science process, allowing analysts to uncover patterns, spot anomalies, and test hypotheses. This guide delves into the techniques and tools used in EDA, with a focus on Python's capabilities.

Exploring Nutritional Data Using K-means Clustering

Nutritional data is a useful playground for unsupervised learning - dozens of nutrient dimensions, no obvious labels, and a real question worth answering: what natural groupings emerge when you let the math sort foods rather than the food pyramid?

Python: The Language of Data Science

Python has become integral to data science due to its simplicity and powerful libraries. This article explores its history, key libraries, and why it's favored by developers.

Fixing a Runaway Node.js Recursive Folder Issue

Node.js applications can sometimes create infinite recursive directories due to improper recursion handling. This article provides solutions to fix the issue and includes a C++ program for cleanup.

Troubleshooting and Rebuilding My JS-Dev-Env Project

Rebuilding a JavaScript dev environment from scratch is one of those exercises that feels wasteful until something breaks badly enough to force the issue. Going through it with Node.js, Nodemon, ESLint, Express, and Bootstrap surfaced the small assumptions that had quietly drifted out of date.

Data Science for .NET Developers

In today's tech landscape, data science is crucial for developers. This article explores why a .NET developer pursued UT Austin's AI/ML program and its impact.

Syntax Highlighting with Prism.js for XML, PUG, YAML, and C#

Syntax highlighting is central to code readability and presentation. This guide covers implementing it for XML, PUG, YAML, and C# with Prism.js, and automating the bundling process with render-scripts.js.

Automate GitHub Profile with Latest Blog Posts

Keeping your GitHub profile updated with the latest content can be a tedious task. However, with the power of GitHub Actions and Node.js, you can automate this process, ensuring your profile always reflects your most recent blog posts.

The Brain Behind JShow Trivia Demo

The JShow Trivia Demo on WebSpark is powered by the innovative J-Show Builder GPT, an AI tool that simplifies the creation of engaging trivia games. Discover its development journey and impact on the platform.

Migrating to MarkHazleton.com: A Comprehensive Guide

Moving a blog from one domain to another is mostly a DNS exercise — until it isn't. Migrating from markhazleton.controlorigins.com to markhazleton.com on Azure Static Web Apps with Cloudflare DNS surfaced the small details that decide whether a cutover is clean or quietly breaks SEO.

The Singleton Advantage: Managing Configurations in .NET

Managing configuration efficiently matters for application performance and security. This article covers the advantages of the singleton pattern for configuration management in .NET Core, including lazy loading, thread safety, and secure access to Azure Key Vault.

Building Resilient .NET Applications with Polly

Network communication is inherently unreliable — timeouts, transient faults, downstream services that hiccup at exactly the wrong moment. Polly with HttpClient turns retries, timeouts, and circuit breakers from one-off code into a composable resilience pattern.

Accelerate Azure DevOps Wiki Writing

Azure DevOps wikis serve as a central repository for project documentation, but writing and maintaining them is time-consuming. Azure Wiki Expert GPT is a tool designed to streamline creating and updating Azure DevOps wiki content.

WebSpark: Transforming Web Project Mechanics

WebSpark, developed by Mark Hazleton, is revolutionizing web project mechanics by providing a suite of applications that enhance digital experiences. This article explores how WebSpark streamlines web development processes and improves user engagement.

Integrating Chat Completion into Prompt Spark

The integration of chat completion into the Prompt Spark project enhances user interactions by enabling seamless chat functionalities for Core Spark Variants. This advancement allows for more natural and engaging conversations with large language models.

Using Large Language Models to Generate Structured Data

Large language models like GPT-4 are transforming data structuring by automating processes and ensuring accuracy. This article explores their application in JSON recipe formatting, highlighting benefits such as enhanced productivity and cost-effectiveness.

Prompt Spark: Revolutionizing LLM System Prompt Management

Managing and optimizing prompts for large language models (LLMs) is crucial for performance and efficiency. Prompt Spark offers a suite of tools to streamline that work. This article covers its features and benefits, including its variants library, performance tracking capabilities, and prompt engineering strategies.

Taking FastEndpoints for a Test Drive

FastEndpoints offers a simplified approach to building ASP.NET APIs, enhancing efficiency and productivity. This article explores its features and benefits.

Embracing Azure Static Web Apps for Static Site Hosting

Static websites are gaining traction due to their speed, security, and simplicity. Azure Static Web Apps offers an efficient solution for hosting these sites, providing integrated CI/CD, global reach, and built-in authentication.

Creating a Key Press Counter with ChatGPT

A key press counter sounds trivial — until you start asking what the data is for, who can see it, and where the line sits between productivity tooling and surveillance. Building one with ChatGPT made both the technical setup and the ethics surprisingly concrete.

From Concept to Live: Unveiling WichitaSewer.com

Creating a website involves meticulous planning and execution. This article explores the journey of WichitaSewer.com from concept to live launch, highlighting key insights and lessons learned.

The Balanced Equation: Crafting the Perfect Project Team Mix

In today's fast-paced business environment, assembling the right project team is crucial for success. The perfect mix of internal employees and external consultants can lead to innovative solutions and efficient project execution. This article explores how to achieve this balance and why it's essential.

Fire and Forget for Enhanced Performance

The Fire and Forget technique is a powerful method to enhance API performance by allowing tasks to proceed without waiting for a response. This approach is particularly beneficial in scenarios like Service Bus updates during user login, where immediate feedback is not required, thus improving overall system efficiency.

The Art of Making Yourself Replaceable: A Guide to Career Growth

A third-grader once asked me if I couldn't keep a job. After laughing, I explained that making yourself replaceable is how you stay ready for the next big challenge — and why the greatest compliment a developer can receive is hearing that something they built is still running years later.

Guide to Redis Local Instance Setup

Setting up a Redis local instance can significantly enhance your application's performance. This guide walks you through the process, ensuring you configure Redis for maximum efficiency and reliability.

Concurrent Processing in C#

Learn concurrent processing through hands-on C# development. Explore SemaphoreSlim, task management, and best practices for building scalable multi-threaded applications.

Understanding System Cache: A Comprehensive Guide

System cache is crucial for speeding up processes and improving system performance. This guide explores its types, functionality, and benefits, along with management tips.

The Power of Lifelong Learning

The field keeps moving. The technologies shift, the practices evolve, the problems get more complex. Lifelong learning isn't a scheduled activity — it's the posture that keeps you genuinely useful over time. This article explores what that looks like in practice, across the different modes of learning that have mattered most in a long career in software.

Mastering Git Repository Organization

Efficient Git repository organization is crucial for successful software development. This article covers strategies to improve collaboration, manage projects, and reduce errors.

Mastering Web Project Mechanics

Web projects are integral to modern business success. This guide explores the essential strategies for managing and executing web projects effectively, ensuring your projects achieve their objectives.

Mastering Data Analysis Techniques

Data analysis is a critical skill in today's data-driven world. This article explores essential techniques for analyzing data and provides practical demonstrations on how to visualize data effectively.

CancellationToken for Async Programming

Asynchronous programming allows tasks to run without blocking the main thread, but managing these tasks efficiently is crucial. CancellationToken provides a robust mechanism for task cancellation, ensuring resources are not wasted and applications remain responsive.

Git Flow Rethink: When Process Stops Paying Rent

After months of solo-maintaining a corporate API, the ceremony of Git Flow became visible as ceremony — steps without payoff. Vincent Driessen retracted his own methodology in 2020. This is what it took for me to admit he was right.

Using ChatGPT for C# Development

How ChatGPT can help you write, refactor, and document C# code more effectively, with practical examples and integration strategies.

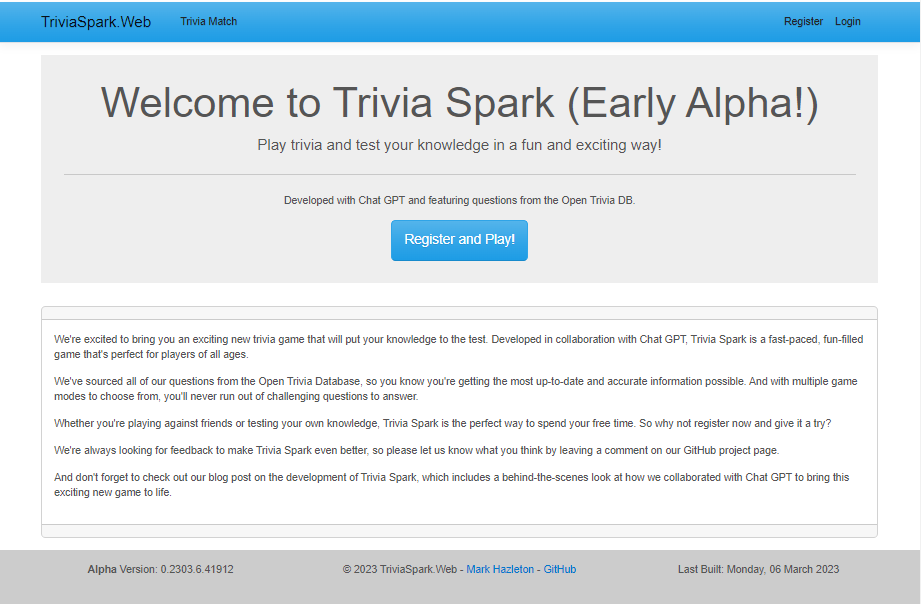

Trivia Spark: Building a Trivia App with ChatGPT

TriviaSpark started as an experiment in using ChatGPT to build a multiplayer trivia application. This article documents the collaboration, architectural decisions, and practical lessons learned from pairing with AI tools during development.

Project Mechanics

A complete project management methodology developed over 20+ years — covering the full project life cycle, portfolio governance, leadership, change management, and conflict resolution. Not blog posts; a structured, interconnected framework.