Using Large Language Models to Generate Structured Data

Large language models like GPT-4 can automate much of the work of structuring data, provided their output is validated against a schema. This article explores their application to JSON recipe formatting, covering benefits such as productivity and cost-effectiveness alongside the failure modes I ran into.

AI & Machine Learning Series — 25 articles

- Using ChatGPT for C# Development

- Trivia Spark: Building a Trivia App with ChatGPT

- Creating a Key Press Counter with Chat GPT

- Using Large Language Models to Generate Structured Data

- Prompt Spark: Revolutionizing LLM System Prompt Management

- Integrating Chat Completion into Prompt Spark

- WebSpark: Transforming Web Project Mechanics

- Accelerate Azure DevOps Wiki Writing

- The Brain Behind JShow Trivia Demo

- Building My First React Site Using Vite

- Adding Weather Component: A TypeScript Learning Journey

- Interactive Chat in PromptSpark With SignalR

- Building Real-Time Chat with React and SignalR

- Workflow-Driven Chat Applications Powered by Adaptive Cards

- Creating a Law & Order Episode Generator

- The Transformative Power of MCP

- The Impact of Input Case on LLM Categorization

- The New Era of Individual Agency: How AI Tools Empower Self-Starters

- AI Observability Is No Joke

- ChatGPT Meets Jeopardy: C# Solution for Trivia Aficionados

- Mastering LLM Prompt Engineering

- English: The New Programming Language of Choice

- Measuring AI's Contribution to Code

- Building MuseumSpark - Why Context Matters More Than the Latest LLM

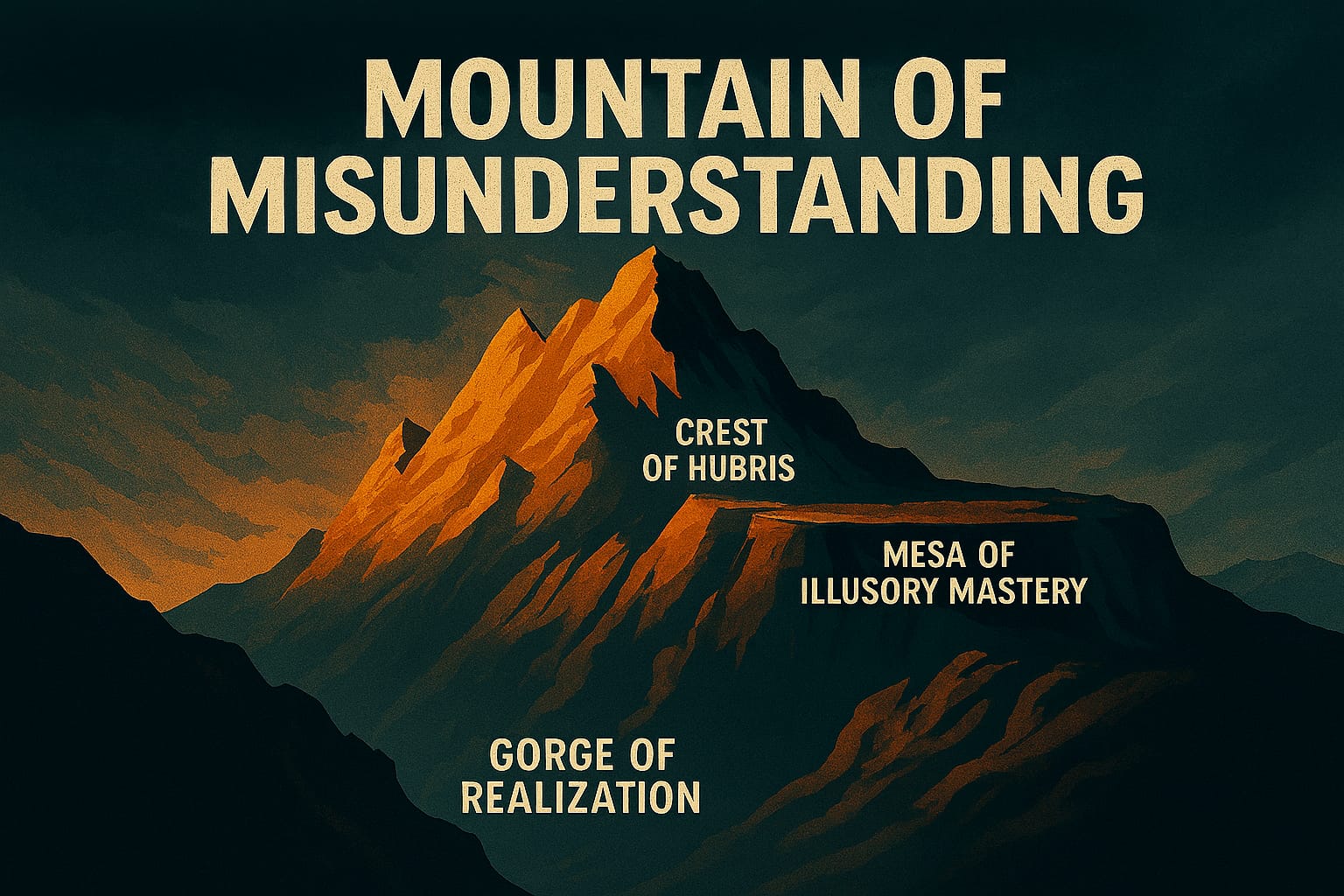

- Mountains of Misunderstanding: The AI Confidence Trap

What I Learned Using GPT-4 for Recipe JSON

On a recent project, I spent the better part of two days hand-formatting recipe data into JSON before it finally occurred to me that GPT-4 could handle it in seconds. The first attempt looked promising — until I noticed the model had invented a field called serving_suggestion_note that appeared in roughly 40% of outputs but never in my schema. That was the moment I understood the real shape of this problem: GPT-4 is genuinely good at structured output, but "good" is not the same as "consistent," and the gap between those two words is where the actual engineering work lives.

The Role of GPT-4 in Data Structuring

GPT-4 excels at generating structured data formats like JSON — JavaScript Object Notation, a lightweight interchange format that's readable by both humans and machines. What I found useful isn't just that GPT-4 knows JSON syntax; it's that the model can infer reasonable field values from unstructured source text, which removes a whole category of tedious extraction work. The catch is that "infer reasonable values" and "respect your schema exactly" are two different skills, and getting both at once requires deliberate prompt engineering.

Case Study: Mechanics of Motherhood

I worked with Mechanics of Motherhood, a platform built around structured recipes, to automate the generation of JSON-formatted recipe data using GPT-4. The goal was straightforward: take unstructured recipe content and produce consistent, machine-readable JSON at scale. Here's what I actually learned doing it — including where it failed.

The first generation pass produced plausible-looking JSON, but plausible isn't valid. The model occasionally hallucinated fields, merged ingredient quantities into the wrong array elements, and — on longer recipes — started truncating output near the context window limit. None of these were showstoppers, but each required a specific fix: tighter schema definitions in the prompt, explicit negative instructions ("do not add fields not present in the schema"), and chunking logic for longer inputs.
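
The first two fixes can be sketched as a small prompt builder. This is a minimal illustration, not the prompt used on the project: the schema fields, the example values, and the `build_prompt` helper are all hypothetical.

```python
import json

# Hypothetical schema example; the field names and values are illustrative only.
SCHEMA_EXAMPLE = {
    "title": "Weeknight Chili",
    "servings": 4,
    "ingredients": [{"name": "kidney beans", "quantity": "2 cans"}],
    "steps": ["Brown the beef.", "Simmer for 30 minutes."],
}


def build_prompt(recipe_text: str, schema_example: dict = SCHEMA_EXAMPLE) -> str:
    """Assemble a prompt with a concrete schema example and a negative instruction."""
    allowed = ", ".join(schema_example)  # top-level field names, in order
    return (
        "Convert the recipe below into a single JSON object.\n"
        f"Use exactly these top-level fields: {allowed}.\n"
        "Do not add fields not present in the schema.\n"
        "Return only JSON, with no commentary.\n\n"
        "Example of the expected shape:\n"
        f"{json.dumps(schema_example, indent=2)}\n\n"
        f"Recipe:\n{recipe_text.strip()}\n"
    )
```

Keeping the schema example in one place means the prompt and any downstream validation can be derived from the same definition rather than drifting apart.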

What I didn't expect was where the real time savings came from. It wasn't raw generation speed — it was the reduction in validation overhead once I got the prompt structure right. Early on, I was spending almost as much time QA-ing GPT-4 output as I'd spent formatting by hand. Once I locked down the prompt with a concrete schema example and added a lightweight JSON Schema validation step, the pipeline became genuinely fast. The trade-off: that upfront prompt engineering and validation scaffolding took real time to build. Anyone expecting to drop GPT-4 into a data pipeline without a validation layer is going to have a bad afternoon.
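
A lightweight validation step might look something like the sketch below. It is a hand-rolled stand-in that uses only the standard library, not a full JSON Schema validator, and the `REQUIRED_FIELDS` mapping is a hypothetical schema chosen for illustration.

```python
import json

# Hypothetical schema: field name -> expected Python type after json.loads.
REQUIRED_FIELDS = {"title": str, "servings": int, "ingredients": list, "steps": list}


def validate_recipe(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in data:
            problems.append(f"missing field: {name}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(data[name], bool) or not isinstance(data[name], expected):
            problems.append(f"wrong type for {name}")
    # Anything outside the schema is treated as a hallucinated field.
    for name in sorted(set(data) - set(REQUIRED_FIELDS)):
        problems.append(f"unexpected field: {name}")
    return problems
```

Returning a list of problems instead of raising on the first one makes it easier to log every issue with a record and decide whether to retry, repair, or route it to manual review.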

Where I've Hit Walls

Accuracy sounds straightforward until GPT-4 invents a prep_time_emoji field you never asked for. In my experience, hallucinated fields are the most common failure mode — the model is trying to be helpful and adds context it thinks belongs there. The fix is explicit: show the model your exact schema, tell it what fields are required, and tell it directly that no other fields should appear.
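
To see how often a prompt change actually reduces hallucinated fields, it helps to measure them across a batch. A minimal sketch, assuming the outputs are already parsed into dictionaries and that `ALLOWED_FIELDS` (a hypothetical schema) lists every permitted top-level field:

```python
from collections import Counter

ALLOWED_FIELDS = {"title", "servings", "ingredients", "steps"}  # hypothetical schema


def extra_field_rates(outputs: list[dict]) -> dict[str, float]:
    """Fraction of outputs in which each out-of-schema field appears."""
    counts = Counter()
    for record in outputs:
        # Count each unexpected field at most once per record.
        counts.update(set(record) - ALLOWED_FIELDS)
    total = len(outputs)
    return {name: n / total for name, n in counts.items()} if total else {}
```

Running this before and after a prompt revision gives a concrete number to compare instead of an impression from spot checks.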

The other wall I've hit is cost at scale. Running thousands of recipes through GPT-4 is not free, and the economics only work if the manual alternative is genuinely expensive. On the Mechanics of Motherhood project, the math favored automation clearly — but I've seen situations where a smaller dataset and a simpler schema made hand-formatting the cheaper option. The trade-off here is real: AI structuring saves time on volume, but the upfront engineering cost and per-token expense mean it's not automatically the right choice for every project.
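
The break-even reasoning can be written down as simple arithmetic. The sketch below is an assumption-laden model, not project data: every parameter is a placeholder to fill in with your own figures, and it ignores any per-record review time the validation step still requires.

```python
import math
from typing import Optional


def break_even_records(
    setup_hours: float,
    hourly_rate: float,
    manual_minutes_per_record: float,
    tokens_per_record: int,
    price_per_1k_tokens: float,
) -> Optional[int]:
    """Smallest record count at which automation costs no more than manual work.

    Returns None when the per-record API cost is not lower than the manual cost,
    in which case automation never pays back the setup effort.
    """
    setup_cost = setup_hours * hourly_rate
    manual_per_record = manual_minutes_per_record / 60 * hourly_rate
    api_per_record = tokens_per_record / 1000 * price_per_1k_tokens
    saving = manual_per_record - api_per_record
    if saving <= 0:
        return None
    return math.ceil(setup_cost / saving)


# Made-up inputs: 8 hours of setup at 50/hour, 3 minutes per record by hand,
# 1500 tokens per record at 0.03 per 1k tokens.
print(break_even_records(8, 50, 3, 1500, 0.03))  # 163
```

With these invented numbers a few hundred records is enough to justify the setup; a smaller dataset or a cheaper manual process shifts the answer toward hand-formatting.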

Context window limits bite on long-form content. Recipes are usually short enough to fit comfortably, but I've worked with product descriptions and technical documents where a single record approached the limit. Chunking solves this, but it adds pipeline complexity and introduces seam problems where fields split awkwardly across chunks.
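
One way to soften the seam problem is to split on paragraph boundaries and repeat a little context at each seam. The sketch below is one possible approach under simplifying assumptions: it uses character count as a rough proxy for tokens, treats `max_chars` as a soft budget, and the `chunk_paragraphs` helper is hypothetical.

```python
def chunk_paragraphs(text: str, max_chars: int, overlap: int = 1) -> list[str]:
    """Pack paragraphs into chunks, repeating `overlap` paragraphs at each seam.

    A single paragraph longer than max_chars still becomes its own oversized
    chunk; this sketch does not split inside paragraphs.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    for para in paragraphs:
        candidate = current + [para]
        if current and len("\n\n".join(candidate)) > max_chars:
            chunks.append("\n\n".join(current))
            # Carry the last `overlap` paragraphs forward so a field that
            # straddles the seam appears whole in at least one chunk.
            current = current[-overlap:] if overlap else []
            candidate = current + [para]
        current = candidate
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

The overlap means some content is processed twice, so the merge step has to deduplicate fields extracted from repeated paragraphs. That is part of the pipeline complexity chunking brings.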

Benefits of Using AI for Structured Data

- Enhanced Productivity: What I've found is that AI models process and organize data faster than manual methods — but only after the prompt and validation infrastructure is solid. The first hour is slower than doing it by hand.

- Improved Data Quality: Consistent formatting and reduced human error lead to higher quality data, assuming the validation layer catches the model's mistakes before they propagate downstream.

- Cost-Effectiveness: Automation reduces manual labor costs at volume. The break-even point depends on schema complexity and how much validation work the output requires.

Future of AI in Data Structuring

As AI models continue to improve, I expect the validation overhead to shrink — not disappear, but shrink. The direction I'm watching is better tool-use and function-calling interfaces, where the model is constrained to output that passes schema validation before it ever reaches my pipeline. That changes the economics meaningfully. The same pipeline architecture I built for Mechanics of Motherhood should handle increasingly complex structuring tasks as those interfaces mature, without fundamental redesign.
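
As a rough illustration of that direction, the schema can be expressed once as JSON Schema and wrapped in a tool definition. The sketch below follows the general shape of function-calling tool definitions; the exact field names and any strictness options vary by provider and should be checked against its documentation. The recipe fields and the `make_tool_definition` helper are hypothetical, and no API call is made here.

```python
import json

# JSON Schema for a hypothetical recipe record. "additionalProperties": False
# is the schema-level way to forbid fields the model might otherwise invent.
RECIPE_SCHEMA = {
    "type": "object",
    "properties": {
        "title": {"type": "string"},
        "servings": {"type": "integer"},
        "ingredients": {"type": "array", "items": {"type": "string"}},
        "steps": {"type": "array", "items": {"type": "string"}},
    },
    "required": ["title", "servings", "ingredients", "steps"],
    "additionalProperties": False,
}


def make_tool_definition(name: str, description: str, schema: dict) -> dict:
    """Wrap a JSON Schema in a function-calling style tool definition."""
    return {
        "type": "function",
        "function": {"name": name, "description": description, "parameters": schema},
    }


tool = make_tool_definition(
    "save_recipe", "Store one structured recipe record.", RECIPE_SCHEMA
)
print(json.dumps(tool, indent=2))
```

Even when the interface enforces the schema, keeping a validation step behind it is cheap insurance while those guarantees differ between providers and model versions.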

Conclusion

GPT-4 has changed the way I approach data structuring: it removed the tedious extraction and formatting work and pushed the engineering effort upstream into prompt design and schema definition. That's a genuine shift in where time gets spent. It is not a free lunch, but it is a different kind of work that scales better. The limitations I've discovered (hallucinated fields, context window ceilings, validation costs) are real constraints that any honest assessment has to include. Get the prompt, schema, and validation right and the pipeline is fast, consistent, and worth building.

"AI is not just a tool; it's a partner in innovation, transforming how we interact with data." – Mark Hazleton

For more insights on AI and data structuring, visit Mechanics of Motherhood.

Reflections on AI-Driven Data Structuring

Working with GPT-4 for structured data generation revealed an interesting dynamic: the model's ability to produce well-formed JSON was impressive, but the real productivity gain came from iteration speed once validation was in place. What would have taken hours of manual formatting and checking could be accomplished in minutes, with the model handling the tedious structural work while I focused on data quality and edge cases.

The cost-effectiveness and accuracy improvements are meaningful, but what I find most significant is how this approach scales. As models continue to improve, the same pipeline architecture handles increasingly complex data structuring tasks without fundamental redesign.
