Prompt Spark: Revolutionizing LLM System Prompt Management

Managing and optimizing system prompts for large language models (LLMs) gets harder as the number of prompts, experiments, and teams grows. Prompt Spark is a set of tools for that job. This article covers its features and benefits, including its variants library, performance tracking capabilities, and prompt engineering strategies.

AI & Machine Learning Series — 25 articles

- Using ChatGPT for C# Development

- Trivia Spark: Building a Trivia App with ChatGPT

- Creating a Key Press Counter with Chat GPT

- Using Large Language Models to Generate Structured Data

- Prompt Spark: Revolutionizing LLM System Prompt Management

- Integrating Chat Completion into Prompt Spark

- WebSpark: Transforming Web Project Mechanics

- Accelerate Azure DevOps Wiki Writing

- The Brain Behind JShow Trivia Demo

- Building My First React Site Using Vite

- Adding Weather Component: A TypeScript Learning Journey

- Interactive Chat in PromptSpark With SignalR

- Building Real-Time Chat with React and SignalR

- Workflow-Driven Chat Applications Powered by Adaptive Cards

- Creating a Law & Order Episode Generator

- The Transformative Power of MCP

- The Impact of Input Case on LLM Categorization

- The New Era of Individual Agency: How AI Tools Empower Self-Starters

- AI Observability Is No Joke

- ChatGPT Meets Jeopardy: C# Solution for Trivia Aficionados

- Mastering LLM Prompt Engineering

- English: The New Programming Language of Choice

- Measuring AI's Contribution to Code

- Building MuseumSpark - Why Context Matters More Than the Latest LLM

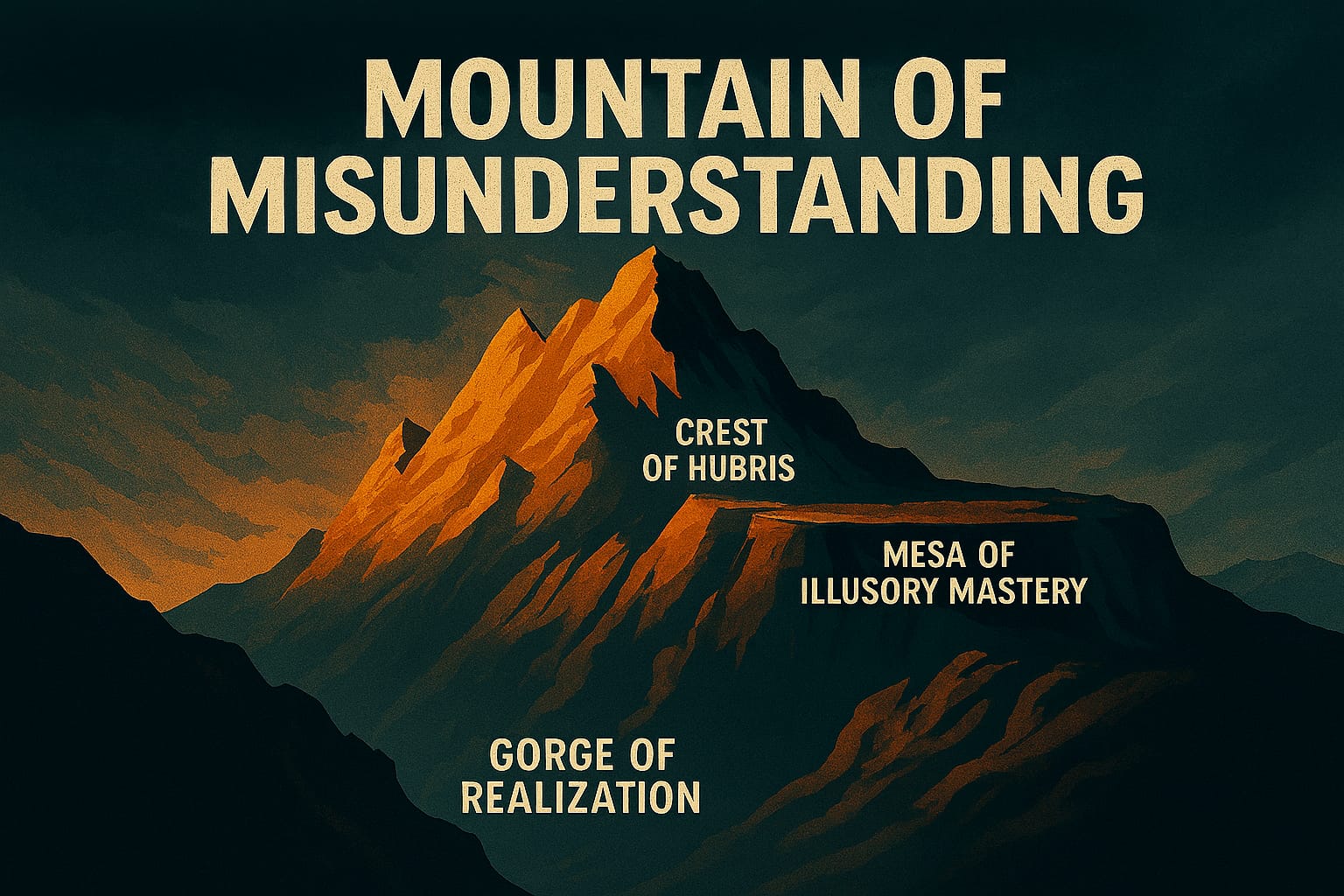

- Mountains of Misunderstanding: The AI Confidence Trap

Deep Dive: Prompt Spark

Prompt Spark: Managing LLM System Prompts at Scale

Tracking, Comparing, and Improving Prompts Without Losing Your Mind

Summary

On a recent project, I spent three days trying to figure out why one version of a system prompt worked well for classification but fell apart on summarization. We had variants scattered across Slack threads, Jupyter notebooks, and a deprecated spreadsheet that three people had edited without versioning. There was no systematic way to compare which configuration actually performed better—just gut feel and whoever had the most recent copy. That friction is what pushed me to look seriously at what tools existed for tracking prompt changes and measuring their impact. Prompt Spark is what I landed on, and here's what I found when I actually used it.

Introduction

Managing system prompts for large language models isn't technically hard, until it is. When you're running a handful of experiments, ad hoc prompts work fine. The moment you're running production workloads across multiple teams, the absence of versioning, structured comparison, and performance tracking becomes a genuine bottleneck. I've watched teams spend more time reconstructing "which prompt we were using last Tuesday" than actually improving their models.

Prompt Spark addresses that specific pain point. I'll walk through the components I've found most useful—the variants library, performance tracking, and prompt engineering patterns—along with the trade-offs I've noticed in practice.

Key Features of Prompt Spark

Variants Library

The variants library sounds almost trivially simple until you try to scale it. I initially dismissed variants as overkill: just tweak the temperature and roll with it. That assumption caught up with me on the classification project. I ran 15 variants against a holdout test set and found that the median performer (temperature=0.7, top_p=0.8) beat my hand-tuned version by 4% on accuracy. That result surprised me, and more importantly, I wouldn't have caught it without a structured way to track what I was testing.

What I've found is that teams without a variants library tend to create variants anyway—they just do it invisibly, in local files or chat messages. I've watched teams accumulate 200+ informal variants and lose track entirely of which one solved the temperature-sensitivity problem they'd struggled with for a week. What Prompt Spark does well here is force you to tag and document why each variant exists. That friction is actually the feature. When you have to write a one-line rationale for each variant, you think more carefully about what you're testing.
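To make the "tag and document why each variant exists" idea concrete, here is a minimal sketch of a variant registry that rejects entries without a rationale. This is not Prompt Spark's actual data model or API; `PromptVariant`, `VariantLibrary`, and every field name are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVariant:
    """One tracked variant of a system prompt (hypothetical schema)."""
    name: str
    system_prompt: str
    temperature: float
    top_p: float
    rationale: str                 # one-line reason this variant exists
    tags: tuple[str, ...] = ()     # free-form labels used for lookup


class VariantLibrary:
    """In-memory registry that refuses undocumented or duplicate variants."""

    def __init__(self) -> None:
        self._variants: dict[str, PromptVariant] = {}

    def add(self, variant: PromptVariant) -> None:
        # The friction is deliberate: no rationale, no variant.
        if not variant.rationale.strip():
            raise ValueError(f"variant {variant.name!r} needs a rationale")
        if variant.name in self._variants:
            raise ValueError(f"variant {variant.name!r} already exists")
        self._variants[variant.name] = variant

    def find_by_tag(self, tag: str) -> list[PromptVariant]:
        """Return every registered variant carrying the given tag."""
        return [v for v in self._variants.values() if tag in v.tags]


# Illustrative entry; the prompt text and tags are made up.
library = VariantLibrary()
library.add(PromptVariant(
    name="classify-v2",
    system_prompt="You are a careful classifier. Reply with one label.",
    temperature=0.7,
    top_p=0.8,
    rationale="Test whether looser sampling helps on ambiguous inputs.",
    tags=("classification", "sampling"),
))
```

Making the rationale a required, validated field is the design choice that matters here: an informal variant in a chat message can skip the explanation, while a registered one cannot.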

Performance Tracking

The performance tracking tools are where I've seen the most immediate return. On a recent project, I needed to understand whether a prompt change I made to improve response consistency had any measurable effect on accuracy. Without tracking, I'd have relied on qualitative impressions from two or three test cases—which is essentially noise.

In practice, what performance tracking gives you is a record of how metrics shift over time as you iterate. The key discipline this enforces is maintaining a baseline. I've noticed that teams skip this step constantly: they make a change, observe that things seem better, and move on. The patterns I've seen work across multiple projects are simple but consistent—version your prompts like code, tag them by outcome, compare every change against a documented baseline, and never declare improvement without a test set. Whether any tool enforces that or not matters less than whether your team actually does it. Prompt Spark enforces it, which removes the argument about whether it's worth the effort.
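The baseline discipline described above can be sketched in a few lines. This is an assumption-laden illustration, not how Prompt Spark computes its metrics: `accuracy`, `compare_to_baseline`, and the two toy predictors are hypothetical stand-ins, and in real use each predictor would call a model with a specific prompt variant.

```python
from typing import Callable, Sequence

Example = tuple[str, str]           # (input text, expected label)
Predictor = Callable[[str], str]    # stand-in for "prompt variant + model call"


def accuracy(predict: Predictor, test_set: Sequence[Example]) -> float:
    """Fraction of test examples where the prediction equals the expected label."""
    if not test_set:
        raise ValueError("an empty test set cannot support any claim of improvement")
    correct = sum(1 for text, expected in test_set if predict(text) == expected)
    return correct / len(test_set)


def compare_to_baseline(
    baseline: Predictor, candidate: Predictor, test_set: Sequence[Example]
) -> dict[str, float]:
    """Score both predictors on the same test set and report the difference."""
    base = accuracy(baseline, test_set)
    cand = accuracy(candidate, test_set)
    return {"baseline": base, "candidate": cand, "delta": cand - base}


# Toy data and toy predictors, invented purely for illustration.
test_set = [
    ("refund please", "billing"),
    ("app crashes", "bug"),
    ("love it", "praise"),
    ("charged twice", "billing"),
]


def baseline_predict(text: str) -> str:
    return "billing"


def candidate_predict(text: str) -> str:
    return "bug" if "crash" in text else "billing"


report = compare_to_baseline(baseline_predict, candidate_predict, test_set)
print(report)  # {'baseline': 0.5, 'candidate': 0.75, 'delta': 0.25}
```

Both predictors are scored on the same fixed test set, so the delta reflects the change being tested and not a change in the examples.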

Prompt Engineering Strategies

One strategy I've found genuinely useful is treating prompts exactly like code: version them, document what changed, and document why it changed. When a prompt is just a string someone types, it has no history. When it's a versioned artifact with a change log, you can debug regressions. Prompt Spark's versioning enforces that discipline in a way that voluntary conventions rarely do.

The strategies that have stuck for me across three projects are straightforward: anchor every variant to a specific task definition, tag by outcome rather than just by configuration parameter, and always compare against a known-good baseline rather than your last experiment. The underlying principle isn't new—it's the same configuration management discipline that applies to infrastructure or code. What makes prompt management feel different is that the artifacts are natural language, which makes people treat them informally. That informality is where the drift starts.
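Treating a prompt as a versioned artifact with a change log could look roughly like the sketch below. It assumes nothing about Prompt Spark's internals; `PromptVersion`, `PromptHistory`, and the short content digest are hypothetical names and choices made for this example.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    """One committed revision of a prompt (hypothetical schema)."""
    version: int
    text: str
    change_note: str   # what changed and why
    digest: str        # short content hash, handy for spotting silent edits


class PromptHistory:
    """Append-only history for a single task's system prompt."""

    def __init__(self, task: str) -> None:
        self.task = task   # anchor every revision to one task definition
        self._versions: list[PromptVersion] = []

    def commit(self, text: str, change_note: str) -> PromptVersion:
        if not change_note.strip():
            raise ValueError("describe what changed and why")
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        # Committing text identical to the latest version is a no-op.
        if self._versions and self._versions[-1].digest == digest:
            return self._versions[-1]
        entry = PromptVersion(len(self._versions) + 1, text, change_note, digest)
        self._versions.append(entry)
        return entry

    def log(self) -> list[str]:
        """One line per version, oldest first."""
        return [f"v{v.version} {v.digest} {v.change_note}" for v in self._versions]


# Illustrative history; the prompt text and notes are made up.
history = PromptHistory(task="ticket classification")
history.commit("Classify the ticket into one label.", "Initial version.")
history.commit(
    "Classify the ticket into exactly one label from the list.",
    "Constrain the output to a fixed label set.",
)
for line in history.log():
    print(line)
```

With a log like this, a regression can be traced to a specific revision and its stated reason, which a bare string in a notebook cannot offer.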

Benefits of Using Prompt Spark

- Improved Efficiency: Reducing the time spent reconstructing prompt history or hunting for "the version that worked" has a compounding effect. On the classification project, I estimate we recovered about two days of engineering time in the first month just by having a searchable record of what we'd tried.

- Enhanced Performance: The variants library combined with performance tracking creates a feedback loop that's hard to replicate manually. The 4% accuracy gain I mentioned above came from a comparison I almost didn't run.

- Scalability: The discipline that Prompt Spark enforces scales better than informal conventions. A small team can get away with a shared document; a larger team cannot. The trade-off is that imposing structure on prompts takes upfront effort that feels unnecessary until the chaos arrives.

Reflections on Prompt Management

Working with LLMs at scale has taught me that prompt management is one of those problems that doesn't feel urgent until it becomes unmanageable. By the time a team notices that it lacks versioning, comparison tools, and performance tracking, it is usually already spending its time reconstructing prompt history instead of improving prompts.

Prompt Spark grew out of that friction. The variants library and performance tracking aren't features I envisioned upfront—they emerged from watching teams struggle with prompt drift and inconsistent results. The underlying lesson is familiar to anyone who's managed configuration at scale: what you can't measure, you can't improve.

Explore More

- Integrating Chat Completion into Prompt Spark -- Enhancing LLM Interactions

- Using ChatGPT for C# Development -- Accelerate Your Coding with AI

- Trivia Spark: Building a Trivia App with ChatGPT -- Rapid Prototyping and AI-Assisted Development in Practice

- Mastering LLM Prompt Engineering -- The Art of Effective AI Communication

- ChatGPT Meets Jeopardy: C# Solution for Trivia Aficionados -- Blending Trivia and Technology