Integrating Chat Completion into Prompt Spark

Integrating chat completion into the Prompt Spark project adds multi-turn chat to Core Spark Variants, so conversations with large language models keep their context from one turn to the next.

AI & Machine Learning Series — 25 articles

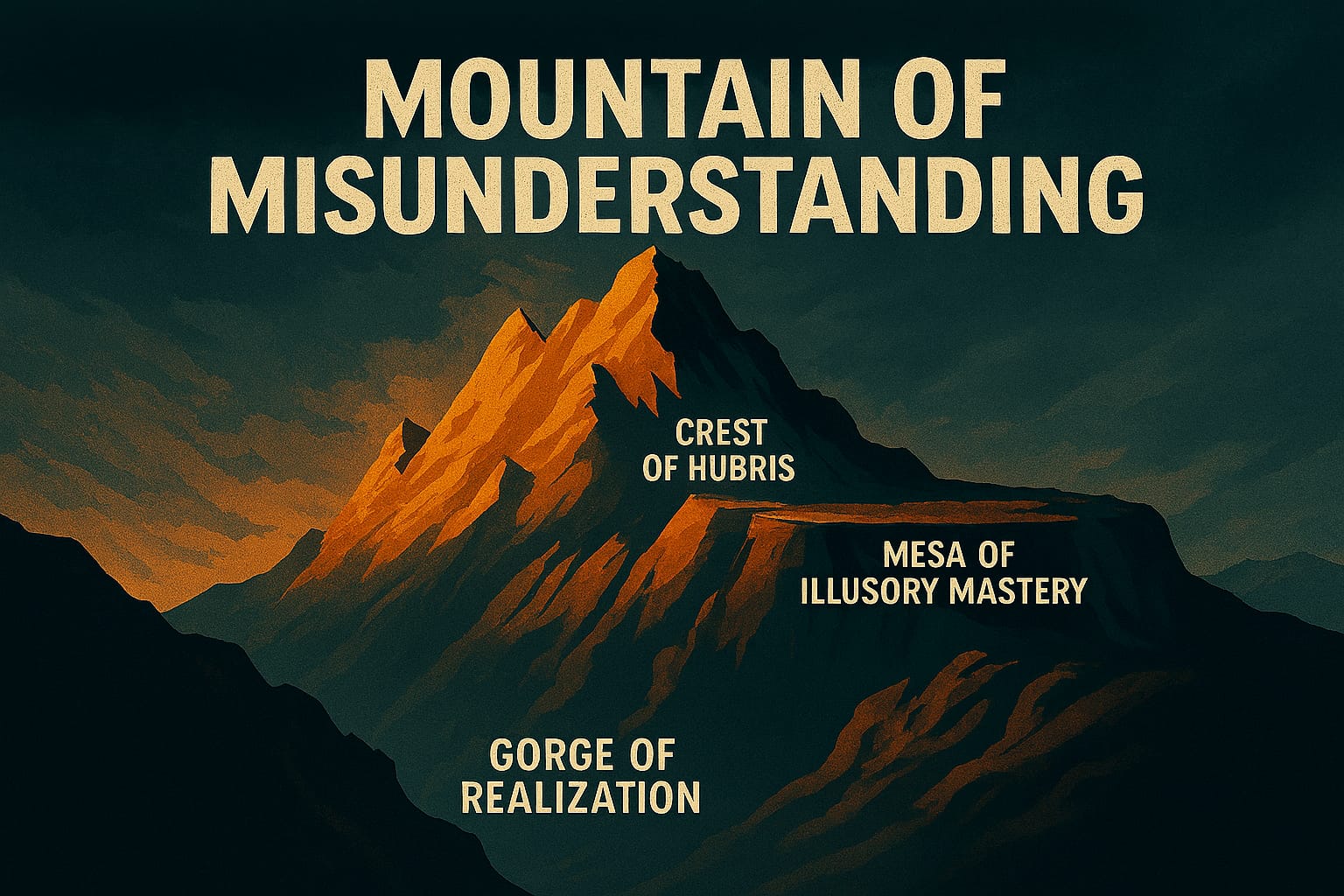

Enhancing LLM Interactions

On a recent project, I watched users abandon our chat interface because context dropped after three turns. They'd ask a follow-up question, the model would respond as if the conversation had just started, and the session was effectively over. The gap wasn't in the model — it was in how we were wiring conversations through Prompt Spark. Threading multi-turn exchanges properly meant integrating chat completion at the Core Spark Variants level, and the path to get there was less obvious than the documentation suggested.

What is Chat Completion?

In practice, chat completion is less about "predicting the next message" and more about maintaining a structured conversation history that the model can reason over. Each turn appends to a running message history (system prompt, prior user messages, prior assistant responses), and that history is sent along with each request so the model generates its next reply with full awareness of what came before. That distinction matters enormously when you're debugging why a model gives coherent answers in isolation but goes sideways in a live session.
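To make the idea of a structured history concrete, here is a minimal sketch in Python. It is an illustration, not Prompt Spark's actual code: the `Conversation` class and its method names are hypothetical, and the `role`/`content` message shape is assumed from the convention most chat completion APIs use.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical holder for the history that accompanies every request."""
    system_prompt: str
    messages: list = field(default_factory=list)

    def add_user(self, text: str) -> None:
        self.messages.append({"role": "user", "content": text})

    def add_assistant(self, text: str) -> None:
        self.messages.append({"role": "assistant", "content": text})

    def to_request(self) -> list:
        # System prompt first, then every prior turn in the order it happened.
        return [{"role": "system", "content": self.system_prompt}, *self.messages]


convo = Conversation(system_prompt="You are a helpful trivia host.")
convo.add_user("Ask me a question about rivers.")
convo.add_assistant("Which river is the longest in Africa?")
convo.add_user("Give me a hint.")

# The follow-up only makes sense because the earlier turns travel with it.
payload = convo.to_request()
```

If a request were built from the last user message alone, "Give me a hint." would arrive with nothing to hint about, which is the failure described at the top of this article.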

From Benefits to Real Trade-offs

Context retention was our first win: users stayed in conversations significantly longer without losing the thread. In one session analysis, drop-off from context loss fell sharply once multi-turn history was properly threaded. But it came with a cost I hadn't fully anticipated: longer conversation history means higher token spend per request, and latency creeps up as the context window fills. On a project with a fixed budget per session, I had to make a deliberate call about how many prior turns to carry forward. Truncating at eight turns kept token counts predictable, and users didn't notice the cutoff.
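A turn cap like that can be sketched in a few lines of Python. This is an assumption-laden illustration rather than the code used in Prompt Spark: `truncate_history` is a hypothetical helper, and it assumes one turn means one user message plus one assistant reply.

```python
def truncate_history(messages: list, max_turns: int = 8) -> list:
    """Keep the system prompt plus the most recent `max_turns` exchanges."""
    system = [m for m in messages if m["role"] == "system"]
    dialogue = [m for m in messages if m["role"] != "system"]

    # One turn is assumed to be a user message plus an assistant reply.
    # Guard against max_turns == 0, because dialogue[-0:] would keep everything.
    recent = dialogue[-2 * max_turns:] if max_turns > 0 else []

    # If the cut landed mid-turn, drop the orphaned assistant reply so the
    # retained window always opens with a user message.
    if recent and recent[0]["role"] == "assistant":
        recent = recent[1:]
    return system + recent


history = [{"role": "system", "content": "You are a helpful assistant."}]
for i in range(1, 13):
    history.append({"role": "user", "content": f"question {i}"})
    history.append({"role": "assistant", "content": f"answer {i}"})

trimmed = truncate_history(history, max_turns=8)
```

With twelve turns of history and a cap of eight, the system prompt survives and the window starts at `question 5`. Counting turns rather than tokens is the simpler of the two options; it keeps cost roughly bounded only if individual messages stay a similar size.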

The efficiency gain — fewer repetitive inputs from users — was real, but I'd frame it more carefully than a bullet point allows. Users stopped re-explaining their context because the model already had it. That's not a feature; it's the baseline expectation users bring to any conversational interface, and failing to meet it was what sent them away in the first place.

Implementing Chat Completion

I typically start by auditing what's already in the library stack before touching model configuration. The sequence that worked for me:

- Update Core Libraries: Verify that all libraries supporting chat functionality are current. Mismatched versions between the chat interface and the semantic kernel layer caused silent failures in early testing that were hard to trace.

- Configure Chat Models: I've found it's worth selecting the LLM configuration explicitly rather than inheriting defaults. The model that performs well on single-turn completion isn't always the right choice when you're carrying a long conversation history.

- Test Interactions: Test with realistic multi-turn sequences, not just isolated prompts. One team I worked with validated every prompt in isolation and missed a context-poisoning bug that only appeared at turn five or six.
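The multi-turn testing step can be sketched as a small driver that threads history through every call. Everything here is hypothetical: `run_multi_turn` is an illustrative helper, and `fake_chat` is a stand-in for a real model call so the example runs without any API.

```python
def run_multi_turn(chat, system_prompt: str, user_turns: list) -> list:
    """Feed user turns in order, passing the full history into every call."""
    history = [{"role": "system", "content": system_prompt}]
    replies = []
    for text in user_turns:
        history.append({"role": "user", "content": text})
        reply = chat(history)
        history.append({"role": "assistant", "content": reply})
        replies.append(reply)
    return replies


def fake_chat(history: list) -> str:
    """Stand-in model: recalls the user's name only if it is still in history."""
    last = history[-1]["content"]
    if "what is my name" in last.lower():
        for m in history:
            if m["role"] == "user" and m["content"].startswith("My name is "):
                return m["content"].removeprefix("My name is ").rstrip(".")
        return "I don't know."
    return "Noted."


turns = [
    "My name is Ada.",
    "I like rivers.",
    "Tell me a fact.",
    "Another one.",
    "Thanks.",
    "What is my name?",
]
replies = run_multi_turn(fake_chat, "You are a helpful assistant.", turns)
```

The check that matters is on the sixth reply, not the first. A test that sends "What is my name?" in isolation would pass or fail for reasons unrelated to how history is threaded.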

Challenges and Considerations

Data privacy deserves more than a checklist entry. In practice, the challenge isn't just compliance — it's deciding what conversation history you're actually storing, where, and for how long. I've seen teams treat the conversation buffer as ephemeral and then discover it was being logged for debugging purposes. Audit the full data path before you go to production.
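One way to make those storage decisions explicit is to write them down as code. The sketch below is a hypothetical example, not a description of how Prompt Spark stores data: the in-memory `store` dictionary, the 30-day window, and both function names are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone


def purge_expired(store: dict, retention: timedelta, now: datetime) -> list:
    """Delete conversations whose last activity is older than the retention window."""
    expired = [cid for cid, convo in store.items()
               if now - convo["last_active"] > retention]
    for cid in expired:
        del store[cid]
    return expired


def log_safe_view(convo: dict) -> dict:
    """What a debug log is allowed to record: metadata only, no message text."""
    return {
        "message_count": len(convo["messages"]),
        "last_active": convo["last_active"].isoformat(),
    }


now = datetime(2024, 1, 31, tzinfo=timezone.utc)
store = {
    "old": {"last_active": now - timedelta(days=45),
            "messages": [{"role": "user", "content": "hello"}]},
    "recent": {"last_active": now - timedelta(days=2),
               "messages": [{"role": "user", "content": "hi again"}]},
}

expired = purge_expired(store, retention=timedelta(days=30), now=now)
debug_entry = log_safe_view(store["recent"])
```

Routing debug logging through something like `log_safe_view` means the "ephemeral" buffer cannot quietly end up in a log file, because the message content is never handed to the logger.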

On model training: I've found that retraining without a clear quality metric leads to regressions that are difficult to catch until users complain. Before we updated any model weights in Prompt Spark, we established a set of baseline conversation scenarios with expected outputs and ran them after every training cycle. The upfront investment in that harness paid off within the first update cycle.
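A baseline harness of that kind might look like the following sketch. The `Scenario` type, `run_baseline`, and the substring check are all assumptions made for illustration; a real harness would likely need a richer comparison than "the final reply contains this text". `stub_chat` is a deliberately limited stand-in so one scenario passes and one fails.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    user_turns: tuple
    must_contain: str  # substring expected in the final reply (simplification)


def run_baseline(chat, scenarios: list) -> dict:
    """Replay each scenario turn by turn and record whether the last reply passes."""
    results = {}
    for scenario in scenarios:
        history = [{"role": "system", "content": "You are a helpful assistant."}]
        reply = ""
        for text in scenario.user_turns:
            history.append({"role": "user", "content": text})
            reply = chat(history)
            history.append({"role": "assistant", "content": reply})
        results[scenario.name] = scenario.must_contain.lower() in reply.lower()
    return results


def stub_chat(history: list) -> str:
    """Stand-in model that only counts user messages; it cannot recall topics."""
    count = sum(1 for m in history if m["role"] == "user")
    return f"You have sent {count} messages."


scenarios = [
    Scenario("counts_turns", ("a", "b", "c"), "3 messages"),
    Scenario("remembers_topic",
             ("Let's talk about rivers.", "What topic did I pick?"), "rivers"),
]

results = run_baseline(stub_chat, scenarios)
failures = [name for name, passed in results.items() if not passed]
```

Running the same scenario list after every update turns "users complained" into a named failing case, here `remembers_topic`, before anything ships.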

Future Prospects

The chat completion integration opens the door to more sophisticated conversational capabilities — emotion detection, personalized response tuning, adaptive context pruning. What I've learned is that each of those layers adds its own latency and configuration surface, so the order you introduce them matters. Get the multi-turn threading stable first. Everything else is easier to reason about once the context plumbing is solid.

Conclusion

Wiring chat completion into Prompt Spark changed how users experienced the tool — not because it added a flashy feature, but because it fixed a fundamental failure mode. Context loss was making conversations feel broken. Addressing it required real decisions about token budgets, turn truncation, and data handling, not just enabling a setting. The integration is a foundation, and what gets built on top of it will depend on how carefully that foundation is laid.
