The Transformative Power of MCP

The Model Context Protocol (MCP) is an open standard for supplying AI systems with the context they operate in, so that their behavior can adapt to the situation at hand. That adaptability matters most where AI takes over repetitive tasks and where its interactions feed business intelligence processes.

AI & Machine Learning Series — 25 articles

- Using ChatGPT for C# Development

- Trivia Spark: Building a Trivia App with ChatGPT

- Creating a Key Press Counter with Chat GPT

- Using Large Language Models to Generate Structured Data

- Prompt Spark: Revolutionizing LLM System Prompt Management

- Integrating Chat Completion into Prompt Spark

- WebSpark: Transforming Web Project Mechanics

- Accelerate Azure DevOps Wiki Writing

- The Brain Behind JShow Trivia Demo

- Building My First React Site Using Vite

- Adding Weather Component: A TypeScript Learning Journey

- Interactive Chat in PromptSpark With SignalR

- Building Real-Time Chat with React and SignalR

- Workflow-Driven Chat Applications Powered by Adaptive Cards

- Creating a Law & Order Episode Generator

- The Transformative Power of MCP

- The Impact of Input Case on LLM Categorization

- The New Era of Individual Agency: How AI Tools Empower Self-Starters

- AI Observability Is No Joke

- ChatGPT Meets Jeopardy: C# Solution for Trivia Aficionados

- Mastering LLM Prompt Engineering

- English: The New Programming Language of Choice

- Measuring AI's Contribution to Code

- Building MuseumSpark - Why Context Matters More Than the Latest LLM

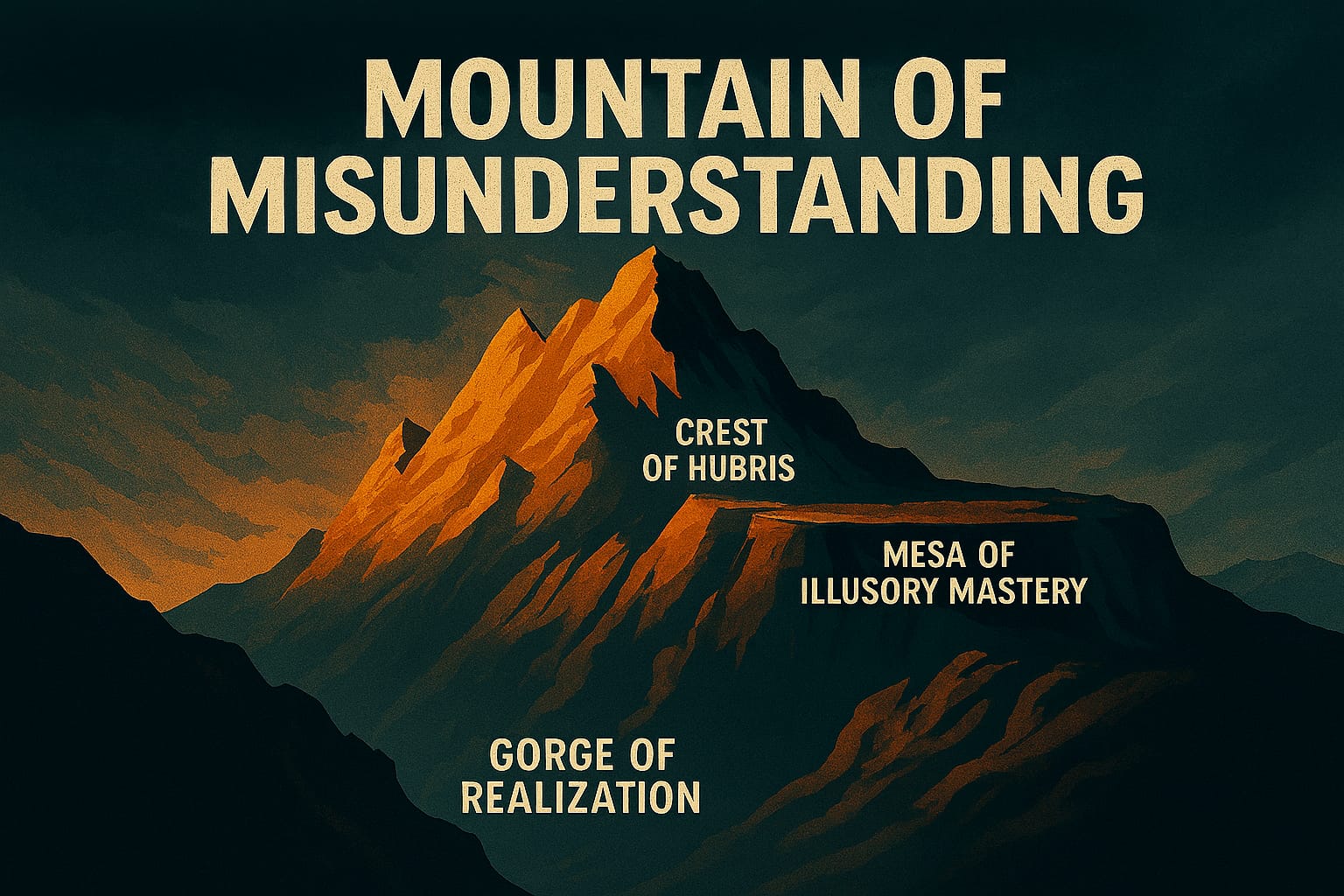

- Mountains of Misunderstanding: The AI Confidence Trap

Deep Dive: MCP Transforming AI

How MCP Surfaces Context in AI Systems

How MCP Enhances AI Adaptability

On a recent project, I watched our customer service chatbot give essentially the same answer regardless of whether the user was angry, delighted, or confused. The AI had no way to sense context — it was operating blind. Every interaction looked identical from the model's perspective, and the responses reflected that. That gap is what pushed me toward exploring the Model Context Protocol.

What is MCP?

MCP, the Model Context Protocol, is an open standard introduced by Anthropic in November 2024 that defines how AI applications obtain context: MCP servers expose tools, resources, and prompts over JSON-RPC 2.0, and a client application makes them available to the model. The practical effect is that a model can adjust its behavior based on the context it is operating in. What I've found is that the protocol matters less as an abstract specification and more as a forcing function: it requires you to actually define the contexts your AI operates in, which most teams haven't done rigorously. That exercise alone surfaces assumptions you didn't know you were making.
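To make the wire format concrete: in the published MCP specification, a client and server exchange JSON-RPC 2.0 messages, and invoking a server-side tool uses the `tools/call` method with a tool `name` and an `arguments` object. The sketch below only builds such a request with the standard library. The tool name `get_session_context` and its `session_id` argument are hypothetical, and a real client would also perform the `initialize` handshake and send the message over a transport (stdio or HTTP) rather than printing it.

```python
import itertools
import json

# JSON-RPC request ids must be unique per connection; a simple counter suffices here.
_request_ids = itertools.count(1)


def make_tools_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request for MCP's tools/call method."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool that returns what the server knows about a chat session.
message = make_tools_call("get_session_context", {"session_id": "abc123"})
print(message)
```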

Benefits of MCP

In my experience, the practical gains from wiring up MCP fall into three categories:

- Dynamic Adaptation: I've seen MCP enable AI systems to shift behavior mid-conversation without retraining, handling an unexpected escalation differently from a routine inquiry because the system detects that the context has changed.

- Enhanced Efficiency: In our implementation, the reduction in manual triage was real. When the model knows it's operating in a complaint-handling context versus a general FAQ context, it stops routing questions to the wrong handler.

- Improved Business Intelligence: The context data MCP accumulates also feeds analysis. What I've noticed is that patterns in context-switching reveal friction points in the customer journey that wouldn't show up in standard usage logs.
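The business-intelligence point can be sketched as a small aggregation, assuming each session has been logged as the ordered list of contexts it passed through (the context labels and log shape here are hypothetical). Counting transitions between consecutive contexts shows which switches happen most often, which is where friction tends to surface.

```python
from collections import Counter


def count_context_switches(sessions: dict[str, list[str]]) -> Counter:
    """Count (from_context, to_context) transitions across all sessions.

    Consecutive turns in the same context are not counted as switches.
    """
    switches: Counter = Counter()
    for contexts in sessions.values():
        for previous, current in zip(contexts, contexts[1:]):
            if previous != current:
                switches[(previous, current)] += 1
    return switches


# Hypothetical per-session context sequences pulled from logs.
sessions = {
    "s1": ["faq", "faq", "complaint"],
    "s2": ["faq", "complaint", "escalation"],
    "s3": ["order_status", "order_status"],
}

for (src, dst), n in count_context_switches(sessions).most_common():
    print(f"{src} -> {dst}: {n}")
```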

MCP in Action

The clearest example I can point to is a customer service chatbot that adjusts its responses based on the user's tone and previous interactions. Before MCP, the model treated every session as stateless. After implementing context protocols, the chatbot could recognize that a user who'd already been transferred twice was in a different situation than a first-time caller — and respond accordingly. Resolution quality improved because the model finally had the context it needed.
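The transfer-count example can be expressed as a small classification step that runs before the model is called. This is a sketch, not our production logic: the `SessionState` fields, the context names, and the threshold of two transfers are hypothetical stand-ins for whatever signals your session store actually records.

```python
from dataclasses import dataclass


@dataclass
class SessionState:
    transfers: int        # times this user has been handed off on the current issue
    prior_sessions: int   # earlier conversations on record for this user
    sentiment: str        # e.g. "negative", "neutral", "positive" from an upstream classifier


def pick_context(state: SessionState) -> str:
    """Map session state to a named context; checks run from most to least urgent."""
    if state.transfers >= 2:
        return "escalation"
    if state.sentiment == "negative":
        return "complaint"
    if state.prior_sessions == 0:
        return "first_contact"
    return "routine"


print(pick_context(SessionState(transfers=2, prior_sessions=3, sentiment="neutral")))
```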

Implementing MCP

In our implementation, I started with the step that sounds obvious but proved genuinely hard: mapping every context the chatbot actually ran in, not the ones we thought it ran in. That gap was revealing. We'd assumed three contexts; we found seven. That inventory became the foundation for everything else.
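The inventory step amounts to counting manually applied tags and comparing them with the contexts you assumed. A minimal sketch, assuming the tagged logs have been exported as (conversation id, tag) pairs; the assumed-context set and the tag names are hypothetical.

```python
from collections import Counter

# Contexts the team believed the chatbot handled (hypothetical).
ASSUMED_CONTEXTS = {"faq", "complaint", "order_status"}


def context_inventory(tagged_logs: list[tuple[str, str]]) -> tuple[Counter, list[str]]:
    """Return tag frequencies and the tags that were not in the assumed set."""
    counts = Counter(tag for _conversation_id, tag in tagged_logs)
    unexpected = sorted(set(counts) - ASSUMED_CONTEXTS)
    return counts, unexpected


# A week of hand-tagged conversations would go here; three rows shown.
tagged = [("c1", "faq"), ("c2", "billing_dispute"), ("c3", "faq")]
counts, unexpected = context_inventory(tagged)
print(counts.most_common())
print("Not in our assumptions:", unexpected)
```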

From there, the process looked like this:

- Identify Contexts: Map the real operating contexts — not the idealized ones. I found it useful to pull a week of conversation logs and tag them manually before writing a single line of protocol.

- Develop Protocols: Create specific behavioral guidelines for each context. The discipline here is precision: vague protocols produce vague behavior.

- Integrate with Existing Systems: This is where the engineering friction lives. Fitting MCP into existing pipelines required touching more system boundaries than I expected — authentication, session state, and logging all needed adjustment.
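The "Develop Protocols" step is easiest to keep precise when each context maps to an explicit record rather than guidance scattered across prompts. In this sketch the `ContextProtocol` fields, the instruction text, and the handler queue names are hypothetical; an unknown context fails loudly instead of falling back to a generic answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextProtocol:
    instructions: str  # behavioral guidance injected into the model's prompt
    handler: str       # queue or team that owns conversations in this context


PROTOCOLS: dict[str, ContextProtocol] = {
    "faq": ContextProtocol("Answer briefly from the knowledge base.", "self_service"),
    "complaint": ContextProtocol("Acknowledge the problem, then collect order details.", "support_tier1"),
    "escalation": ContextProtocol("Summarize prior transfers and hand off to a person.", "support_tier2"),
}


def protocol_for(context: str) -> ContextProtocol:
    """Look up the protocol for a context; undefined contexts are an error, not a default."""
    try:
        return PROTOCOLS[context]
    except KeyError:
        raise ValueError(f"no protocol defined for context {context!r}") from None


print(protocol_for("complaint").handler)
```

Failing on an unmapped context is a deliberate choice here: it turns a gap in the inventory into a visible error during testing rather than a vague answer in production.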

Challenges and Considerations

The challenges section of most MCP articles lists "complexity" and "data privacy" as bullet points and moves on. In practice, those abstractions hide real decisions with real costs.

The latency question, for example, is something I had to resolve concretely. Adding context protocols increased our chatbot's response time by roughly 200ms per message. That doesn't sound like much until users start noticing the hesitation. I had to decide whether the accuracy gain justified the UX cost. For our customer service tool, the answer was yes — context-aware responses drove resolution rates up enough that the tradeoff was obvious. For our internal search bot, where speed mattered more than nuance, it wasn't worth it. That decision forced us to maintain two different MCP configurations, which added ongoing maintenance overhead I hadn't budgeted for.
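The two-configuration outcome can be captured as data, which at least keeps the tradeoff explicit. A minimal sketch: the roughly 200 ms overhead comes from the measurement described above, while the bot names, latency budgets, and base latencies are hypothetical.

```python
from dataclasses import dataclass, replace

CONTEXT_OVERHEAD_MS = 200  # approximate per-message cost measured for context protocols


@dataclass(frozen=True)
class BotConfig:
    name: str
    context_enabled: bool
    latency_budget_ms: int  # hypothetical per-message ceiling before users notice


CUSTOMER_SERVICE = BotConfig("customer_service", context_enabled=True, latency_budget_ms=1500)
INTERNAL_SEARCH = BotConfig("internal_search", context_enabled=False, latency_budget_ms=400)


def within_budget(config: BotConfig, base_latency_ms: int) -> bool:
    """True if the expected per-message latency stays inside the bot's budget."""
    overhead = CONTEXT_OVERHEAD_MS if config.context_enabled else 0
    return base_latency_ms + overhead <= config.latency_budget_ms


print(within_budget(CUSTOMER_SERVICE, base_latency_ms=900))
# Turning context on for search would push a 300 ms response past its budget.
print(within_budget(replace(INTERNAL_SEARCH, context_enabled=True), base_latency_ms=300))
```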

Data privacy is similarly concrete. Context protocols persist user state across a session, sometimes across sessions. That means you're storing behavioral signals that may qualify as personal data under GDPR or CCPA depending on your jurisdiction. On our project, we had to scope what context we retained, add explicit retention limits, and document the data flows for compliance review. None of that was optional.
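Scoping and retention limits can be enforced in code before context is written back to storage. This sketch assumes a field allowlist and a 30-day window, both hypothetical; the real values are whatever your compliance review settles on.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

RETAINED_FIELDS = {"context", "transfers"}  # hypothetical allowlist of signals we keep
RETENTION = timedelta(days=30)              # hypothetical retention limit


@dataclass(frozen=True)
class ContextRecord:
    session_id: str
    created_at: datetime
    signals: dict


def apply_retention(records: list[ContextRecord], now: datetime) -> list[ContextRecord]:
    """Drop expired records and strip any signal not on the allowlist."""
    kept = []
    for record in records:
        if now - record.created_at > RETENTION:
            continue  # past the retention window: do not keep at all
        scoped = {k: v for k, v in record.signals.items() if k in RETAINED_FIELDS}
        kept.append(ContextRecord(record.session_id, record.created_at, scoped))
    return kept


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    ContextRecord("old", now - timedelta(days=45), {"context": "faq"}),
    ContextRecord("new", now - timedelta(days=2), {"context": "complaint", "raw_transcript": "..."}),
]
print(apply_retention(records, now))
```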

Conclusion

Once we had MCP wired up properly, our chatbot's resolution rate climbed from 72% to 84%. The shift didn't happen because MCP is magic — it happened because context matters, and we'd been ignoring it. What I've learned is that the protocol itself is less important than the discipline it imposes: you cannot implement MCP without forcing your team to define, precisely, what contexts your AI actually operates in. That clarity pays off well beyond the protocol itself. The way we think about context in AI models has shifted, and I don't think we'd go back to stateless interactions for anything customer-facing.
