English: The New Programming Language of Choice

English has always shaped how we write software, but with LLMs it now directly shapes what software gets produced. This article explores why prompt and context engineering are practical language skills, not just AI buzzwords.

AI & Machine Learning Series — 25 articles

- Using ChatGPT for C# Development

- Trivia Spark: Building a Trivia App with ChatGPT

- Creating a Key Press Counter with Chat GPT

- Using Large Language Models to Generate Structured Data

- Prompt Spark: Revolutionizing LLM System Prompt Management

- Integrating Chat Completion into Prompt Spark

- WebSpark: Transforming Web Project Mechanics

- Accelerate Azure DevOps Wiki Writing

- The Brain Behind JShow Trivia Demo

- Building My First React Site Using Vite

- Adding Weather Component: A TypeScript Learning Journey

- Interactive Chat in PromptSpark With SignalR

- Building Real-Time Chat with React and SignalR

- Workflow-Driven Chat Applications Powered by Adaptive Cards

- Creating a Law & Order Episode Generator

- The Transformative Power of MCP

- The Impact of Input Case on LLM Categorization

- The New Era of Individual Agency: How AI Tools Empower Self-Starters

- AI Observability Is No Joke

- ChatGPT Meets Jeopardy: C# Solution for Trivia Aficionados

- Mastering LLM Prompt Engineering

- English: The New Programming Language of Choice

- Measuring AI's Contribution to Code

- Building MuseumSpark - Why Context Matters More Than the Latest LLM

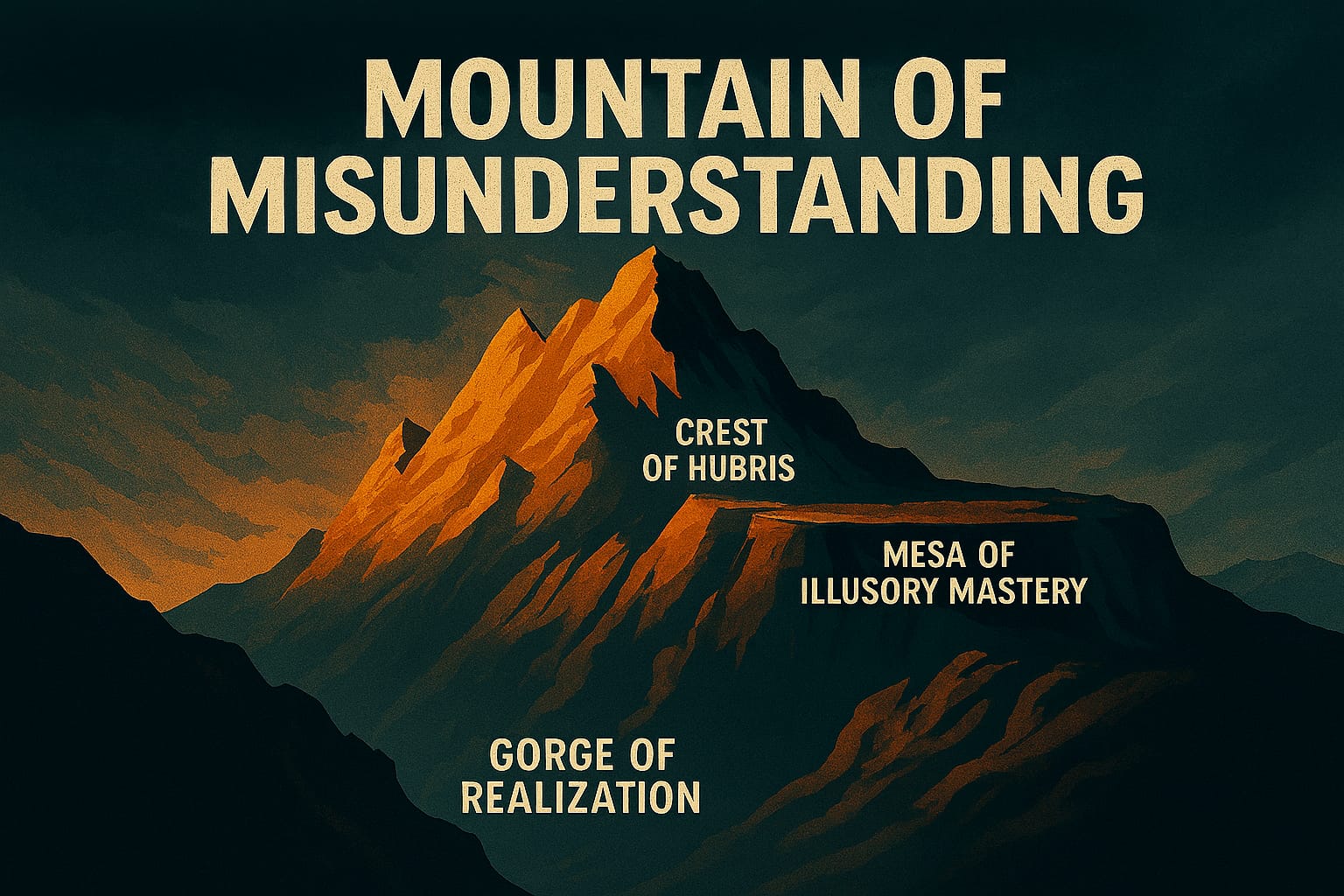

- Mountains of Misunderstanding: The AI Confidence Trap

The Real Shift Is Happening in the Prompt Window

You asked a fair question: why was there so much SQL in an article that should have been about prompt engineering and LLMs?

The short answer is that SQL was meant to be context, not the destination. I was trying to show that English has influenced programming for decades. But today, that influence has moved from naming conventions and query syntax into something much more direct: English now tells models what to build.

That changes the job in a practical way. A few years ago, if your intent was fuzzy, you mostly hurt code readability. Now, fuzzy intent can generate the wrong architecture, the wrong tests, or confidently wrong explanations in seconds. Language quality has become execution quality.

Why SQL Still Matters Here

SQL is still a useful bridge because it illustrates an old pattern we already understand: English-like syntax feels intuitive, but hidden mechanics matter.

```sql
SELECT CustomerName, OrderTotal
FROM Orders
WHERE OrderDate > '2023-01-01'
ORDER BY OrderTotal DESC;
```

This reads like plain business language. But anyone who has tuned production queries knows readability is not the same thing as correctness, and it is definitely not the same thing as performance.

That same pattern shows up with LLMs. A prompt can read beautifully and still produce weak outcomes if it is missing constraints, context, or success criteria. SQL was never the core argument. It was the historical example that makes the LLM point easier to see.

Prompt Engineering Is Structured Intent, Not Fancy Wording

Prompt engineering gets dismissed as "just writing good English." In practice, I have found it is closer to requirements engineering compressed into a few paragraphs.

A weak prompt tends to look like this:

```text
Build me an API for orders.
```

A stronger prompt carries intent, constraints, and boundaries:

```text
Build an ASP.NET Core minimal API for order management.

Requirements:
- Endpoints: GET /orders/{id}, POST /orders
- Validation: reject negative totals; currency must be an ISO 4217 code
- Persistence: EF Core with SQL Server
- Non-functional: idempotent POST using a client-provided idempotency key
- Output: production-ready code plus a focused test plan

Do not add authentication in this version.
```

Same language. Very different outcome.

What changed was not grammar polish. What changed was engineering clarity: scope, constraints, and explicit exclusions. The model can only optimize for what you specify.

Context Engineering Is the Bigger Lever

Prompt wording matters, but context quality usually matters more.

When people talk about "context engineering," they are really talking about giving the model the same grounding a senior engineer would ask for before implementing anything meaningful:

- What repository or module are we modifying?

- What architecture constraints are non-negotiable?

- What coding conventions and naming rules already exist?

- What trade-offs are acceptable for this specific change?

In other words, context engineering is about reducing ambiguity before generation starts.

In my own workflow, the most useful prompt improvements are often context payload improvements. I get better results from adding a concise architecture brief and representative examples than from endlessly polishing the first sentence of the prompt.
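One way to make those four grounding questions hard to skip is to capture them as a small data structure, so gaps are visible before a prompt is ever sent. The sketch below is hypothetical: `ContextPackage`, its field names, and `unanswered()` are illustrative names rather than any library's API. The two architecture constraints reuse the order-management example from this article, while the module name and naming convention are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class ContextPackage:
    """Hypothetical container for the grounding a senior engineer would ask for."""

    module: str = ""  # which repository or module is being modified
    architecture_constraints: list[str] = field(default_factory=list)  # non-negotiables
    conventions: list[str] = field(default_factory=list)  # existing coding/naming rules
    acceptable_tradeoffs: list[str] = field(default_factory=list)  # for this change only
    examples: list[str] = field(default_factory=list)  # representative snippets

    def unanswered(self) -> list[str]:
        """Return the names of grounding questions that are still empty."""
        checks = {
            "module": self.module,
            "architecture_constraints": self.architecture_constraints,
            "conventions": self.conventions,
            "acceptable_tradeoffs": self.acceptable_tradeoffs,
        }
        return [name for name, value in checks.items() if not value]

    def render(self) -> str:
        """Render a concise architecture brief; empty sections are skipped."""
        lines = [f"Module: {self.module}"]
        for title, items in (
            ("Architecture constraints", self.architecture_constraints),
            ("Conventions", self.conventions),
            ("Acceptable trade-offs", self.acceptable_tradeoffs),
            ("Representative examples", self.examples),
        ):
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


package = ContextPackage(
    module="Orders API",  # hypothetical module name
    architecture_constraints=["ASP.NET Core minimal API", "EF Core with SQL Server"],
    conventions=["Async methods end with 'Async'"],  # placeholder convention
)
print(package.unanswered())  # ['acceptable_tradeoffs']
print(package.render())
```

The point of `unanswered()` is that an empty field becomes a question the team answers before generation, not an assumption the model fills in.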

English Is Now an Operational Skill

This is where the "English as a programming language" claim becomes more than a metaphor.

In classic coding, English influenced naming, comments, and API discoverability. In AI-assisted coding, English influences behavior generation:

- Which components get created

- Which edge cases get tested

- Which assumptions are treated as facts

- Which risks are ignored

That means teams should treat prompts and context as first-class engineering artifacts.

If a prompt generated a flawed migration script, that is not a "tool issue" in isolation. It is a specification issue. If context omitted a critical domain rule, that omission is as consequential as missing a guard clause in code.
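To make the guard-clause comparison concrete, here is a minimal sketch of a pre-generation check that refuses to proceed when a required domain rule is missing from the context. `require_domain_rules`, `MissingContextError`, and the rule strings are hypothetical. Case-insensitive substring matching is a deliberately naive stand-in; a real team would decide how its required rules are sourced and matched.

```python
class MissingContextError(ValueError):
    """Hypothetical error raised when context omits a rule the task depends on."""


def require_domain_rules(context: str, required_rules: list[str]) -> None:
    """Guard clause: fail fast if any required rule is absent from the context.

    Matching is case-insensitive substring search, which is intentionally simple.
    """
    lowered = context.lower()
    missing = [rule for rule in required_rules if rule.lower() not in lowered]
    if missing:
        raise MissingContextError(f"Context is missing domain rules: {missing}")


# Hypothetical rules taken from the order-management example.
rules = ["reject negative totals", "currency must be an ISO 4217 code"]
context = "Orders API. Validation: reject negative totals."

try:
    require_domain_rules(context, rules)
except MissingContextError as err:
    print(err)  # Context is missing domain rules: ['currency must be an ISO 4217 code']
```

Failing before generation is the design choice here: an omitted rule surfaces as an explicit error rather than as a plausible-looking implementation that silently ignores it.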

Practical Prompt and Context Pattern

When I want reliable output, I tend to structure requests in this order:

- Objective: what we are trying to produce.

- Constraints: what cannot be violated.

- Context: architecture, domain rules, and existing patterns.

- Acceptance criteria: what "done" looks like.

- Exclusions: what should not be built.

In my experience, that shape consistently outperforms open-ended prompts in real delivery work, especially when multiple engineers iterate on the same task over several days.
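The five-part order above can be sketched as a small function that assembles a request from named sections, so the shape is enforced by code rather than by memory. `build_prompt` is a hypothetical helper, not a library API. The sample values regroup the order-management example from earlier in this article under the five headings.

```python
def build_prompt(
    objective: str,
    constraints: list[str],
    context: list[str],
    acceptance_criteria: list[str],
    exclusions: list[str],
) -> str:
    """Hypothetical helper: assemble a request in a fixed order.

    Order: objective, constraints, context, acceptance criteria, exclusions.
    Sections with no items are omitted.
    """
    sections = [
        ("Constraints", constraints),
        ("Context", context),
        ("Acceptance criteria", acceptance_criteria),
        ("Exclusions", exclusions),
    ]
    parts = [f"Objective: {objective}"]
    for title, items in sections:
        if items:
            bullet_list = "\n".join(f"- {item}" for item in items)
            parts.append(f"{title}:\n{bullet_list}")
    return "\n\n".join(parts)


prompt = build_prompt(
    objective="Build an ASP.NET Core minimal API for order management.",
    constraints=[
        "Reject negative totals",
        "Idempotent POST using a client-provided idempotency key",
    ],
    context=["Persistence: EF Core with SQL Server"],
    acceptance_criteria=[
        "GET /orders/{id} and POST /orders are implemented",
        "A focused test plan is included",
    ],
    exclusions=["Do not add authentication in this version"],
)
print(prompt)
```

Keeping exclusions as a named parameter makes "what should not be built" something a reviewer can see is empty, instead of something nobody thought to write down.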

The Team-Level Implication

The big risk is pretending this is just an individual writing skill.

In reality, it is a team system design problem. If your team has strong code standards but weak prompt and context standards, you create a reliability gap exactly where AI is supposed to help.

I have seen teams move faster once they start versioning prompt patterns, documenting context packages, and reviewing AI instructions with the same seriousness as code reviews. The gains are not only speed. The gains are repeatability.
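Versioning prompt patterns can start very small. The sketch below assumes a hypothetical in-memory `PromptRegistry` keyed by name and version, with a short SHA-256 fingerprint (from Python's standard `hashlib`) so reviewers can see at a glance when wording changed. The names, the version strings, and the rule that a published version's text cannot be edited in place are illustrative assumptions, not a prescribed process.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    """Hypothetical versioned prompt pattern."""

    name: str
    version: str
    text: str

    @property
    def fingerprint(self) -> str:
        """Short SHA-256 digest of the text; any wording change alters it."""
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]


class PromptRegistry:
    """Hypothetical in-memory registry; a team might back this with files in Git."""

    def __init__(self) -> None:
        self._templates: dict[tuple[str, str], PromptTemplate] = {}

    def register(self, template: PromptTemplate) -> None:
        key = (template.name, template.version)
        existing = self._templates.get(key)
        # Editing a published version in place is rejected; bump the version instead.
        if existing is not None and existing.text != template.text:
            raise ValueError(f"{template.name} {template.version} already exists with different text")
        self._templates[key] = template

    def get(self, name: str, version: str) -> PromptTemplate:
        return self._templates[(name, version)]


registry = PromptRegistry()
v1 = PromptTemplate("order-api", "1.0.0", "Build an ASP.NET Core minimal API for order management.")
registry.register(v1)
v2 = PromptTemplate("order-api", "1.1.0", v1.text + "\nDo not add authentication in this version.")
registry.register(v2)
print(v1.fingerprint, v2.fingerprint)  # differing digests flag a reviewable change
```

Refusing in-place edits is what gives repeatability: anyone who reran version 1.0.0 last week gets exactly the same instructions today.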

Reflection

So yes, your instinct is right: this topic is really about prompt engineering, context engineering, and giving precise English instructions to LLMs.

SQL belongs here only as the backstory. It reminds us that English-like interfaces have always made software feel approachable while hiding deeper mechanics. LLMs simply raise the stakes. We are no longer just writing code in English-flavored ecosystems. We are increasingly programming behavior through language itself, and that deserves the same rigor we already apply to architecture and implementation.
