Guide to Redis Local Instance Setup

Setting up a Redis local instance can significantly enhance your application's performance. This guide walks you through the process, ensuring you configure Redis for maximum efficiency and reliability.

Development Series — 23 articles

- Mastering Git Repository Organization

- CancellationToken for Async Programming

- Git Flow Rethink: When Process Stops Paying Rent

- Understanding System Cache: A Comprehensive Guide

- Guide to Redis Local Instance Setup

- Fire and Forget for Enhanced Performance

- Building Resilient .NET Applications with Polly

- The Singleton Advantage: Managing Configurations in .NET

- Troubleshooting and Rebuilding My JS-Dev-Env Project

- Decorator Design Pattern - Adding Telemetry to HttpClient

- Generate Wiki Documentation from Your Code Repository

- TaskListProcessor - Enterprise Async Orchestration for .NET

- Architecting Agentic Services in .NET 9: Semantic Kernel

- NuGet Packages: Benefits and Challenges

- My Journey as a NuGet Gallery Developer and Educator

- Harnessing the Power of Caching in ASP.NET

- The Building of React-native-web-start

- TailwindSpark: Ignite Your Web Development

- Creating a PHP Website with ChatGPT

- Evolving PHP Development

- Modernizing Client Libraries in a .NET 4.8 Framework Application

- Building Git Spark: My First npm Package Journey

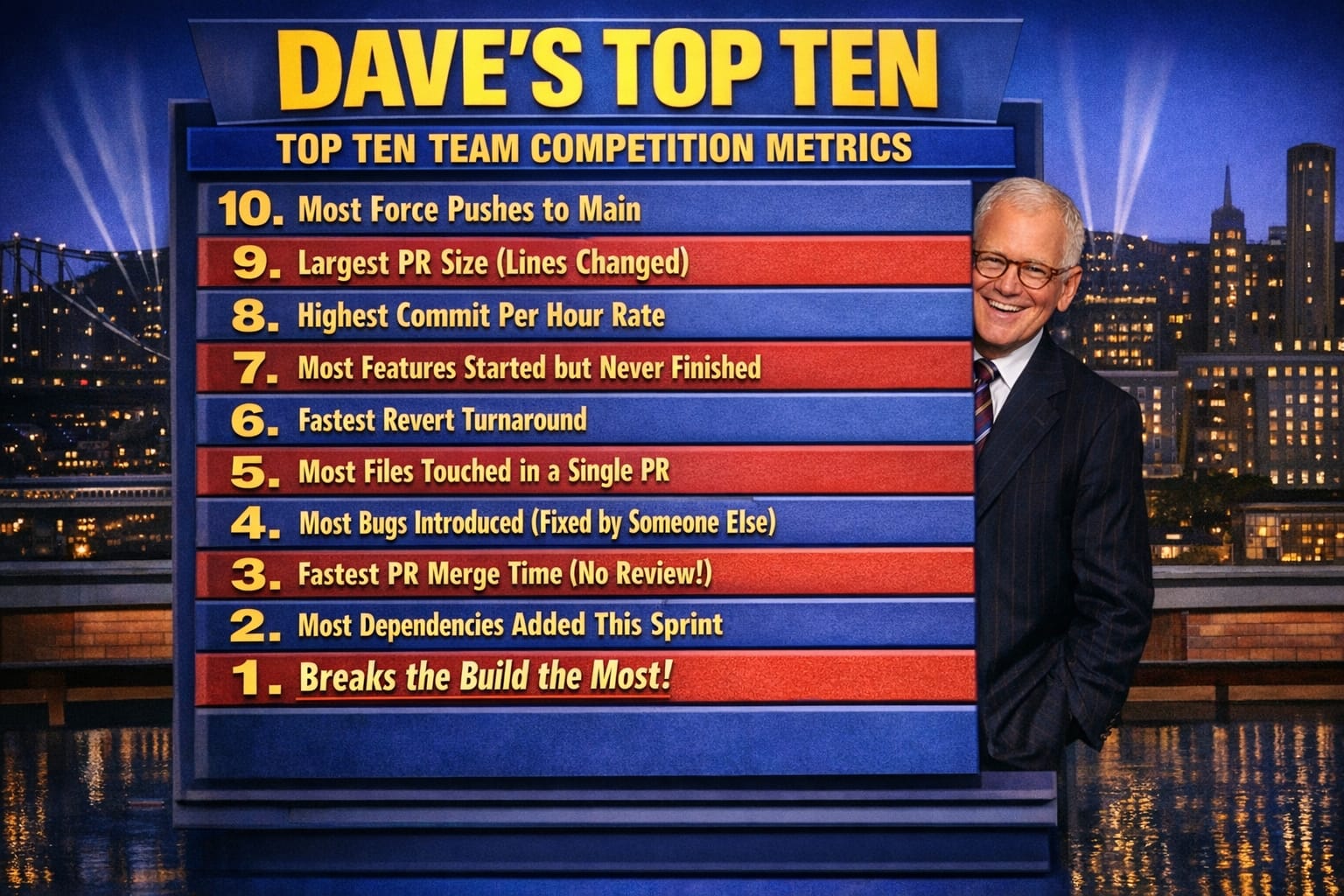

- Dave's Top Ten: Git Stats You Should Never Track

I've watched teams waste days fighting Redis memory limits and eviction policies they never configured. Redis isn't difficult to set up; the defaults are just quietly wrong for most development scenarios. On a recent project, I spent an afternoon debugging why cache entries kept going missing from our local Redis instance under moderate load. The culprit wasn't the application code. It was maxmemory-policy still sitting at noeviction, meaning Redis was refusing writes once it hit its memory cap rather than evicting old keys, so new entries never landed. Nobody had touched the config file after install. That's the kind of thing that doesn't show up in "getting started" guides, and it's exactly why I decided to write down what I actually do when I stand up a local Redis instance.

Why Run Redis Locally at All

The trade-off here is real: managed Redis (ElastiCache, Azure Cache for Redis, Upstash) is easier to operate in production, but developing against a remote cache introduces latency, shared-state problems between developers, and a hard dependency on network connectivity. In my experience, running Redis locally during development catches a whole class of bugs — race conditions in key expiry, cache stampede scenarios, serialization issues — that simply don't surface when you're pointing at a shared staging cache that's always warm.

What I've found is that local Redis also forces you to think about eviction and memory limits early. A managed instance hides those decisions behind console sliders. A local instance with a constrained maxmemory setting exposes them immediately, which is where you want to encounter them — not at 2am during a production incident.

Installation and First-Run Gotchas

On macOS, the fastest path is Homebrew:

brew install redis

brew services start redis

On Windows, the practical option is WSL2 with Ubuntu, then:

sudo apt update

sudo apt install redis-server

sudo service redis-server start

Running Redis natively on Windows via the old Microsoft-forked binaries is something I'd steer clear of: those builds stalled at version 3.x years ago and don't reflect anything close to current Redis behavior.

Verify the instance is running:

redis-cli ping

You should get PONG. If you get a connection refused error, check whether the service started cleanly. On WSL2, systemd isn't always running, so you may need to start Redis explicitly with redis-server rather than relying on the service wrapper.

The first gotcha I hit on a new machine is Redis binding only to 127.0.0.1 by default. That's correct for a local dev instance and you should leave it that way. The second gotcha is the config file location — Homebrew puts it at /usr/local/etc/redis.conf on Intel Macs and /opt/homebrew/etc/redis.conf on Apple Silicon. On Ubuntu it's /etc/redis/redis.conf. Know which one your process is actually reading before you start editing:

redis-cli config get '*' # shows running config, not file config

That distinction matters. I've edited a config file and restarted Redis, only to discover the service was reading a different file entirely. The config get command reads from the live running instance, so it tells you what's actually in effect. The quotes around * keep the shell from expanding it into file names before redis-cli sees it.
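To answer the "which file is it reading" question directly, the server section of INFO includes a config_file field naming the file the running instance loaded. Here is a minimal sketch of pulling that field out, assuming redis-cli is on the PATH and the instance is on the default port; the function names are my own, not part of any Redis tooling.

```python
import subprocess


def parse_config_file(info_text):
    """Return the config_file value from `INFO server` output, or None."""
    for line in info_text.splitlines():
        key, sep, value = line.strip().partition(":")
        if sep and key == "config_file":
            # An empty value means the server was started without a config file.
            return value or None
    return None


def running_config_file():
    """Ask the local instance which file it loaded (needs redis-cli on PATH)."""
    result = subprocess.run(
        ["redis-cli", "info", "server"],
        capture_output=True, text=True, check=True,
    )
    return parse_config_file(result.stdout)
```

If running_config_file() returns None, the instance was most likely started without a config file, and edits to any redis.conf on disk won't reach it.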

Configuration Trade-offs I've Had to Navigate

The defaults Redis ships with are conservative in some ways and dangerous in others. Here's what I change on every local instance:

Memory limit and eviction policy. Redis has no memory limit by default — it will consume all available RAM until the OS kills it or writes start failing. For local development I set a ceiling:

maxmemory 256mb

maxmemory-policy allkeys-lru

allkeys-lru evicts the least recently used keys when the limit is hit, which mimics what a properly configured production cache does. noeviction (the default) causes write errors instead, which is almost never what you want in a dev environment where you're testing cache behavior under load.
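The difference between the two policies is easier to see in a toy model than in a config file. The sketch below is not how Redis implements eviction: it counts entries rather than bytes, uses exact LRU where Redis approximates it by sampling, and returns False where Redis would report an error to the client. ToyCache and its methods are hypothetical names for illustration only.

```python
from collections import OrderedDict


class ToyCache:
    """Toy model: counts entries instead of bytes, and uses exact LRU."""

    def __init__(self, max_entries, policy):
        if policy not in ("noeviction", "allkeys-lru"):
            raise ValueError(f"unsupported policy: {policy}")
        self.max_entries = max_entries
        self.policy = policy
        self.data = OrderedDict()  # least recently used first, newest last

    def get(self, key):
        if key not in self.data:
            return None
        self.data.move_to_end(key)  # a read counts as a use
        return self.data[key]

    def set(self, key, value):
        # Simplification: overwriting an existing key is always allowed here.
        is_new = key not in self.data
        if is_new and len(self.data) >= self.max_entries:
            if self.policy == "noeviction":
                return False  # write refused; existing keys are untouched
            self.data.popitem(last=False)  # evict the least recently used key
        self.data[key] = value
        self.data.move_to_end(key)
        return True


strict = ToyCache(2, "noeviction")
lru = ToyCache(2, "allkeys-lru")
for cache in (strict, lru):
    cache.set("a", 1)
    cache.set("b", 2)
    cache.get("a")  # "a" is now more recently used than "b"

print(strict.set("c", 3), sorted(strict.data))  # False ['a', 'b']
print(lru.set("c", 3), sorted(lru.data))        # True ['a', 'c']
```

Under noeviction the third write is rejected and the cache stops accepting new entries; under allkeys-lru the write succeeds and "b", the key nobody has touched lately, makes room for it.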

Persistence. By default, Redis writes RDB snapshots to disk. On a development machine this is low-stakes noise — it creates .rdb files you don't need and adds disk I/O. I turn it off locally:

save ""

The trade-off here is that a Redis restart loses all cached data. For local dev, that's fine. You want a cold cache on restart to avoid stale test data polluting your sessions.

requirepass vs. no auth. I don't set a password on a local instance. It's bound to loopback, it holds no production data, and adding auth means updating every connection string in local config files. What I've found is that teams who require auth on local Redis inevitably end up with a shared password in plain text in appsettings.Development.json, which achieves nothing and adds friction. Save the auth enforcement for staging and production.

tcp-keepalive. The default is 300 seconds. On a local dev machine where processes start and stop constantly, I drop this to 60:

tcp-keepalive 60

This cleans up dead connections faster, which matters when you're restarting your application repeatedly during development.

A Debugging Story Worth Remembering

On a recent project, the team was using Redis to cache API responses with a 10-minute TTL. Everything worked in local testing. In staging, random requests were returning stale data well past the expiration window. We spent two days suspecting the application caching layer before I ran:

redis-cli debug sleep 0

redis-cli object encoding <key>

The encoding wasn't the issue, but the debugging process led me to check the Redis slow log:

redis-cli slowlog get 10

What I found was that the staging Redis instance was CPU-bound during a batch job that ran every 10 minutes, exactly aligned with the TTL. Redis is single-threaded for command processing. When the batch job hammered the instance with thousands of writes, TTL expiry checks were delayed, causing keys to linger past their expiration time. The fix was setting hz higher on the staging instance to increase the frequency of background tasks:

hz 20

The default is 10, meaning Redis runs background housekeeping (expiry, connection cleanup) 10 times per second. Under write-heavy load, that wasn't enough. Raising it to 20 resolved the stale key problem.

I wouldn't have found that without understanding how Redis handles expiry internally. Keys in Redis expire lazily (checked when accessed) and actively (a background sweep that runs hz times per second). Until one of those checks reaches it, an expired key lingers past its TTL. On a lightly loaded local machine this never surfaces. Under staging load, it did.
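Here is a toy model of those two expiry paths, driven by an explicit clock so the timing is easy to follow. It is a sketch under simplifying assumptions, not Redis's implementation: the sweep scans every key instead of sampling a few per cycle, and ToyExpiringStore is a hypothetical name. It shows where an expired key can sit between the two checks.

```python
class ToyExpiringStore:
    """Toy model of lazy plus active expiry, driven by an explicit clock."""

    def __init__(self):
        self.values = {}
        self.expire_at = {}  # key -> absolute expiry time in seconds

    def set(self, key, value, now, ttl):
        self.values[key] = value
        self.expire_at[key] = now + ttl

    def get(self, key, now):
        # Lazy expiry: the TTL is checked at the moment the key is read.
        if key in self.values and now >= self.expire_at[key]:
            self._delete(key)
        return self.values.get(key)

    def active_sweep(self, now):
        # Active expiry: a background pass removes keys nobody has read.
        # Simplification: scans every key instead of sampling a few per cycle.
        expired = [k for k, t in self.expire_at.items() if now >= t]
        for key in expired:
            self._delete(key)
        return len(expired)

    def _delete(self, key):
        del self.values[key]
        del self.expire_at[key]


store = ToyExpiringStore()
store.set("report", "cached", now=0.0, ttl=1.0)
store.set("profile", "cached", now=0.0, ttl=1.0)

# At t=1.02 both TTLs have passed, but no sweep has run yet.
print(len(store.values))              # 2: expired keys still occupy memory
print(store.get("report", now=1.02))  # None: the read triggers lazy expiry
print(len(store.values))              # 1: "profile" lingers until a sweep
print(store.active_sweep(now=1.1))    # 1: the next tick at hz=10 removes it
```

In this model, raising hz shortens the gap between ticks, so an unread key spends less time lingering after its TTL has passed.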

Connecting from .NET

In .NET applications I use StackExchange.Redis. The basic setup:

var connection = ConnectionMultiplexer.Connect("localhost:6379");

var db = connection.GetDatabase();

One configuration I always set explicitly in the connection string for local instances:

localhost:6379,connectTimeout=5000,syncTimeout=5000,abortConnect=false

abortConnect=false means the application starts even if Redis isn't available at startup, which is useful locally when services come up in different orders. In production you'd want to think harder about that choice, but locally it eliminates the "Redis wasn't up yet when the app started" startup crash that otherwise breaks your flow every time you restart things out of sequence.

What I've Learned from Setting This Up Repeatedly

The gap between "Redis is running" and "Redis is configured correctly for what I'm building" is wider than most people expect the first time through. The defaults get you to PONG in five minutes, but they leave you exposed to silent write failures, unexpected memory exhaustion, and expiry behavior that only misbehaves under load.

What I've learned is to treat the config file as a first-class artifact in the project, not an afterthought. I keep a redis.conf in the repository's local-dev folder with the memory limit, eviction policy, and persistence settings already dialed in. New developers on the project get a working, correctly configured Redis instance from day one, and we don't relitigate the maxmemory-policy conversation every time someone hits a write error they can't explain.
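One way to keep that file from drifting is to generate it from a single list of settings. This is a minimal sketch, assuming the values discussed in this article and a folder named local-dev; the function names are hypothetical, and a hand-written redis.conf checked into the repository works just as well.

```python
from pathlib import Path

# Settings taken from this article; tune the values per project.
LOCAL_DEV_SETTINGS = [
    ("bind", "127.0.0.1"),
    ("maxmemory", "256mb"),
    ("maxmemory-policy", "allkeys-lru"),
    ("save", '""'),
    ("tcp-keepalive", "60"),
]


def render_redis_conf(settings=LOCAL_DEV_SETTINGS):
    """Render directive/value pairs, one directive per line."""
    lines = ["# Local development only. Not for staging or production."]
    lines += [f"{name} {value}" for name, value in settings]
    return "\n".join(lines) + "\n"


def write_redis_conf(folder="local-dev"):
    """Write redis.conf into the given folder, creating it if missing."""
    target = Path(folder) / "redis.conf"
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(render_redis_conf(), encoding="utf-8")
    return target
```

With the file in place, redis-server local-dev/redis.conf starts an instance that reads exactly those settings, regardless of what the system-wide config says.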

The other thing worth knowing: redis-cli monitor is one of the most useful debugging tools in the stack. It streams every command hitting your Redis instance in real time:

redis-cli monitor

Run that while exercising your application and you'll immediately see duplicate keys, unexpected TTLs, and commands firing in the wrong order: things that are invisible in application logs. In my experience, five minutes with monitor resolves cache bugs that would otherwise take hours to isolate.
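When the stream scrolls too fast to read, a small filter helps. The sketch below parses saved monitor output and reports keys that were written more than once. It assumes the usual line shape (timestamp, then database and client address in brackets, then quoted arguments); the sample lines and function names are made up for illustration.

```python
import re
import shlex
from collections import Counter

# Shape of a MONITOR line: timestamp, [db client-address], quoted arguments.
MONITOR_LINE = re.compile(
    r'^(?P<ts>\d+\.\d+) \[(?P<db>\d+) (?P<client>[^\]]+)\] (?P<args>.+)$'
)


def parse_monitor_line(line):
    """Return (command, args) for one MONITOR line, or None if it doesn't match."""
    match = MONITOR_LINE.match(line.strip())
    if not match:
        return None  # e.g. the initial "OK" line
    try:
        parts = shlex.split(match.group("args"))
    except ValueError:
        return None  # unbalanced quotes; skip rather than guess
    if not parts:
        return None
    return parts[0].upper(), parts[1:]


def repeated_writes(lines, commands=("SET", "SETEX")):
    """Count writes per key and keep only keys written more than once."""
    counts = Counter()
    for line in lines:
        parsed = parse_monitor_line(line)
        if parsed and parsed[0] in commands and parsed[1]:
            counts[parsed[1][0]] += 1
    return {key: n for key, n in counts.items() if n > 1}


sample = [
    'OK',
    '1700000000.000001 [0 127.0.0.1:50001] "SET" "user:42" "a" "EX" "600"',
    '1700000000.000350 [0 127.0.0.1:50001] "GET" "user:42"',
    '1700000000.000900 [0 127.0.0.1:50002] "SET" "user:42" "a" "EX" "600"',
]
print(repeated_writes(sample))  # {'user:42': 2}
```

Two clients writing the same key within a millisecond of each other is the kind of pattern that points at a cache stampede or a missing lock.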
