Building Git Spark: My First npm Package Journey

How I built git-spark, my first npm package, from frustration to published tool, and what it taught me about the limits of Git analytics and the value of honest metrics.

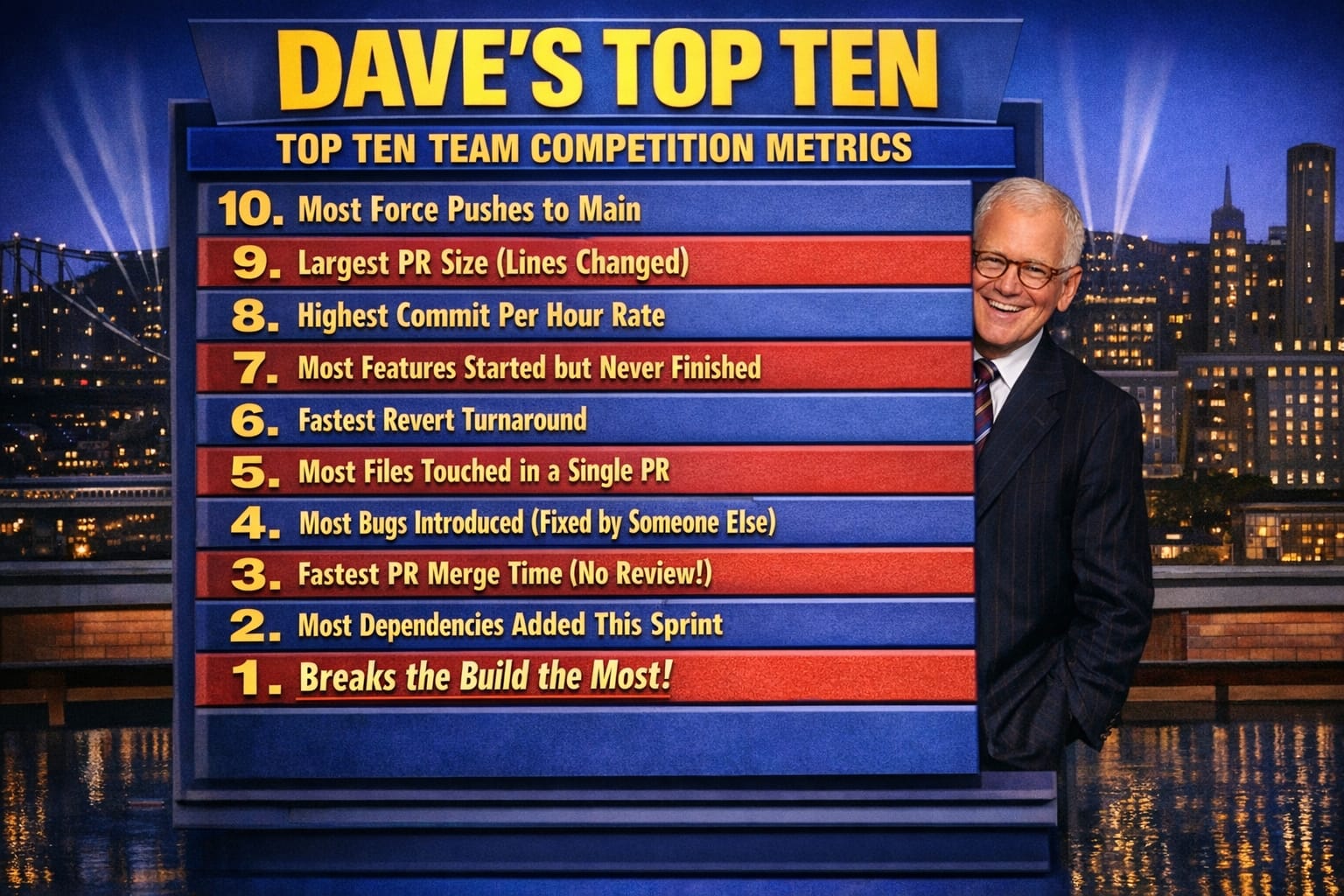


The Problem That Started It All

After writing about measuring AI's contribution to code, I was frustrated. I couldn't quantify how much AI agents were actually helping my development process. Git history seemed like the obvious answer—objective, comprehensive, and already being tracked. That weekend, I decided to build git-spark, my first npm package.

What I Set Out to Measure

My goal was simple: answer the question "How much of my code is generated by AI prompts?" I wanted to understand:

- How my development behavior changed over time

- What patterns existed in my Git repository data

- Whether Git history could reveal AI assistance levels

- Which metrics were actually meaningful vs. misleading

The Weekend Build Reality

Using AI agents (ironically, the same ones I was trying to measure), I got a great first build. The code worked, visualizations looked professional, and metrics seemed authoritative. Then I made a critical mistake: I actually read the formulas behind the numbers.

What I Learned About "Health Scores"

My first version calculated a "Repository Health Score" based on commit frequency, author distribution, and code churn. It looked scientific. It generated impressive charts. And it was complete nonsense. The formula assigned arbitrary weights to metrics that I couldn't meaningfully interpret, so the final number said more about my choice of weights than about the repository.
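To show why arbitrary weights are a problem, here is a minimal sketch. The metric names, sample values, and weights are all invented for illustration; this is not git-spark's original formula, only an assumed weighted sum of the kind described above.

```typescript
// Hypothetical illustration only: these metric values and weights are
// invented for this sketch and are not git-spark's actual formula.
interface RepoMetrics {
  commitFrequency: number;    // normalized to 0..1
  authorDistribution: number; // normalized to 0..1
  codeChurn: number;          // normalized to 0..1 (higher means more churn)
}

// One weight per metric, same shape as the metrics themselves.
type Weights = RepoMetrics;

// Weighted sum scaled to 0..100. Churn is inverted so less churn scores higher.
function healthScore(m: RepoMetrics, w: Weights): number {
  const raw =
    w.commitFrequency * m.commitFrequency +
    w.authorDistribution * m.authorDistribution +
    w.codeChurn * (1 - m.codeChurn);
  const totalWeight = w.commitFrequency + w.authorDistribution + w.codeChurn;
  return Math.round((raw / totalWeight) * 100);
}

// One repository: frequent commits, mostly from a single author.
const repo: RepoMetrics = {
  commitFrequency: 0.9,
  authorDistribution: 0.2,
  codeChurn: 0.5,
};

// Two weightings that are equally easy to justify, and equally arbitrary.
const favorActivity: Weights = { commitFrequency: 0.6, authorDistribution: 0.2, codeChurn: 0.2 };
const favorTeamSpread: Weights = { commitFrequency: 0.2, authorDistribution: 0.6, codeChurn: 0.2 };

const scoreA = healthScore(repo, favorActivity);
const scoreB = healthScore(repo, favorTeamSpread);
console.log(scoreA, scoreB); // prints: 68 40
```

The same repository scores 68 or 40 depending only on which weighting was picked. Nothing in the Git data says which one is right.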

Multiple Rewrites for Honesty

This realization forced multiple rewrites. Each iteration stripped away another layer of pretense, another attempt to derive meaning from data that simply didn't contain it. Building an enterprise-worthy package—something I'd put my name on—required brutal honesty about limitations.

What Git History Cannot Tell You

The biggest surprise from building git-spark: the most valuable aspects of software development leave absolutely no trace in commit logs. Despite all the interesting patterns git-spark revealed, I failed to achieve my primary goal: pinpointing AI agent contributions to my codebase.

Why Git Can't Answer the AI Question

- Git records commits, not process

- AI assistance happens before the commit

- No standard way to tag AI-generated code

- Human editing obscures AI origins

- Pair programming (human + AI) is invisible
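The points above become clearer when you look at what a commit actually records. The sketch below parses one line of `git log` output produced with a custom pretty format; the `CommitRecord` type, the `parseLogLine` helper, and the sample line are assumptions made up for this example, not git-spark code.

```typescript
// Assumed command producing the input:
//   git log --pretty=format:%H%x1f%an%x1f%ae%x1f%aI%x1f%s
// %x1f emits the ASCII unit separator, used here as a field delimiter.
interface CommitRecord {
  hash: string;        // %H  full commit hash
  authorName: string;  // %an author name
  authorEmail: string; // %ae author email
  authorDate: string;  // %aI author date, strict ISO 8601
  subject: string;     // %s  first line of the commit message
}

// Hypothetical helper: split one log line into its five fields.
function parseLogLine(line: string): CommitRecord {
  const parts = line.split("\x1f");
  if (parts.length !== 5) {
    throw new Error("expected 5 fields, got " + parts.length);
  }
  const [hash, authorName, authorEmail, authorDate, subject] = parts;
  return { hash, authorName, authorEmail, authorDate, subject };
}

// Invented sample line, not taken from a real repository.
const sampleLine = [
  "0123456789abcdef0123456789abcdef01234567",
  "Sample Author",
  "author@example.com",
  "2025-01-06T09:00:00+00:00",
  "Add report export",
].join("\x1f");

const parsed = parseLogLine(sampleLine);
console.log(parsed.authorName, parsed.subject);
```

Who, when, and a message: that is the extent of it. No field describes how the change was produced, so any claim about AI involvement would have to come from somewhere other than this metadata.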

What Git Spark Does Differently

Instead of fake health scores, git-spark reports observable patterns:

- Commit frequency and temporal trends

- File coupling and change patterns

- Author contribution distributions

- Code structure evolution over time

What it refuses to do:

- Generate productivity scores

- Rank or compare developers

- Measure code quality from lines of code

- Pretend to know AI contribution levels

- Infer anything not directly observable
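As a rough illustration of the "observable patterns" side of that split, the sketch below counts commits per day, commits per author, and how often two files change in the same commit. The `Commit` shape, function names, and sample data are hypothetical and chosen for this example; git-spark's internals may look quite different.

```typescript
// Hypothetical input shape: one entry per commit, for example assembled
// from `git log --name-only` output. Not git-spark's internal model.
interface Commit {
  author: string;
  date: string;    // ISO 8601 timestamp
  files: string[]; // paths touched by the commit
}

// Generic tally helper: count how many commits map to each key.
function countBy(commits: Commit[], keyOf: (c: Commit) => string): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const c of commits) {
    const key = keyOf(c);
    counts[key] = (counts[key] || 0) + 1;
  }
  return counts;
}

// Commit frequency: commits per calendar day, using the date as written.
function commitsPerDay(commits: Commit[]): Record<string, number> {
  return countBy(commits, (c) => c.date.slice(0, 10));
}

// Author distribution: commits per author.
function commitsPerAuthor(commits: Commit[]): Record<string, number> {
  return countBy(commits, (c) => c.author);
}

// File coupling: number of commits in which both files of a pair changed.
function fileCoupling(commits: Commit[]): Record<string, number> {
  const pairs: Record<string, number> = {};
  for (const c of commits) {
    // De-duplicate and sort so each pair gets one stable key.
    const files = c.files.filter((f, i) => c.files.indexOf(f) === i).sort();
    for (let i = 0; i < files.length; i++) {
      for (let j = i + 1; j < files.length; j++) {
        const key = files[i] + " <-> " + files[j];
        pairs[key] = (pairs[key] || 0) + 1;
      }
    }
  }
  return pairs;
}

// Invented sample history.
const history: Commit[] = [
  { author: "dev-one", date: "2025-01-06T09:00:00Z", files: ["a.ts", "b.ts"] },
  { author: "dev-one", date: "2025-01-06T15:30:00Z", files: ["a.ts", "b.ts", "docs.md"] },
  { author: "dev-two", date: "2025-01-07T10:00:00Z", files: ["docs.md"] },
];

console.log(commitsPerDay(history));
console.log(commitsPerAuthor(history));
console.log(fileCoupling(history));
```

Each result is a plain count that anyone can verify against the log. Whether two commits in a day is "healthy", or whether `a.ts` and `b.ts` changing together is a problem, is left to the reader who knows the team and the codebase.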

Lessons Learned

Building my first npm package taught me:

- Testing and validation matter: Enterprise-worthy tools require rigorous testing

- Transparency builds trust: Show your formulas or don't show scores

- Honesty over authority: Admit what you can't measure

- Context is everything: Patterns mean different things for different teams

- Some questions remain unanswered: AI contributions are still invisible in Git

Failing Successfully

I set out to answer "How much of my code is generated by AI prompts?" I built an entire analytics tool, published my first npm package, and learned more about Git internals than I ever expected. And I still can't answer that question.

But that failure taught me something more valuable: the discipline of honest measurement. Not every question has a data-driven answer. Not every metric is meaningful. And tools that claim to measure everything often measure nothing reliably.

Try Git Spark

Git-spark is early (version 0.x) but functional. It's built in the open on GitHub and available on npm. I'm actively seeking feedback on what's valuable and what needs rework. The goal: create an honest, trustworthy reporting tool that adds value without inventing scores from data that isn't there.

Install it globally with npm install -g git-spark, or run it without a global install using npx git-spark analyze

Conclusion

The best metrics tools don't pretend to have all the answers. They provide honest data and trust you to ask better questions. That's the philosophy behind every line of code in git-spark, and I hope it's useful to others wrestling with these same measurement challenges.
