Mastering LLM Prompt Engineering

Unlock the full potential of Large Language Models like ChatGPT, Claude, and Gemini by mastering prompt engineering, context strategies, and best practices for AI-powered conversations and code generation.

The Art of Effective AI Communication

I watched a team spend two weeks tuning prompts for a code-generation task, only to realize they were fighting token limits, not craft. Every prompt was either too thin to give the model real direction, or so bloated with background that the actual request got buried. That experience taught me something about how we talk to these models: context balance isn't about unlocking potential—it's about preventing your LLM from either giving you half-baked answers or running out of room mid-task.

Large Language Models (LLMs) are remarkable tools with impressive capabilities. Since ChatGPT's launch in 2022, the AI landscape has exploded with powerful models like Claude Sonnet, GPT-4, Gemini, and many others, each attracting millions of users. How much you get out of any of them depends heavily on one thing: prompt engineering.

Why Prompts Matter

Modern LLMs like ChatGPT, Claude Sonnet, and GPT-4 excel at mimicking the nuances of human conversation. They're trained on diverse internet text, enabling them to generate creative responses, navigate complex dialogues, and even exhibit humor. Worth noting, though: these models don't truly understand or have beliefs; they generate responses based on patterns learned during training.

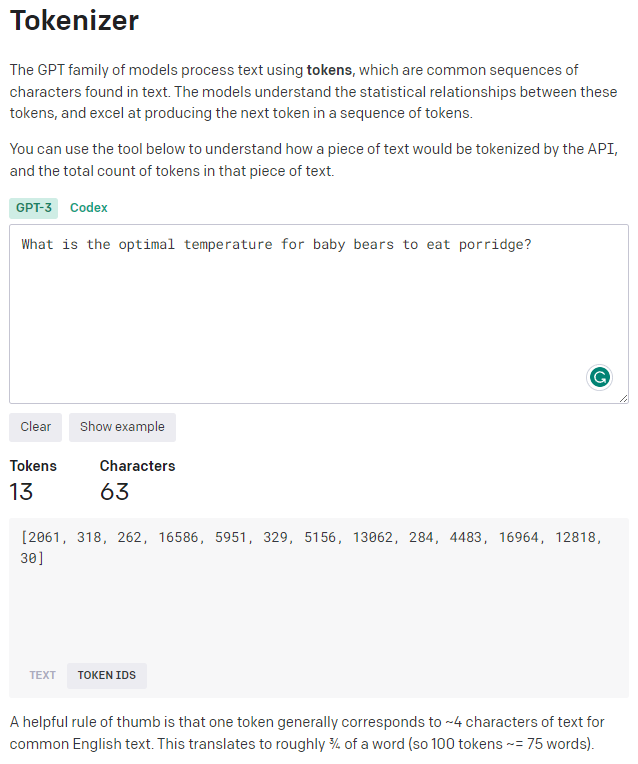

LLMs generate output based on statistical patterns learned from vast amounts of data, without any inherent sense of what is true or false. They process a prompt as a sequence of tokens representing the input text. You can see this tokenization in action using a tool like OpenAI's Tokenizer.

These models use token sequences to predict the most likely continuation, drawing upon patterns learned during training. They cannot reason or comprehend the deeper meaning behind the information they generate.
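
To make "predict the most likely continuation" concrete, here is a deliberately tiny sketch. It is a toy, not how any production LLM works: real models use neural networks over subword tokens, while this one simply counts which word followed which in a made-up training sentence. The function names and the corpus are invented for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram_model(text: str) -> Dict[str, Counter]:
    """Count which word follows each word in the training text."""
    words = text.lower().split()
    following: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent continuation seen in training, if any."""
    candidates = model.get(word.lower())
    if not candidates:
        return None  # the model has no pattern to draw on
    return candidates.most_common(1)[0][0]


# Made-up training text: "hot" follows "is" twice, "cold" only once.
corpus = "the porridge is hot . the porridge is cold . the porridge is hot ."
model = train_bigram_model(corpus)

print(predict_next(model, "is"))    # hot
print(predict_next(model, "bear"))  # None
```

The toy model answers "hot" because that was the most common pattern in its data, not because it knows anything about porridge. That is the same reason outputs from real models still need cross-checking.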

Understanding how LLMs work is what makes them useful. Whether you're working with ChatGPT, Claude, Gemini, or other models, they can be valuable tools for generating code, unit tests, boilerplate methods, and various language-related tasks. As with any tool, it's up to the user to cross-check outputs for accuracy and validity, particularly for critical or factual information.

The Three Bears of Prompt Engineering

There's a real pattern I've encountered repeatedly that maps to the Goldilocks problem: too little context leaves LLMs guessing, too much context buries your actual request, and the middle ground produces the focused responses you actually want. The metaphor is cute, but the two failure modes are concrete, and I've hit both of them.

Papa Bear: Too Little Context

The sparse prompt is the one I see most often from developers who are new to working with LLMs. A brief, vague prompt leaves the model guessing about intent. In practice, I sent a one-line prompt to Claude asking for a data validation function, and got back something that looked right but had no error handling whatsoever. That's the cost of too little — the model fills in gaps with the most statistically probable answer, not the most complete one. Without sufficient context, AI models like ChatGPT, Claude, or Gemini produce irrelevant or generic responses because they have nothing else to work with.

Example of Too Little Context:

"Temperature?"

This prompt is too vague: temperature of what? For what purpose? The model has no context to work with.

Mama Bear: Too Much Context

On a recent project, I watched someone paste three paragraphs of project history into a prompt before asking a simple question about sorting an array. The model dutifully addressed the backstory and nearly missed the actual request. Too much information buries your question. What I've found is that excessive context doesn't make the model smarter — it gives the model more surface area to wander across, and the actual request gets proportionally less attention. This applies whether you're using ChatGPT, Claude, or another model.

Example of Too Much Context:

"In the cozy little cottage on the hill, where the fireplace crackles and the night is chilly, surrounded by memories of childhood and the warmth of family gatherings, what is the optimal temperature for the porridge to warm our souls and tummies while we sit around the wooden table that's been in the family for generations?"

This prompt is buried in unnecessary details that distract from the core question.

Baby Bear: Just the Right Context

In my experience, the balance point depends entirely on the task — code generation can absorb five times more context than a quick brainstorm, and a reasoning task like debugging benefits from a precise description of what went wrong rather than a full architectural overview. The goal is giving the model exactly what it needs to understand intent without overwhelming it.

Example of Just Right Context:

"What is the optimal serving temperature for porridge to ensure it's safe to eat but not too hot for a child?"

This prompt is specific, clear, and provides just enough context for a focused, useful response.

The principle applies every time you write a prompt: avoid being too vague or too exhaustive. Strike the balance — not too little, not too much, but just enough context to get the result you need.
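
One way to catch the "too much" case before sending a prompt is a rough budget check. The sketch below assumes the common rule of thumb of about four characters per token for English text; real tokenizers will give different counts, and the context window and reserved output size shown are hypothetical numbers, not the limits of any particular model.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate from character count (heuristic, not exact)."""
    return max(1, round(len(text) / chars_per_token))


def fits_budget(prompt: str, context_window: int, reserved_for_output: int) -> bool:
    """True if the prompt still leaves the reserved room for the reply."""
    return estimate_tokens(prompt) + reserved_for_output <= context_window


# Hypothetical limits chosen only for the example.
CONTEXT_WINDOW = 8_000
RESERVED_FOR_OUTPUT = 1_000

short_prompt = "Temperature?"
bloated_prompt = "project history " * 2_500  # 40,000 characters of backstory

print(estimate_tokens(short_prompt))                                      # 3
print(fits_budget(short_prompt, CONTEXT_WINDOW, RESERVED_FOR_OUTPUT))     # True
print(fits_budget(bloated_prompt, CONTEXT_WINDOW, RESERVED_FOR_OUTPUT))   # False
```

Note that the one-word prompt passes this check easily. A budget check only guards against running out of room; it says nothing about whether the prompt gives the model enough direction.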

Improving LLM Prompt Crafting

Here are some prompt engineering approaches that apply across all LLM platforms:

- Use clear language — be explicit about your requirements and expectations

- Be concise — avoid overly complex or lengthy prompts that work poorly on any model

- Iterate and experiment — refine prompts to fine-tune outputs for your specific LLM

- Be specific to your domain (e.g., Python, JavaScript) when needed

- Include code samples for programming-related queries

- Clarify the objective and desired output format

- Ask for best practices or step-by-step guidance

- Pose real-world problem-solving scenarios with context

- Request comparisons between different approaches or technologies

- Ask for debugging help with specific error messages

- Explore advanced features and design patterns in your field

- Consider the strengths of different models (e.g., Claude for reasoning, GPT for creativity)
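
Several of the points above (state the objective, name the domain, include a code sample, quote the exact error, specify the output format) can be folded into a small template. This is a minimal sketch; the `PromptSpec` class, its field names, and the section labels are invented for illustration and are not part of any library.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptSpec:
    """Hypothetical container for the pieces of a focused prompt."""
    objective: str
    domain: Optional[str] = None
    code_sample: Optional[str] = None
    error_message: Optional[str] = None
    output_format: Optional[str] = None


def build_prompt(spec: PromptSpec) -> str:
    """Assemble only the sections that were actually provided."""
    parts = []
    if spec.domain:
        parts.append(f"Domain: {spec.domain}")
    parts.append(f"Objective: {spec.objective}")
    if spec.code_sample:
        parts.append("Code:\n" + spec.code_sample)
    if spec.error_message:
        parts.append(f"Error message: {spec.error_message}")
    if spec.output_format:
        parts.append(f"Desired output format: {spec.output_format}")
    return "\n\n".join(parts)


spec = PromptSpec(
    objective="Explain why this function raises and suggest a fix.",
    domain="Python",
    code_sample="def mean(values):\n    return sum(values) / len(values)",
    error_message="ZeroDivisionError: division by zero",
    output_format="A corrected function followed by a one-paragraph explanation.",
)
print(build_prompt(spec))
```

Empty fields are simply left out, so the template nudges you toward including what matters without padding the prompt with sections that have nothing in them.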

What I've learned is that mastering prompt engineering is less about memorizing rules and more about developing an instinct for what a model needs to do its job well. By providing clear instructions and relevant context, you can use these tools — whether ChatGPT, Claude Sonnet, Gemini, or others — to produce genuinely useful output rather than plausible-sounding noise.

Prompt Engineering as a Discipline

Prompt engineering is the art of crafting precise, effective prompts to guide AI models like ChatGPT, Claude Sonnet, Gemini, and other LLMs toward generating cost-effective, accurate, useful, and safe outputs. It's not confined to text generation — it has wide-ranging applications across the AI domain, and it's becoming a distinct technical skill with real career weight behind it.

Prompt engineers play a vital role in optimizing AI models' efficiency and cost-effectiveness. They can access various models through different APIs — OpenAI's GPT models, Anthropic's Claude, Google's Gemini, and others — each with unique cost structures and capabilities. Parameter tuning and prompt optimization are essential for improving response quality and accuracy across different platforms.
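
To see how cost structures interact with prompt length, here is a back-of-the-envelope sketch. It assumes pricing is quoted per million tokens with separate input and output rates, which is a common arrangement. The model names and prices below are placeholders made up for the example, not real price lists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical prices in dollars per one million tokens."""
    input_per_million: float
    output_per_million: float


def estimate_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Estimated dollar cost of one call at the given token counts."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


# Placeholder models and prices, for illustration only.
PRICE_TABLE = {
    "model-a": Pricing(input_per_million=1.00, output_per_million=4.00),
    "model-b": Pricing(input_per_million=0.25, output_per_million=1.00),
}

for name, pricing in PRICE_TABLE.items():
    cost = estimate_cost(pricing, input_tokens=2_000, output_tokens=500)
    print(f"{name}: ${cost:.4f} per call")
```

Trimming unnecessary context from a prompt lowers the input token count on every call, which is where the savings add up at volume.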

Prompt design involves creating the right prompt for a language model to achieve a stated goal. It considers each model's unique nuances, domain knowledge, and quality measurement criteria. Modern prompt engineering extends this to include designing prompts at scale, tool integration, workflow planning, prompt management, evaluation, and optimization across multiple LLM platforms.

LLM-Specific Considerations

Not all LLMs are created equal. Each model family has its own strengths, and understanding these differences helps you tailor your prompts for better results.

ChatGPT and GPT Models

- Excellent for creative writing and brainstorming

- Strong code generation capabilities

- Responds well to conversational prompts

- Supports custom instructions for personalization

Claude Sonnet and Opus

- Superior reasoning and analysis

- Excellent for complex problem-solving

- Great at following detailed instructions

- Handles long-form content exceptionally well

Google Gemini

- Strong multimodal capabilities

- Excellent for factual information

- Good at structured data tasks

- Integrates well with Google services

Open Source Models

- Llama, Mistral, and others offer customizability for specific domains

- Cost-effective for high-volume use

- Full control over deployment and data

- Growing ecosystem of fine-tuned variants

Custom Instructions and System Prompts

Most modern LLMs support some form of custom instructions or system prompts that let you set persistent guidelines for your interactions. These directives tell the model your preferences up front, whether you're using ChatGPT's Custom Instructions, Claude's system prompts, or similar features in other models.

These instructions are especially useful when you have specific expectations for the content, style, or format of responses. By using custom instructions, you can shape how AI models respond in ways that carry across an entire session or project, rather than re-explaining your context every time.

Here's how custom instructions work across major platforms:

- ChatGPT: Use Custom Instructions in settings to set persistent preferences

- Claude: Begin conversations with system prompts or use project-specific instructions

- API Usage: Include system messages in your API calls for consistent behavior

- Open Source: Configure system prompts during model initialization
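
For the API case, the sketch below builds request bodies as plain dictionaries without calling any service. It reflects my understanding of two common shapes: a chat-style request where the system prompt is the first entry in `messages`, and a request with a separate top-level `system` field. The helper names and the model name are placeholders, and field requirements change over time, so check the provider's current API reference before relying on either shape.

```python
from typing import Any, Dict

SYSTEM_PROMPT = "You are a senior C# reviewer. Answer with code first, then a short rationale."


def chat_style_payload(system_prompt: str, user_prompt: str, model: str) -> Dict[str, Any]:
    """System prompt sent as the first message in the list."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
    }


def top_level_system_payload(
    system_prompt: str, user_prompt: str, model: str, max_tokens: int = 1024
) -> Dict[str, Any]:
    """System prompt sent as its own field, separate from the messages."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": system_prompt,
        "messages": [{"role": "user", "content": user_prompt}],
    }


# "example-model" is a placeholder, not a real model identifier.
question = "How should I validate this DTO?"
print(chat_style_payload(SYSTEM_PROMPT, question, "example-model"))
print(top_level_system_payload(SYSTEM_PROMPT, question, "example-model"))
```

Either way, the system prompt is defined once and reused for every call, which is what gives consistent behavior without repeating the instructions in each user message.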

Whether you're looking for code solutions, explanations, or engaging conversations, custom instructions are one of the most underused tools for getting consistent, targeted output from any LLM platform.

The Power of Well-Crafted Prompts

The difference between a mediocre AI interaction and a genuinely useful one usually comes down to how well the prompt is crafted. That's not a platitude — I've seen the same model produce useless output and genuinely impressive output on the same task, depending entirely on how the request was framed.

Well-crafted prompts are the foundation of productive AI-powered work, regardless of which LLM you choose. Start with your next prompt: tighten the context, clarify the objective, and see what changes — whether you're using ChatGPT, Claude Sonnet, Gemini, or any other model.

"The key to effective AI interaction lies in the art of crafting the perfect prompt — giving models just the right context to do their best work."
