Stop Digging Through Logs. Start Designing for Learning.

Analytics and development teams operate on the same data with different goals, different time horizons, and different definitions of what matters. This article is about that structural gap, and about the analytic contract that makes both teams' assumptions visible before something breaks.

Software Engineering Series — 3 articles

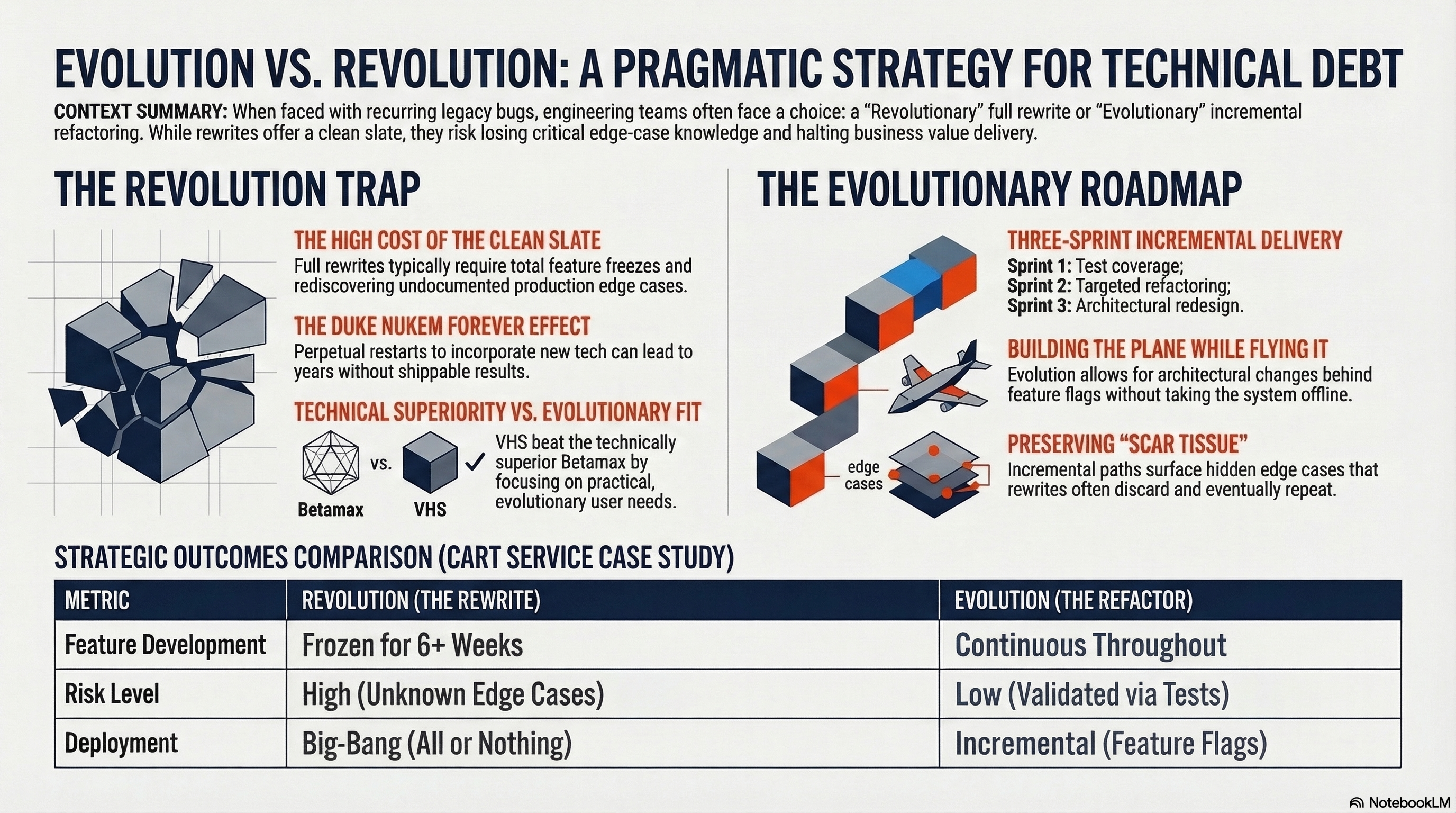

- Evolution over Revolution: A Pragmatic Approach

- RESTRunner: Building a DIY API Load Testing Tool

- Stop Digging Through Logs. Start Designing for Learning.

Indiana Jones Had It Easy

Indiana Jones built a career reading dead civilizations. Artifacts, ruins, things people left behind without knowing anyone would come looking for them. The methodology works when the civilization is gone. The problem with analytics is that the civilization is still running — and it changes while you are reading last quarter's map.

One afternoon I got a call from an executive asking why a trusted report had stopped showing data. Not slowed down. Gone to zero.

The answer was not a data lake outage or a dashboard bug. In the previous sprint, the development team had changed how a transaction moved through its final workflow stages. The field the analytics team had been reading as the transaction-completion indicator shifted. From development's perspective, it was an implementation detail — in-memory workflow tracking, not a business record. From analytics' perspective, it was the most deterministic signal they had.

That gap between what development built and what analytics read from it is what I want to talk about. Not as a failure story — the people involved were competent and acting reasonably — but as a structural dynamic that shows up in almost every organization with a development team on one side and a reporting function on the other.

Most organizations treat analytics like archaeology: digging through what the system left behind, inferring meaning from residue. Archaeology reconstructs cultures that no longer exist from the artifacts they happened to leave behind. Applied to software, the data becomes a lagging record of something that already ended, produced by a system that may have already moved on.

Sociology is different. It studies living, vibrant cultures — in motion, in the present. A sociologist is not reconstructing what happened; they are observing what is happening now, which is the only vantage point from which you can spot where things are going. The same distinction applies to analytics. Reading logs after the fact tells you about the past. Observing a system that was designed to be observed tells you about the present — and gives you something to say about the future.

That shift is not a tool change. It is a relationship change.

Two Teams Reading the Same Data Differently

Development teams build systems. They make decisions about data structures, field names, object graphs, and state management based on what makes the code work. A field exists because it serves a purpose in the application's logic, not because someone decided it should be readable by a reporting tool.

Analytics teams read systems. They make decisions about which fields to trust, which events to count, and which data points are stable enough to build reports on. They look at what the application produces and infer meaning from the structure they find.

Those two activities have different goals, different time horizons, and fundamentally different definitions of "what matters." A developer thinking about a field asks: does this serve the application correctly? An analyst thinking about the same field asks: does this reliably represent what I'm trying to measure?

Both questions are reasonable. Neither team is doing anything wrong. But when each team answers its own question without the other in the room, the data gets interpreted in two parallel ways, and the divergence only becomes visible when something breaks.

Development experiences this as: "We changed an implementation detail and something broke that we didn't know was depending on it."

Analytics experiences this as: "The data we were relying on disappeared — not because the business changed, but because the system did."

The asymmetry is structural. Development naturally operates at the level of sessions, requests, and code paths. Analytics naturally operates at the level of transactions, outcomes, and trends. The same field means something different depending on which level you are reading from. Neither interpretation is wrong — they are just not the same interpretation, and most organizations have no mechanism to make that visible until it causes a problem.

What Makes This a Relationship Problem

Most organizations respond to this with documentation. A data catalog. A data dictionary. A wiki entry someone writes when the field is created and nobody updates when it changes.

That approach treats the problem as an information gap — analytics just does not know what the field means. But the real problem is a relationship gap: analytics and development do not have a mechanism to tell each other what they need from the data the other team is producing or consuming.

The analytics team that built reporting logic on that transaction field was not uninformed. They looked at the available data and made the most reasonable inference they could. What they did not know was that the field had no durability guarantee from development's side. Development did not withhold that information deliberately — they did not know anyone was depending on it at the report level.

Neither team could see the other's assumptions. They were making separate, reasonable decisions that accumulated into a structural risk.

Once the source was traced, the conversation that followed had the structure of a classic Indiana Jones scene: specifically, the idol swap in the first ten minutes of Raiders of the Lost Ark.

Development explained it first: the old way of tracking closed transactions stored state in memory across the workflow, which was messy and technically wrong. The new way wrote the final status directly to the record and cleaned everything up. An improvement by every measurable standard.

Analytics explained what they had been reading: a field that had been the most reliable indicator of transaction completion for eighteen months. Consistent. Deterministic. Gone.

The developer's response was some version of: "That field? That was just a workflow flag. I had no idea anyone was reporting on it."

The analytics lead's response was some version of: "It was the cleanest signal we had."

In Raiders, Indy carefully swaps the golden idol for a bag of sand — a meticulous, well-reasoned substitution — and immediately triggers every trap in the temple. The idol was load-bearing in ways the map did not indicate. Development had done the same thing: replaced a messy, technically incorrect field with a clean, correct implementation, and in doing so set off every alarm in the reporting layer. The swap was an improvement. The boulder was the report going to zero.

The difference between Indy and the average development team: Indy at least suspected a trap existed.

The analytic contract is the mechanism that makes those assumptions visible — not as documentation, but as a conversation that happens at delivery time. It is less about writing things down and more about establishing shared understanding before a feature ships rather than reconstructing meaning after something breaks.

What the Conversation Changes

The core shift is in when analytics becomes a stakeholder in a feature. In most organizations, analytics is post-delivery: the team ships, the data appears in the warehouse, and analytics figures out what to do with it. That sequence is why reporting logic ends up built on implementation details — those details are all that is available at the point analytics arrives.

The analytic contract moves analytics into the delivery conversation. Not as a reviewer or a gatekeeper, but as someone whose question — "how will we know this feature is working, from the data it produces?" — is worth asking before the sprint closes.

That question is different from "what does this feature do?" It asks: what behavior needs to be observable after this ships? Which fields or events will carry that signal? What would we need to change if we wanted to answer a different business question from the same system?

When development hears that question before shipping, a few things change. Fields that were going to be ephemeral get a durability decision made explicitly rather than by default. Events that were going to be implicit get considered as reporting surfaces. And occasionally, the conversation reveals that the analytics team's reporting question cannot actually be answered from the data the feature was about to produce — which is much easier to address before the sprint closes than after the report breaks.
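One way to make the durability decision explicit is to write it down as data during the delivery conversation. The sketch below is a minimal illustration in Python, not something the teams in this story used. `Durability`, `SignalDecision`, `open_items`, and every field and report name in it are hypothetical, chosen only to mirror the transaction example above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Durability(Enum):
    EPHEMERAL = "ephemeral"  # implementation detail; may change without notice
    DURABLE = "durable"      # development commits to a stable name and meaning
    UNDECIDED = "undecided"  # nobody has made the call yet


@dataclass
class SignalDecision:
    name: str                                  # field or event name (hypothetical)
    question: str                              # business question the signal answers
    durability: Durability = Durability.UNDECIDED
    consumers: list[str] = field(default_factory=list)  # reports that read it


def open_items(decisions):
    """Return (name, reason) pairs for signals a report reads but nobody has made durable."""
    items = []
    for d in decisions:
        if d.consumers and d.durability is not Durability.DURABLE:
            reason = f"read by {', '.join(d.consumers)} but {d.durability.value}"
            items.append((d.name, reason))
    return items


# Example: the workflow flag from the story, next to a deliberately durable field.
decisions = [
    SignalDecision(
        name="workflow_stage_flag",
        question="Did the transaction complete?",
        durability=Durability.EPHEMERAL,
        consumers=["executive_completion_report"],
    ),
    SignalDecision(
        name="final_status",
        question="Did the transaction complete?",
        durability=Durability.DURABLE,
        consumers=["executive_completion_report"],
    ),
    SignalDecision(name="retry_count", question="How often do we retry?"),
]

for name, reason in open_items(decisions):
    print(f"{name}: {reason}")
# prints: workflow_stage_flag: read by executive_completion_report but ephemeral
```

The structure matters less than the fact that "ephemeral" and "undecided" are recorded choices both teams can see, rather than defaults nobody examined.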

For analytics, the shift is from reading whatever exists to participating in what gets designed. That changes the relationship from consumer to collaborator — and it changes what analytics is able to build, because the signals are designed rather than inferred.

What I've observed in teams that make this shift: the reports become more stable, not because the development team got more careful, but because both teams started having the right conversation earlier. Development does not need to change how they code. They need to know which of their decisions have reporting consequences so they can make those decisions deliberately rather than by accident.

If a field drives an executive report, it is no longer just a field. It is part of the product contract. The analytic contract is just a way of saying that out loud before something breaks.
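Saying it out loud can also be backed by a small automated check. The following is a rough sketch, assuming the contract is kept as a plain mapping from field name to the reports that read it. The function name `broken_dependencies`, the field names, and the report name are hypothetical illustrations, not an existing tool or the system described above.

```python
def broken_dependencies(old_fields, new_fields, contract):
    """List (field, report) pairs where a change removes a field a report reads.

    old_fields, new_fields: iterables of field names before and after the change.
    contract: dict mapping field name -> list of report names that read it.
    """
    removed = set(old_fields) - set(new_fields)
    return sorted(
        (name, report)
        for name in removed
        for report in contract.get(name, [])
    )


# Hypothetical contract: one report depends on the workflow flag.
contract = {"workflow_stage_flag": ["executive_completion_report"]}

before = {"transaction_id", "amount", "workflow_stage_flag"}
after = {"transaction_id", "amount", "final_status"}

for name, report in broken_dependencies(before, after, contract):
    print(f"{report} reads {name}, which this change removes")
# prints: executive_completion_report reads workflow_stage_flag, which this change removes
```

A check like this only catches a field that disappears or is renamed. It does not notice a field whose meaning shifts while its name stays the same, which is why it supports the conversation rather than replacing it.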

When analytics starts after delivery, it is doing archaeology — always looking backward, always reconstructing meaning from a culture that has already changed. When analytics is designed into delivery, it becomes sociology: a living system observed in motion, with evidence built to capture not just what happened but what is happening now. That is the only position from which you can ask where things are going — and have data worth trusting when you try to answer.
