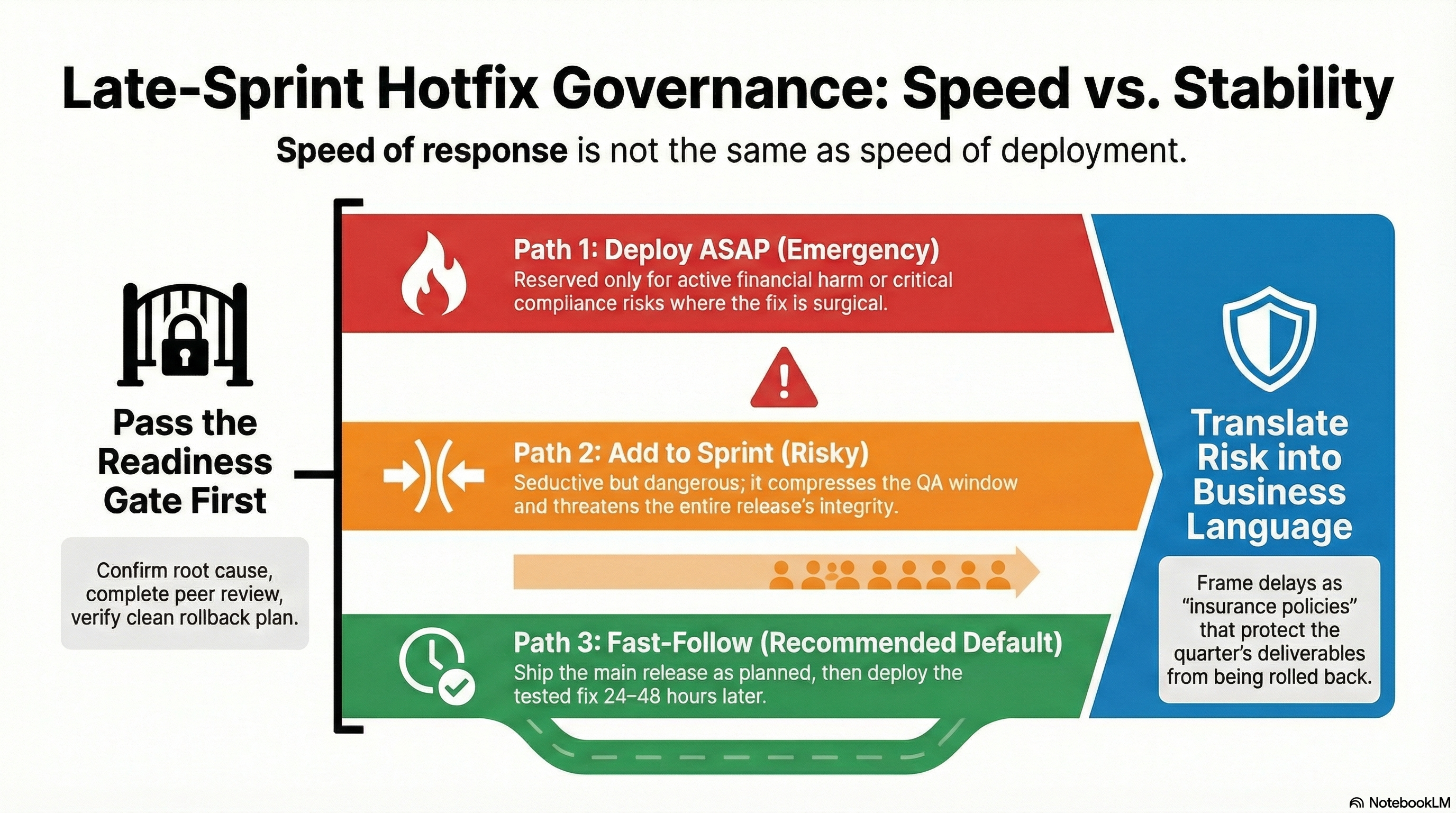

When the Pressure is On - Late Sprint Hotfix Governance

Late-sprint defects create intense pressure to rush fixes into production. But speed of response isn't the same as speed of deployment. This article explores a governance framework that balances customer impact, release stability, and team sanity. It names the forces at play and introduces the gates that make late-sprint hotfix decisions defensible, repeatable, and rational.

Leadership Philosophy Series — 6 articles

- The Power of Lifelong Learning

- AI and Critical Thinking in Software Development

- Sidetracked by Sizzle: Staying Focused on True Value

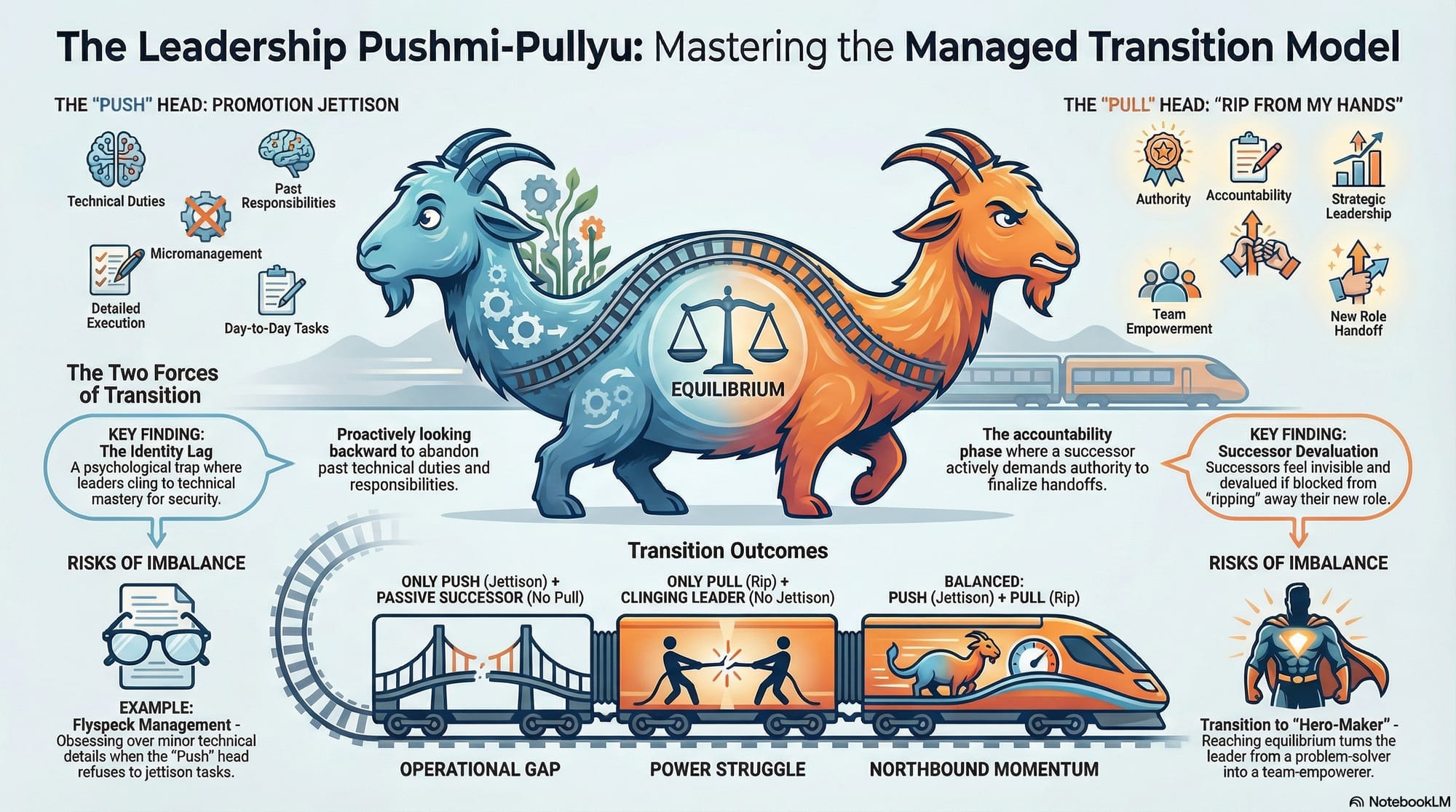

- The Managed Transition Model: Leadership Promotion as Power Exchange

- When the Pressure is On - Late Sprint Hotfix Governance

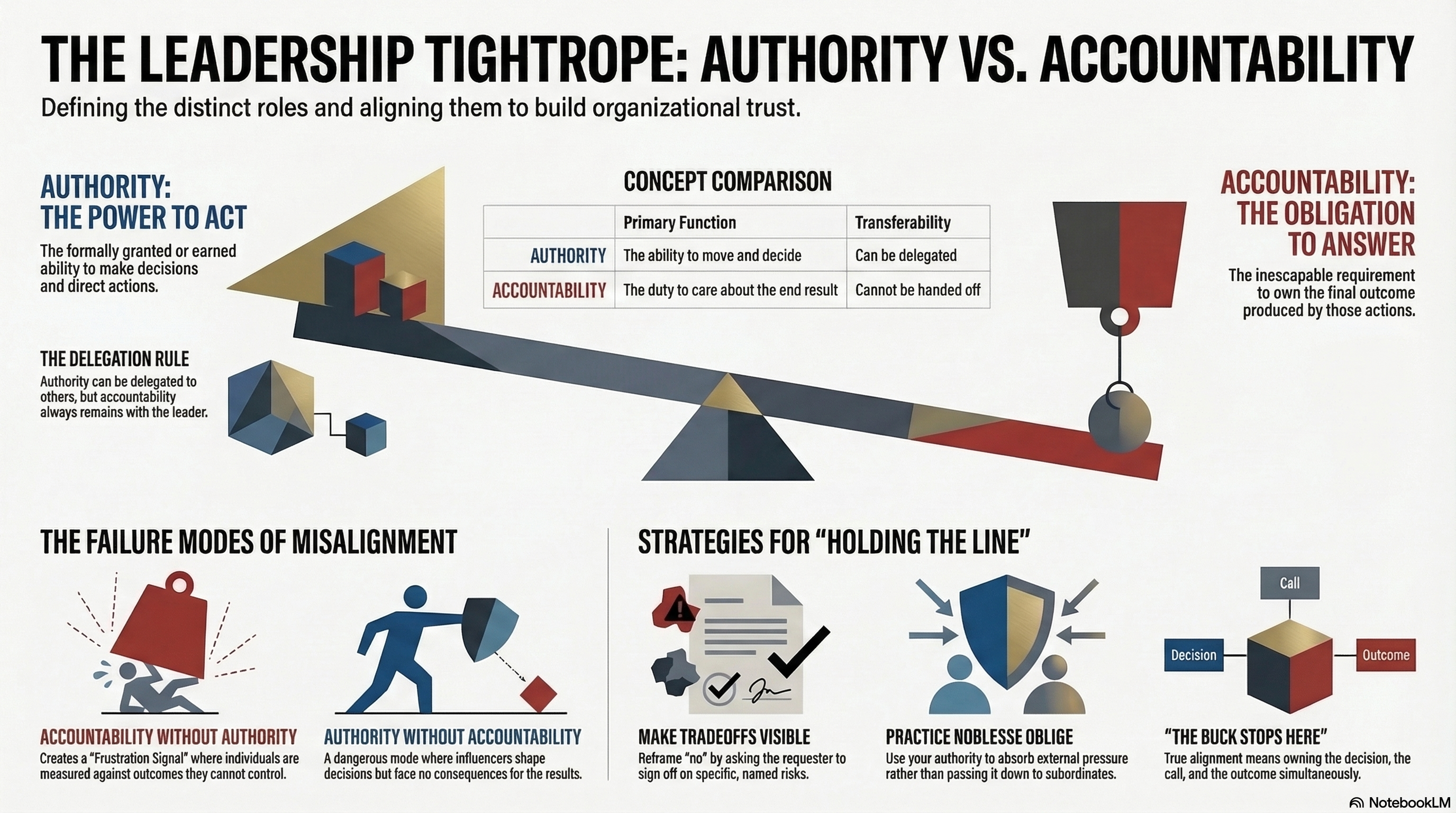

- Accountability and Authority: Walking the Tightrope

The Friday Afternoon Scenario

It's 3:47 PM on a Friday. Your sprint ends Monday. You've got a release scheduled for Tuesday morning. And Dave, one of your senior engineers, just posted in Slack: "Found a bug in the payment confirmation flow. Customers are seeing stale order totals after applying a discount code. I can fix this in 20 minutes."

The product owner sees the message immediately. "This is customer-facing. We need to fix it before Tuesday." The customer success lead chimes in: "We've had four tickets on this today." Your VP of Sales forwards a screenshot from a key account: "They're asking if we're aware."

And just like that, at 3:47 PM on a Friday, you're making a decision that could rescue Tuesday's release—or blow it up entirely.

I've been on both sides of this exact scenario—as the Dave who was confident I could fix it quickly, and as the leader who had to decide whether to let that fix anywhere near our release train. What I've learned is that the speed of response is not the same as the speed of deployment. And understanding that distinction changes everything.

The Invisible Forces Pulling at Dave's Fix

Before we follow Dave's fix through the decision framework, it's worth naming the forces already pulling on this situation—because they're pulling on everyone in that Slack channel, whether they realize it or not.

Customer Impact — Real people are seeing wrong totals right now. Support tickets are climbing. This creates emotional pressure that's genuinely hard to ignore, and it should be hard to ignore. Customers matter.

Engineering Confidence — Dave has been in this codebase for two years. He knows the discount calculation path cold. He genuinely believes 20 minutes is enough. And he might be right. But "can fix it" and "should deploy it right now" are two very different propositions.

Release Momentum — Your team has spent two weeks building toward Tuesday. QA has signed off on 14 features. Stakeholders are expecting delivery. A last-minute injection threatens all of that careful work.

Business Optics — Nobody in that Slack channel wants to be the person who "blocked the fix that would have saved the customer." The fear of looking obstructionist can override rational risk assessment faster than you'd think.

Team Fatigue — It's Friday afternoon. The team has been pushing hard all sprint. Adding emergency hotfix pressure on top of normal sprint crunch is exactly when mistakes happen.

Every one of these forces is legitimate. None of them are wrong. But they can't all win simultaneously. So you need a framework that makes the tradeoffs explicit rather than emotional—and you need it before 4 PM, because Dave is already opening his IDE.

Phase 1: The Fix Readiness Gate (Does Dave Actually Have Something Deployable?)

Here's the first thing I'd say to Dave: "I appreciate you jumping on this. Before we talk about when to deploy, let's make sure we have something ready to deploy."

This is the step teams skip under pressure. Dave says "I can fix this," and everyone immediately jumps to "when can we release it?" without validating that the fix is actually safe, understood, and reversible. The most common anti-pattern I've seen in this phase is skipping root cause analysis—the instinct to say "We'll fix it now and figure out why later." This almost always leads to incomplete fixes or the same bug resurfacing next sprint.

So at 4:15 PM, when Dave pushes his commit, I'd walk through the readiness gate with him:

"Dave, have we confirmed the root cause?" It's a discount calculation caching issue. The stale total persists when a promo code is applied after the cart is already rendered. Dave traced it to a missing cache invalidation call. Good—that's a confirmed root cause, not just symptom suppression.

"Has someone else reviewed the code?" Dave pulls in Maria for a quick review. She spots that the fix is a single function call addition in the cart service. Isolated. Clean. No side effects she can see.

"What's the change scope? Does this touch anything beyond the discount path?" Dave walks through it: one file changed, one function modified, no shared state mutations. The blast radius is small.

"Are the tests passing?" Unit tests green. The integration suite covers the discount flow. Dave adds a regression test for the specific stale-total scenario. All passing.

"What's the rollback plan if this goes sideways?" This is critical. If Dave can't answer this question clearly, the gate stays closed—period. Fortunately, the change is additive. Reverting the commit restores the previous behavior. The rollback is clean.

"Do we have a build artifact?" Dave's change is through CI. A deployable package exists. It's not "code on someone's laptop."

"How will we know this is working in production?" Dave confirms the cart service emits telemetry on discount applications. They can monitor for stale-total errors in real time.

Every box checked. Dave's fix passes the readiness gate at 4:45 PM. Now—and only now—we have a release timing discussion.
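To make the root cause and the regression test from that walkthrough concrete, here is a minimal sketch of the bug class described: a cart service that caches the rendered total and never invalidates it when a promo code is applied afterward. Everything in it is hypothetical, including the `CartService` class, its method names, and the flat percentage discount. The article does not show Dave's actual code, only that the fix was one added invalidation call plus a regression test.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CartService:
    """Hypothetical cart service that caches the displayed order total."""
    items: dict[str, float] = field(default_factory=dict)  # item name -> price
    discount_rate: float = 0.0
    _cached_total: Optional[float] = field(default=None, init=False, repr=False)

    def display_total(self) -> float:
        # Serve the cached total if present; otherwise compute and cache it.
        if self._cached_total is None:
            subtotal = sum(self.items.values())
            self._cached_total = round(subtotal * (1 - self.discount_rate), 2)
        return self._cached_total

    def _invalidate_total(self) -> None:
        self._cached_total = None

    def apply_promo(self, rate: float) -> None:
        self.discount_rate = rate
        # The fix: one added call. Without it, a total cached before the
        # promo was applied keeps being served (the stale-total bug).
        self._invalidate_total()


def test_total_refreshes_after_promo_applied_to_rendered_cart() -> None:
    """Regression test for the specific stale-total scenario."""
    cart = CartService(items={"widget": 50.0, "gadget": 50.0})
    assert cart.display_total() == 100.0   # cart rendered, total cached
    cart.apply_promo(0.10)                 # promo applied after render
    assert cart.display_total() == 90.0    # must not be the stale 100.0


if __name__ == "__main__":
    test_total_refreshes_after_promo_applied_to_rendered_cart()
    print("regression test passed")
```

If you delete the `self._invalidate_total()` line, the second assertion fails because the cached 100.0 is still served. That matches what customers were reporting: a stale total after applying a discount code.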

But here's the thing: if even one of those boxes had been unchecked, the conversation would have been different. Suppose Dave had said, "I think it's a caching issue but I'm not 100% sure." That would be the anti-pattern of shipping a partially understood fix. Hope is not a deployment strategy. The gate stays closed, and we keep investigating over the weekend.
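A single "no" keeps the gate closed, so the readiness gate works like an all-or-nothing checklist. Here is a minimal sketch that assumes each of the seven readiness questions is recorded as a yes/no answer on the work item. The `ReadinessGate` class and its field names are illustrative and do not come from any particular tool.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class ReadinessGate:
    """One yes/no answer per readiness question (hypothetical field names)."""
    root_cause_confirmed: bool
    peer_reviewed: bool
    change_scope_understood: bool
    tests_passing: bool
    rollback_plan_confirmed: bool
    build_artifact_exists: bool
    production_telemetry_available: bool

    def unchecked(self) -> list[str]:
        """Names of the questions that were not answered 'yes'."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_open(self) -> bool:
        # The gate opens only when every box is checked.
        return not self.unchecked()


# Dave's fix at 4:45 PM: every box checked.
daves_fix = ReadinessGate(*([True] * 7))
print(daves_fix.is_open())  # True

# The "not 100% sure it's caching" variant: one unchecked box closes the gate.
unsure = replace(daves_fix, root_cause_confirmed=False)
print(unsure.is_open(), unsure.unchecked())  # False ['root_cause_confirmed']
```

Returning the list of unchecked items, and not just a boolean, tells the team exactly what to keep investigating over the weekend.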

Phase 2: The Release Decision (What Happens to Dave's Fix?)

Dave's fix is ready. The question is no longer whether to release it, but how and when. There are three paths, and each carries a different risk profile.

Let's walk through them using Dave's actual situation.

Path 1: Deploy ASAP (Out-of-Band Hotfix)

This is the "break glass" option. You deploy Dave's fix tonight, outside the normal release cycle, and accept elevated risk in exchange for immediate customer relief.

Back to Dave's situation: Is the Friday defect causing active customer harm? Customers are seeing incorrect totals, yes—but are they being charged incorrectly? If the display is wrong but the actual charge is correct, this is a bad experience but not a financial harm. If customers are actually being overcharged or undercharged, that's a different severity level entirely.

Let's say Dave confirms: "The display is stale, but the actual charge is calculated correctly at checkout." That changes the calculus significantly. This is annoying, not catastrophic.

The specific danger with this path is the anti-pattern of releasing without rollback confidence. "We can't roll it back" is a terrifying sentence to hear at 11 PM on a Friday. Even when the fix looks surgical, out-of-band deployments carry more risk than teams initially believe. The pressure to act fast can make you miss edge cases that a normal QA cycle would catch.

When Deploy ASAP is the right call: Customers are experiencing real financial harm. Compliance exposure is escalating. The fix is surgical, well-understood, and you've confirmed rollback capability. This path requires sign-off from the engineering lead, acknowledgment from QA, risk acceptance from product and the business, and a confirmed rollback plan.

How to make the case to stakeholders: If the VP of Sales pushes back on waiting, frame it clearly: "Deploying tonight means we've validated this fix with one engineer's review and limited regression testing. If the fix introduces a new problem, we could be rolling back at 2 AM with a worse customer experience than we have now. Are we comfortable with that risk for a display issue?"

Path 2: Add to Sprint Release

This is the path that feels efficient but can be dangerously seductive. The logic goes: "We're releasing Tuesday anyway. Dave's fix is ready. Why not just include it?"

Back to Dave's situation: It's Friday evening. The sprint release is Tuesday. That gives QA roughly two working days to absorb an additional change into their regression testing. Can they?

Here's where a particular anti-pattern lurks: normalizing late-sprint injection. This path works occasionally for truly isolated changes. But if you find yourself slipping fixes into sprint releases every cycle, you're eroding release discipline. Each "just one more tiny thing" compresses the QA window and expands the regression surface. Eventually, the sprint release becomes a grab bag of last-minute additions that nobody has fully tested together.

When this path might work: The fix is truly isolated (Dave's single function call qualifies). You still have meaningful time for regression testing. The sprint release isn't already high-risk or overloaded. QA has capacity to absorb it.

Why it's risky even for Dave: It compresses the QA window when the team is already time-constrained. If Dave's fix introduces a subtle interaction with one of the 14 other features in the release, you can't separate it. The whole release is now suspect.

Approval threshold: This should be an explicit exception requiring sign-off from the QA lead, product owner, engineering lead, and release manager.
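The sign-off requirements for the two exception paths can be written down as data, so nobody has to remember them at 5 PM on a Friday. In this minimal sketch, the sign-off names come from the Path 1 and Path 2 descriptions. The `ReleasePath` enum and the `missing_approvals` helper are illustrative. The article lists no extra sign-offs for fast-follow, so the sketch assumes it runs through the normal release process.

```python
from enum import Enum


class ReleasePath(Enum):
    DEPLOY_ASAP = "out-of-band hotfix"
    ADD_TO_SPRINT = "add to sprint release"
    FAST_FOLLOW = "fast-follow hotfix release"


# Sign-offs and confirmations named for each exception path. Fast-follow is
# assumed to use the normal release process, so it adds no exception sign-offs.
REQUIRED_APPROVALS: dict[ReleasePath, frozenset[str]] = {
    ReleasePath.DEPLOY_ASAP: frozenset({
        "engineering lead", "QA acknowledgment",
        "product/business risk acceptance", "confirmed rollback plan",
    }),
    ReleasePath.ADD_TO_SPRINT: frozenset({
        "QA lead", "product owner", "engineering lead", "release manager",
    }),
    ReleasePath.FAST_FOLLOW: frozenset(),
}


def missing_approvals(path: ReleasePath, obtained: set[str]) -> set[str]:
    """Return the sign-offs still needed before this path may proceed."""
    return set(REQUIRED_APPROVALS[path] - obtained)


print(sorted(missing_approvals(ReleasePath.ADD_TO_SPRINT, {"QA lead", "product owner"})))
# ['engineering lead', 'release manager']
```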

How to make the case to stakeholders: When someone says "just slip it into Tuesday's release," respond with: "We can include it, but it compresses QA from two full days to one. Are you willing to sign off on reduced regression coverage for the entire release—all 14 features—to include this display fix?" That reframe puts the risk where it belongs: on the person requesting the shortcut.

Path 3: Fast-Follow Hotfix Release (The Recommended Default)

This is the path most teams resist because it feels slower. But for Dave's situation—a display bug with no financial impact—it's almost certainly the right call.

The model: Let Tuesday's sprint release go out as planned with its 14 tested features. Deploy Dave's fix as a separate, focused release on Wednesday or Thursday after it's had proper QA attention.

Back to Dave's situation: The stale discount display is annoying, but customers are being charged correctly. The Tuesday release contains two weeks of carefully tested work. Is it worth risking that entire release to spare customers 24 to 48 hours of a cosmetic issue?

When I frame it that way, the answer is almost always no.

Why this works:

- Protects the sprint release from last-minute destabilization

- Allows proper regression testing without time pressure

- Gives you a clean rollback boundary—if Dave's fix has a problem, it's completely separate from Tuesday's release

- Maintains the team's delivery predictability, which matters more than any single bug fix

What you're trading: Customer impact persists for an extra day or two. You need an additional deployment cycle. There's slight operational overhead.

Teams resist this approach initially because it feels like you're "not taking the problem seriously." But over time, as you build a track record of stable releases and fewer hotfix-induced outages, stakeholders start to trust the process.

How to make the case to stakeholders: Don't talk about "predictability." A VP of Sales hears that word and thinks you're being slow and rigid. Instead, say: "By waiting 24 hours for a fast-follow, we protect all 14 tested features in Tuesday's release from being rolled back because of a rushed patch. We're trading one day of delay on a display bug for the safety of the entire quarter's deliverables." Frame it as an insurance policy for their main asset, not an engineering preference.

What Happened to Dave?

So what actually happens at 5 PM on that Friday? Dave's fix passes the readiness gate. The team reviews the situation: a display-only bug with no financial impact, a clean fix, and a Tuesday release carrying 14 features.

The decision: fast-follow. Dave's fix goes into a separate branch. QA picks it up Monday morning with dedicated focus. Tuesday's release ships clean. Wednesday morning, Dave's fix deploys with full regression coverage. No drama, no 2 AM rollbacks, no weekend Slack emergencies.

Dave goes home at 5:30. The customers see the fix by Wednesday. And Tuesday's release—the one with two weeks of carefully tested work—ships without a single issue.

That's the quiet victory of good governance. It's not exciting. Nobody writes a war story about the Friday where nothing went wrong. But that's exactly the point.

Decision Heuristics (A Quick-Reference Lens)

When you're in the heat of the moment, here are the questions that cut through the noise:

Is there active customer harm happening right now? If yes and it's severe → lean toward Deploy ASAP. If it's a display or cosmetic issue → lean toward Fast-Follow.

Is this a compliance or financial exposure issue? If yes → Deploy ASAP is often justified. Business risk outweighs technical risk.

Does the fix touch multiple components or have unclear boundaries? If yes → Fast-Follow. Complexity equals regression risk. Don't let urgency override readiness.

Are we late in the hardening window already? If yes → Fast-Follow. You don't have time to properly test an expansion of scope.

Is QA capacity already strained? If yes → Fast-Follow. Don't overload your testing capacity at the worst possible time.

Is the planned release already carrying elevated risk? If yes → Fast-Follow. Don't compound risk on risk.

Is this a true one-line, isolated fix with no dependencies? If yes and you have high confidence → consider Deploy ASAP or Add to Sprint, but verify readiness gate first. Even one-line changes deserve the gate.
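The heuristics above can be read as a small decision function. The article does not give an order of precedence, so this sketch makes four assumptions about it:

- The readiness gate is checked first.
- Severe harm or compliance/financial exposure wins next, unless the fix has unclear boundaries.
- Any risk factor then pushes to fast-follow.
- A truly isolated one-line fix only makes Add to Sprint a candidate. Deploy ASAP stays reserved for the harm and exposure cases.

The `Situation` fields and the `recommend` function are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ReleasePath(Enum):
    DEPLOY_ASAP = "Deploy ASAP"
    ADD_TO_SPRINT = "Add to Sprint"
    FAST_FOLLOW = "Fast-Follow"


@dataclass(frozen=True)
class Situation:
    """Yes/no answers to the quick-reference questions (hypothetical fields)."""
    readiness_gate_passed: bool
    severe_active_harm: bool = False            # e.g. customers charged incorrectly
    compliance_or_financial_exposure: bool = False
    multi_component_or_unclear: bool = False
    late_in_hardening_window: bool = False
    qa_capacity_strained: bool = False
    release_already_risky: bool = False
    isolated_one_line_high_confidence: bool = False


def recommend(s: Situation) -> tuple[Optional[ReleasePath], str]:
    """Return (path, reason); path is None when nothing is deployable yet."""
    if not s.readiness_gate_passed:
        return None, "Gate closed: keep investigating before discussing timing."
    urgent = s.severe_active_harm or s.compliance_or_financial_exposure
    if urgent and not s.multi_component_or_unclear:
        return ReleasePath.DEPLOY_ASAP, "Business risk outweighs technical risk."
    if (s.multi_component_or_unclear or s.late_in_hardening_window
            or s.qa_capacity_strained or s.release_already_risky):
        return ReleasePath.FAST_FOLLOW, "A risk factor is present; don't compound risk."
    if s.isolated_one_line_high_confidence:
        return ReleasePath.ADD_TO_SPRINT, "Candidate only: still needs explicit sign-off."
    return ReleasePath.FAST_FOLLOW, "Fast-follow is the recommended default."


# Dave's Friday: gate passed, display-only bug, days before a Tuesday release.
path, reason = recommend(Situation(readiness_gate_passed=True,
                                   late_in_hardening_window=True))
print(path.value, "|", reason)
```

Dave's case lands on Fast-Follow because we treat the Friday before a Tuesday release as late in the hardening window. That is our reading of the scenario, not something the article states as a rule. Without that flag, the function falls through to the same default.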

What Good Governance Actually Looks Like

Governance isn't bureaucracy. It's making implicit tradeoffs explicit and defensible—so you can explain your reasoning to stakeholders, to your team, and to yourself at 2 AM if something goes sideways.

Before any hotfix path:

- Verify telemetry coverage (how will you know if it's working?)

- Confirm feature flags where available (can you limit blast radius?)

- Validate rollback procedure (do you know how to undo this?)

- Notify support teams (they need to be ready for deployment or continued impact)
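Feature flags limit blast radius by exposing a fix to a slice of users and letting you turn it off without a redeploy. One common technique is a percentage rollout with deterministic hash bucketing, sketched below. The sketch assumes no particular flag product; real flag services have their own APIs. The flag name `stale_total_fix` is hypothetical.

```python
import hashlib


def rollout_bucket(flag_name: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: int) -> bool:
    """True if this user falls inside the current rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return rollout_bucket(flag_name, user_id) < rollout_percent


def cart_total_code_path(user_id: str, rollout_percent: int) -> str:
    # Hypothetical call site: flagged users get the fixed path, everyone else
    # keeps the current behavior until the rollout percentage is raised.
    if is_enabled("stale_total_fix", user_id, rollout_percent):
        return "fixed"
    return "legacy"
```

A user's bucket never changes. Raising the percentage therefore only adds users, and dropping it to 0 acts as an immediate rollback without a deploy.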

After release (regardless of path):

- Mandatory monitoring window with defined success metrics

- Check error rate, performance metrics, customer support signals, business KPIs

- Document what happened and what you learned

- Review metrics monthly to identify patterns
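A monitoring window only has "defined success metrics" if the thresholds are written down before the deploy. This minimal sketch assumes each metric has a pre-release baseline and an agreed tolerance. The metric names, baselines, and tolerances are illustrative. Missing data counts as a failure, not a pass.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriterion:
    metric: str
    baseline: float
    tolerance_pct: float          # allowed drift from baseline, in percent
    lower_is_better: bool = True  # error rate, latency, tickets; False for KPIs like conversion


def evaluate_window(criteria: list[SuccessCriterion],
                    observed: dict[str, float]) -> list[str]:
    """Return human-readable failures; an empty list means the window passed."""
    failures: list[str] = []
    for c in criteria:
        value = observed.get(c.metric)
        if value is None:
            # No signal is not the same as a healthy signal.
            failures.append(f"{c.metric}: no data during monitoring window")
            continue
        if c.lower_is_better:
            limit = c.baseline * (1 + c.tolerance_pct / 100)
            if value > limit:
                failures.append(f"{c.metric}: {value} is above limit {limit:.2f}")
        else:
            floor = c.baseline * (1 - c.tolerance_pct / 100)
            if value < floor:
                failures.append(f"{c.metric}: {value} is below floor {floor:.2f}")
    return failures


criteria = [
    SuccessCriterion("error_rate_pct", baseline=0.5, tolerance_pct=20),
    SuccessCriterion("p95_latency_ms", baseline=200, tolerance_pct=10),
    SuccessCriterion("checkout_conversion_pct", baseline=3.0, tolerance_pct=10,
                     lower_is_better=False),
    SuccessCriterion("support_tickets_per_hour", baseline=1, tolerance_pct=100),
]
observed = {"error_rate_pct": 0.55, "p95_latency_ms": 250, "checkout_conversion_pct": 2.9}
for failure in evaluate_window(criteria, observed):
    print(failure)
```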

Metrics worth tracking:

- Hotfix frequency (trending up is a bad sign)

- Change failure rate (what % of hotfixes introduce new problems?)

- Mean time to restore (how fast do you recover from hotfix failures?)

- Percentage of releases with late scope changes (should be low)

- Post-release defect escape rate (how many bugs make it past your process?)

These metrics tell you whether your governance model is working or whether you're creating technical debt faster than you're paying it down.
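Most of these metrics fall out of two simple records: one per hotfix and one per release. This minimal sketch assumes you tag each hotfix with the path taken and whether it introduced a new problem. The record fields are hypothetical. The escape-rate formula (defects found after release divided by all defects found) is one common definition, not the only one. Hotfix frequency is simply the number of hotfix records per review period.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class HotfixRecord:
    path: str                                    # e.g. "deploy_asap", "add_to_sprint", "fast_follow"
    caused_new_problem: bool
    time_to_restore: Optional[timedelta] = None  # recorded only when it caused a problem


@dataclass(frozen=True)
class ReleaseRecord:
    had_late_scope_change: bool
    defects_caught_before_release: int
    defects_found_after_release: int


def change_failure_rate(hotfixes: list[HotfixRecord]) -> float:
    """Fraction of hotfixes that introduced a new problem."""
    if not hotfixes:
        return 0.0
    return sum(h.caused_new_problem for h in hotfixes) / len(hotfixes)


def mean_time_to_restore(hotfixes: list[HotfixRecord]) -> Optional[timedelta]:
    """Average recovery time across the hotfixes that failed."""
    durations = [h.time_to_restore for h in hotfixes
                 if h.caused_new_problem and h.time_to_restore is not None]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


def late_scope_change_rate(releases: list[ReleaseRecord]) -> float:
    """Fraction of releases that took on scope late."""
    if not releases:
        return 0.0
    return sum(r.had_late_scope_change for r in releases) / len(releases)


def defect_escape_rate(releases: list[ReleaseRecord]) -> float:
    """Defects found after release as a fraction of all defects found."""
    escaped = sum(r.defects_found_after_release for r in releases)
    total = escaped + sum(r.defects_caught_before_release for r in releases)
    return escaped / total if total else 0.0


hotfixes = [
    HotfixRecord("fast_follow", False),
    HotfixRecord("deploy_asap", True, timedelta(hours=2)),
    HotfixRecord("add_to_sprint", True, timedelta(hours=4)),
    HotfixRecord("fast_follow", False),
]
print(change_failure_rate(hotfixes))   # 0.5
print(mean_time_to_restore(hotfixes))  # 3:00:00
```

Recording the path on each hotfix lets the monthly review break change failure rate down by path. That is the data you need to show stakeholders whether fast-follow is actually paying off.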

The Maturity Curve (and How to Actually Level Up)

Teams evolve through predictable stages with hotfix governance. But knowing which stage you're in is only half the value—the other half is knowing what specific habit gets you to the next one.

Reactive — Rush every fix directly to production, firefight constantly, high failure rate.

Transition trigger to Managed: Implement the readiness gate as a simple checklist. Don't worry about release paths or decision frameworks yet. Just stop deploying code that hasn't been reviewed. Tape the checklist to the wall if you have to. Even if the release is late, fill out the checklist first. That single habit breaks the cycle of blind rushing.

Managed — Basic triage exists, but inconsistent application. Still lots of late-sprint scrambles.

Transition trigger to Disciplined: Exercise the power to say no. You graduate to Disciplined when you first refuse a release that fails the readiness gate. That first refusal is terrifying—stakeholders will push back, the team might be frustrated. But it proves the system works. One "no" is worth more than a hundred checklists.

Disciplined — Fix readiness gate enforced, release decisions follow the framework, exceptions are rare.

Transition trigger to Predictable: Make fast-follow the mandatory default for one month. Every non-critical late-sprint bug goes through fast-follow, no exceptions. This builds the muscle memory of waiting, demonstrates to stakeholders that the sky doesn't fall, and generates data showing that your release stability improves.

Predictable — Fast-follow is the default, release train stability is high, stakeholders trust the process.

Transition trigger to Optimized: Invest in feature flags and continuous delivery infrastructure. When you can deploy any time with confidence and limit blast radius through flags, the entire concept of a "late-sprint hotfix" starts to dissolve. The emergency goes away because deployment is no longer an event.

Optimized — Continuous delivery practices and feature flags reduce the need for hotfixes altogether.

Most teams I've worked with start at Reactive or Managed. Getting to Disciplined requires consistent leadership and willingness to absorb short-term pain—telling stakeholders "not yet" so that Tuesday's release ships clean. But each transition is achievable. You don't have to fix everything at once. Focus on one habit, prove it works, and build from there.

What Leadership Actually Means Here

If you're in a position to influence these decisions, your job is to protect the team from both external pressure and their own optimism.

Navigating external pressure: Stakeholders will push for speed. That's their job—they care about customer impact. Your job is to translate technical risk into business language they can act on. Don't say "we need more regression testing time." Say: "We can deploy this now with one engineer's review, which gives us maybe 80% confidence. Or we can deploy Wednesday after full regression, which gives us 99% confidence. The difference is whether we're risking Tuesday's entire release—your quarter's deliverables—for a 24-hour head start on a display bug."

Navigating internal optimism: Engineers are problem-solvers by nature. They want to help. Dave genuinely believed he could fix it safely in 20 minutes—and he was right about the fix. But the fix isn't the whole story. Your job is to ask the questions the team hasn't considered yet: "What else could this break? How will we know? What's the rollback plan? Is anyone willing to be on call tonight if this goes wrong?"

The hardest part of this role is sometimes saying "not yet" when everyone—including yourself—wants to say "yes." But mature teams move fast to understand, and deliberately to release.

Putting It Into Practice

If you want to operationalize this in your team:

- Publish a decision framework — Make it visible in your team wiki or documentation. Print it if you have to. It needs to be accessible at 3:47 PM on a Friday without searching for it.

- Add a readiness checklist to work items — Make the gate explicit, not something people have to remember.

- Train your triage participants — Product owners, QA leads, engineering leads all need to understand the model—and the business scripts for negotiating each path.

- Tag and track hotfix releases — You can't improve what you don't measure. Track which path you take and what the outcome was.

- Review metrics monthly — Are you getting better or worse? Is hotfix frequency trending down?

- Reinforce exception discipline — Late sprint injection should feel rare and uncomfortable, not routine. If it's routine, your planning process needs work.

The first few times you apply this framework, it will feel slow. But over time, as you build muscle memory and see the reduction in release failures, it becomes second nature. And the first time you ship a clean Tuesday release while calmly deploying a focused fix on Wednesday, you'll understand why the framework exists.

Final Thought

Late-sprint defects are inevitable. Pressure to rush is inevitable. What's not inevitable is letting that pressure dictate your decisions.

Dave found the bug at 3:47 PM on a Friday. By 5 PM, the team had validated the fix, assessed the risk, and made a deliberate choice. No drama. No weekend firefight. No broken release.

That's not being slow. That's being deliberate. And deliberate teams ship faster in the long run because they spend less time cleaning up the messes created by rushed decisions.

So next time it's 3:47 PM on a Friday and someone says "I can fix this in 20 minutes"—take them seriously. Validate the fix. Walk through the gate. And then decide which risk you're actually willing to take.

Further Reading

- Accountability and Authority: Walking the Tightrope — the hotfix decision is ultimately an authority question: who owns it, and who's accountable for the outcome?

- Evolution over Revolution: A Pragmatic Approach — the same instinct that drives reckless revolution drives the 20-minute hotfix that bypasses the gate

- From Features to Outcomes: Keeping Your Eye on the Prize — what outcome are you actually protecting? The answer shapes which risk is worth taking

- The Managed Transition Model: Leadership Promotion as Power Exchange — pressure decisions reveal how clearly authority has been transferred in new leadership roles

Explore More

- Accountability and Authority: Walking the Tightrope -- A continuation of Dave's story — what happened Monday morning

- Sidetracked by Sizzle: Staying Focused on True Value -- A Personal Reminder to Stay Anchored to What Actually Matters

- The Power of Lifelong Learning -- The Ongoing Choice to Stay Curious and Capable

- Evolution over Revolution: A Pragmatic Approach -- What the caching bug was really telling Dave — and the whiteboard conver

- AI and Critical Thinking in Software Development -- What AI Handles and What Humans Must Still Own