Understanding System Cache: A Comprehensive Guide

System cache is crucial for speeding up processes and improving system performance. This guide explores its types, functionality, and benefits, along with management tips.

Development Series — 23 articles

- Mastering Git Repository Organization

- CancellationToken for Async Programming

- Git Flow Rethink: When Process Stops Paying Rent

- Understanding System Cache: A Comprehensive Guide

- Guide to Redis Local Instance Setup

- Fire and Forget for Enhanced Performance

- Building Resilient .NET Applications with Polly

- The Singleton Advantage: Managing Configurations in .NET

- Troubleshooting and Rebuilding My JS-Dev-Env Project

- Decorator Design Pattern - Adding Telemetry to HttpClient

- Generate Wiki Documentation from Your Code Repository

- TaskListProcessor - Enterprise Async Orchestration for .NET

- Architecting Agentic Services in .NET 9: Semantic Kernel

- NuGet Packages: Benefits and Challenges

- My Journey as a NuGet Gallery Developer and Educator

- Harnessing the Power of Caching in ASP.NET

- The Building of React-native-web-start

- TailwindSpark: Ignite Your Web Development

- Creating a PHP Website with ChatGPT

- Evolving PHP Development

- Modernizing Client Libraries in a .NET 4.8 Framework Application

- Building Git Spark: My First npm Package Journey

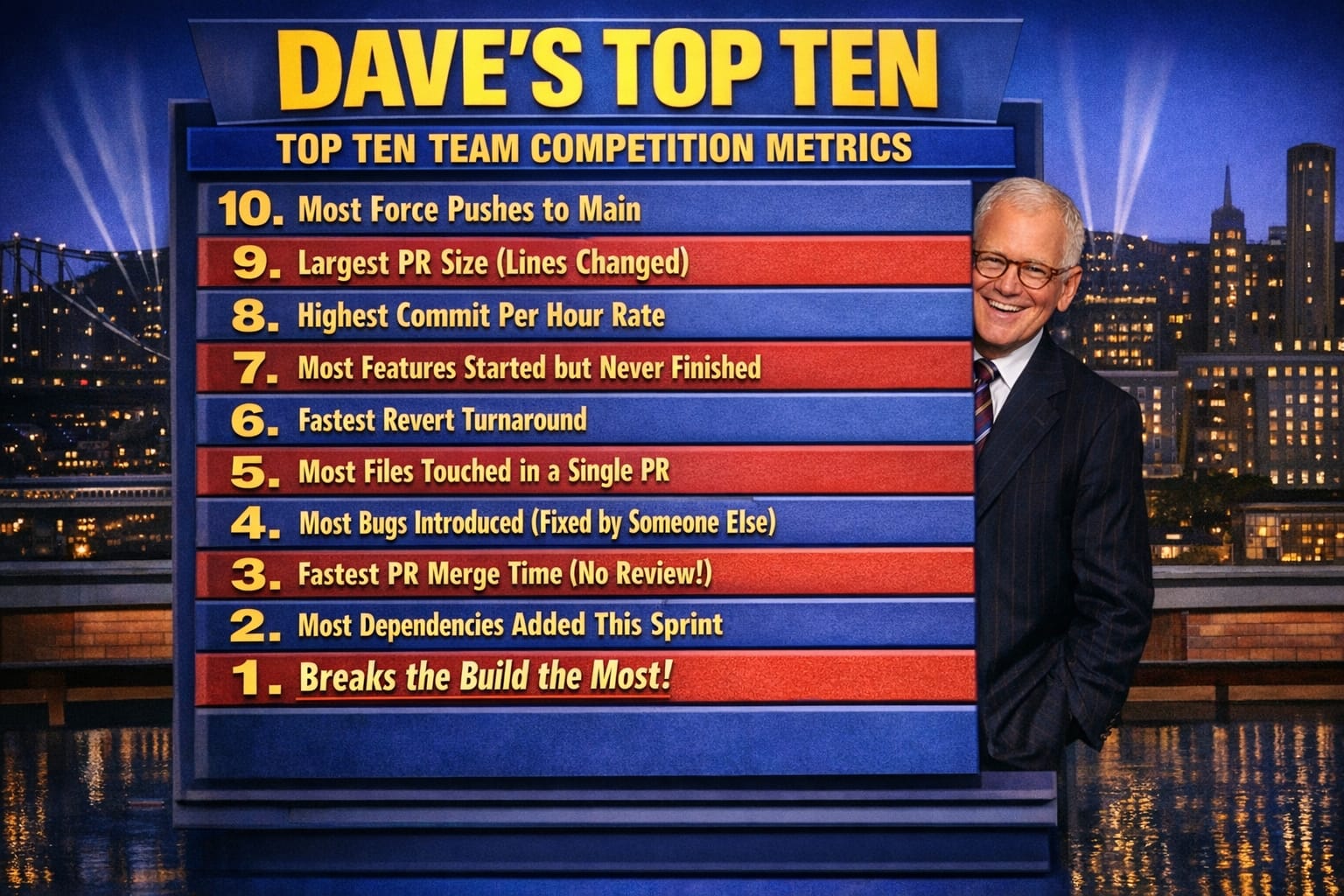

- Dave's Top Ten: Git Stats You Should Never Track

Understanding System Cache: A Comprehensive Guide

What is System Cache?

I watched a cache coherency bug take down an entire microservice cluster before anyone realised the L3 eviction policy was silently dropping the wrong entries. The hardware was fine. The code was fine. We were just evicting the wrong data at the wrong moment, and the result looked like random corruption under load. That experience taught me more about system cache than any documentation ever did — specifically, that cache is not a passive performance win. It is a contract you sign with the hardware, and the penalty clauses only appear under pressure.

System cache is a layer of high-speed memory that stores copies of frequently accessed data so the processor or application does not have to fetch it from a slower source on every request. When a request arrives, the system checks the cache first. A cache hit returns the data immediately. A cache miss falls back to main memory or disk, then stores a copy for next time. That basic mechanism is simple. What is not simple is what happens when you have multiple layers of cache operating simultaneously, each with its own eviction policy and coherency rules.

Types of System Cache

In my experience, the three you actually need to understand are CPU, disk, and web cache, and most performance disasters happen at the boundaries between them:

- CPU Cache: A small, volatile memory layer on or next to the processor that gives it high-speed access to data. It is divided into levels (L1, L2, and L3), with L1 the fastest and smallest and L3 the largest and slowest, typically shared across cores. I've spent hours debugging subtle race conditions in L3 cache invalidation that looked like random data corruption until I understood the eviction policy. When L1 misses spike, you feel it immediately in latency; when L3 coherency breaks down across cores, you feel it in ways that are much harder to trace.

- Disk Cache: Stores data that is frequently read from or written to the disk, improving the speed of data retrieval and storage operations. On a recent project, a disk cache misconfiguration meant we were caching cold data aggressively while hot data kept falling out — the throughput numbers looked fine until we measured actual read latency under concurrent load.

- Web Cache: Used by browsers and CDN layers to store web pages, images, and other media, reducing bandwidth usage and load times on subsequent visits. The coherency problem here is different — stale content served from a CDN edge node can be invisible until a user reports seeing outdated data.

How System Cache Works

System cache works by storing copies of frequently accessed data in a location that can be accessed more quickly than the original source. When a request for data is made, the system first checks the cache. If the data is found (a cache hit), it is retrieved from the cache, saving time. If not (a cache miss), the data is retrieved from the main memory or disk, and a copy is stored in the cache for future requests.
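The hit-and-miss flow described above can be sketched in a few lines. This is a minimal illustration, not how any particular hardware or library cache is implemented: `ReadThroughCache` and `slow_square` are hypothetical names, and `slow_square` simply stands in for whatever slower source sits behind the cache.

```python
from typing import Any, Callable, Dict, Hashable


class ReadThroughCache:
    """Check the cache first; on a miss, fetch from the slow source and keep a copy."""

    def __init__(self, fetch: Callable[[Hashable], Any]) -> None:
        self._fetch = fetch                    # hypothetical slow source (memory, disk, database)
        self._store: Dict[Hashable, Any] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: Hashable) -> Any:
        if key in self._store:                 # cache hit: return the stored copy
            self.hits += 1
            return self._store[key]
        self.misses += 1                       # cache miss: fall back to the slower source
        value = self._fetch(key)
        self._store[key] = value               # keep a copy for the next request
        return value


def slow_square(n: int) -> int:
    # Stand-in for an expensive lookup; purely illustrative.
    return n * n


cache = ReadThroughCache(slow_square)
results = [cache.get(k) for k in (3, 4, 3, 3, 4)]
print(results, cache.hits, cache.misses)
```

Five requests over two distinct keys produce two misses (the first time each key is seen) and three hits. Note that this sketch never evicts or invalidates anything, which is exactly the part that gets hard in real systems.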

What I've found is that this description makes caching sound frictionless. In practice, the moment you have multiple caches operating in layers — L1, L2, L3, disk, CDN, application-level — you inherit a coherency problem that can quietly corrupt state. Every layer that holds a copy of your data is a layer that can hold a stale copy.
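Here is a small sketch of how a second layer ends up holding a stale copy. Everything in it is an assumption made for illustration: the two dictionaries stand in for an outer layer (say, a CDN edge) and an inner application cache, and `read` and `write` are hypothetical helpers, not a real API.

```python
# The slow source of truth, e.g. a database row (hypothetical data).
source = {"price": 100}

# Two cache layers that each keep their own copy.
edge_cache = {}   # stands in for a CDN or other outer layer
app_cache = {}    # stands in for an application-level layer


def read(key):
    """Read through both layers, filling each one on the way back."""
    if key in edge_cache:
        return edge_cache[key]
    if key not in app_cache:
        app_cache[key] = source[key]
    edge_cache[key] = app_cache[key]
    return edge_cache[key]


def write(key, value, invalidate_edge):
    """Update the source and the inner layer; optionally invalidate the outer one."""
    source[key] = value
    app_cache[key] = value
    if invalidate_edge:
        edge_cache.pop(key, None)


first = read("price")                        # fills both layers with 100
write("price", 120, invalidate_edge=False)   # the outer layer is forgotten
stale = read("price")                        # still served from edge_cache
write("price", 120, invalidate_edge=True)    # invalidate every layer that holds a copy
fresh = read("price")
print(first, stale, fresh)
```

The second read returns the old value even though the source and the inner cache are both correct. Nothing errors and nothing logs; the only symptom is wrong data, which is why these bugs are so hard to spot.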

The Cache Bargain

Cache is not a free lunch, and I've watched teams treat it as one until something breaks. Here is what you actually get and what you pay:

What you gain: Reduced data access latency is real and measurable. CPU cache alone can make the difference between a tight loop running in nanoseconds versus microseconds. Disk cache reduces I/O pressure on storage hardware. Application-level cache can absorb enormous database read load. These are genuine performance wins.

What you pay: Every byte of cache is a byte you are not using for application memory. Cache adds implementation and operational complexity — you now have to reason about invalidation, eviction policies, and coherency across every layer. Phil Karlton's observation that there are only two hard things in computer science — cache invalidation and naming things — is funny because it is accurate. In practice, invalidation bugs are among the most subtle production failures I've encountered: the system appears healthy, the data looks right most of the time, and the corruption only surfaces under specific access patterns or load conditions.

The efficiency gain from caching also has a ceiling. A cache sized too small thrashes constantly, spending more time on eviction and repopulation than it saves on retrieval. A cache sized too large eats memory that your application needs for actual work. Neither extreme shows up cleanly in high-level monitoring until the problem is already serious.
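To make the sizing ceiling concrete, here is a rough simulation that replays an access trace against an LRU cache at three capacities. LRU is my choice for the sketch, not a claim about any specific system's eviction policy, and the looping workload is a hypothetical worst case picked because it makes the thrashing obvious.

```python
from collections import OrderedDict


def lru_hit_ratio(capacity: int, accesses: list) -> float:
    """Replay an access trace against an LRU cache and return the hit ratio."""
    cache = OrderedDict()
    hits = 0
    for key in accesses:
        if key in cache:
            hits += 1
            cache.move_to_end(key)          # mark as most recently used
        else:
            cache[key] = True
            if len(cache) > capacity:
                cache.popitem(last=False)   # evict the least recently used key
    return hits / len(accesses)


# Hypothetical workload: loop over a working set of 5 keys, 20 times.
trace = [k for _ in range(20) for k in range(5)]

too_small = lru_hit_ratio(4, trace)    # one slot short of the working set
just_right = lru_hit_ratio(5, trace)   # the working set fits
oversized = lru_hit_ratio(50, trace)   # extra memory buys nothing
print(too_small, just_right, oversized)
```

With a capacity one slot short of the working set, every access evicts the key that will be needed next, so the hit ratio collapses to zero. Once the working set fits, the only misses are the first five cold ones, and a tenfold larger cache does not improve on that.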

Managing System Cache

The trick I've learned is that cache management is not about blindly clearing or resizing — it's about understanding your hit ratio, eviction latency, and coherency cost.

Static cache-clear intervals backfire in my experience. I've seen scheduled cache flushes that ran every hour cause predictable latency spikes as the system repopulated from cold state, while doing nothing to address the actual problem, which was stale entries from a misconfigured TTL. What works instead:

- Monitor hit ratio continuously: A hit ratio above 80% generally indicates the cache is sized appropriately for the working set. When it drops below that threshold, the question is whether the working set has grown or whether you are caching the wrong data.

- Measure eviction cost, not just eviction count: High eviction counts with low eviction latency are usually fine. High eviction latency means your eviction policy is doing expensive work — coherency flushes, write-back operations — that will show up in application response times.

- Watch for L1 miss spikes in CPU-bound workloads: When L1 misses spike, it almost always means a hot data structure has grown beyond the cache's capacity, or a new access pattern is defeating spatial locality. We use perf counters for this, not guesswork.

- Adjust cache size based on observed miss cost, not headroom: The question is not "do I have memory available?" but "what is a cache miss costing me in latency, and is that cost worth the memory I would use to reduce it?"
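The miss-cost question in that last point comes down to simple arithmetic. The sketch below uses made-up latencies (1 µs for a hit, 100 µs for a miss) and hypothetical helper names; substitute numbers measured from your own system.

```python
def hit_ratio(hits: int, misses: int) -> float:
    """Fraction of requests served from cache; 0.0 when there is no traffic yet."""
    total = hits + misses
    return hits / total if total else 0.0


def average_latency_us(ratio: float, hit_us: float, miss_us: float) -> float:
    """Expected latency per request, weighting each path by how often it is taken."""
    return ratio * hit_us + (1.0 - ratio) * miss_us


# Hypothetical measurements, in microseconds.
hit_us, miss_us = 1.0, 100.0

current = hit_ratio(hits=800, misses=200)    # 80% hit ratio
improved = hit_ratio(hits=900, misses=100)   # 90% hit ratio after a resize

before = average_latency_us(current, hit_us, miss_us)
after = average_latency_us(improved, hit_us, miss_us)
print(round(before, 2), round(after, 2))
```

With these assumed numbers, moving from an 80% to a 90% hit ratio roughly halves the average latency, because the miss path dominates the total. Whether that is worth the extra memory depends entirely on what a miss really costs in your system, which is why it has to be measured.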

Cache configuration changes should be treated like any other infrastructure change — tested against realistic load, measured before and after, and rolled back if the hit ratio or latency profile does not improve.
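Going back to the hourly-flush story: the alternative to flushing everything on a schedule is to let each entry expire on its own. This is a minimal sketch of per-entry TTL expiry, under my own assumptions. `TTLCache` is a hypothetical class rather than a library API, and the clock is injectable only so the behaviour can be shown without sleeping.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Expire entries individually instead of flushing the whole cache on a schedule."""

    def __init__(self, fetch: Callable[[Hashable], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock            # injectable so expiry can be demonstrated
        self._store: Dict[Hashable, Tuple[float, Any]] = {}

    def get(self, key: Hashable) -> Any:
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]            # still fresh
        value = self._fetch(key)       # missing or expired: refetch just this key
        self._store[key] = (now + self._ttl, value)
        return value


# Demo with a fake clock and a hypothetical backing store.
fake_now = [0.0]
backing = {"config": "v1"}
fetch_count = [0]


def fetch(key):
    fetch_count[0] += 1
    return backing[key]


cache = TTLCache(fetch, ttl_seconds=30, clock=lambda: fake_now[0])
a = cache.get("config")    # miss: fetches "v1"
backing["config"] = "v2"
fake_now[0] = 10.0
b = cache.get("config")    # within the TTL: still the cached "v1"
fake_now[0] = 31.0
c = cache.get("config")    # expired: refetches and sees "v2"
print(a, b, c, fetch_count[0])
```

Only the expired key is refetched, so there is no cold-start spike across the whole cache. The TTL value still matters: the middle read shows the staleness window you accept in exchange, and a TTL set too long reproduces the stale-entry problem.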

Conclusion

System cache plays a vital role in modern computing, providing faster access to data and improving overall system efficiency. The hard part is not understanding what cache is. It is accepting that every performance win from caching comes with a hidden coherency or memory cost you will have to pay eventually. The teams I've seen get this right are the ones who instrument their cache behavior from the start, treat coherency failures as expected rather than surprising, and resist the temptation to add another caching layer before understanding the ones they already have.
