Building Resilient .NET Applications with Polly

Network communication is inherently unreliable — timeouts, transient faults, downstream services that hiccup at exactly the wrong moment. Polly with HttpClient turns retries, timeouts, and circuit breakers from one-off code into a composable resilience pattern.

Development Series — 23 articles

- Mastering Git Repository Organization

- CancellationToken for Async Programming

- Git Flow Rethink: When Process Stops Paying Rent

- Understanding System Cache: A Comprehensive Guide

- Guide to Redis Local Instance Setup

- Fire and Forget for Enhanced Performance

- Building Resilient .NET Applications with Polly

- The Singleton Advantage: Managing Configurations in .NET

- Troubleshooting and Rebuilding My JS-Dev-Env Project

- Decorator Design Pattern - Adding Telemetry to HttpClient

- Generate Wiki Documentation from Your Code Repository

- TaskListProcessor - Enterprise Async Orchestration for .NET

- Architecting Agentic Services in .NET 9: Semantic Kernel

- NuGet Packages: Benefits and Challenges

- My Journey as a NuGet Gallery Developer and Educator

- Harnessing the Power of Caching in ASP.NET

- The Building of React-native-web-start

- TailwindSpark: Ignite Your Web Development

- Creating a PHP Website with ChatGPT

- Evolving PHP Development

- Modernizing Client Libraries in a .NET 4.8 Framework Application

- Building Git Spark: My First npm Package Journey

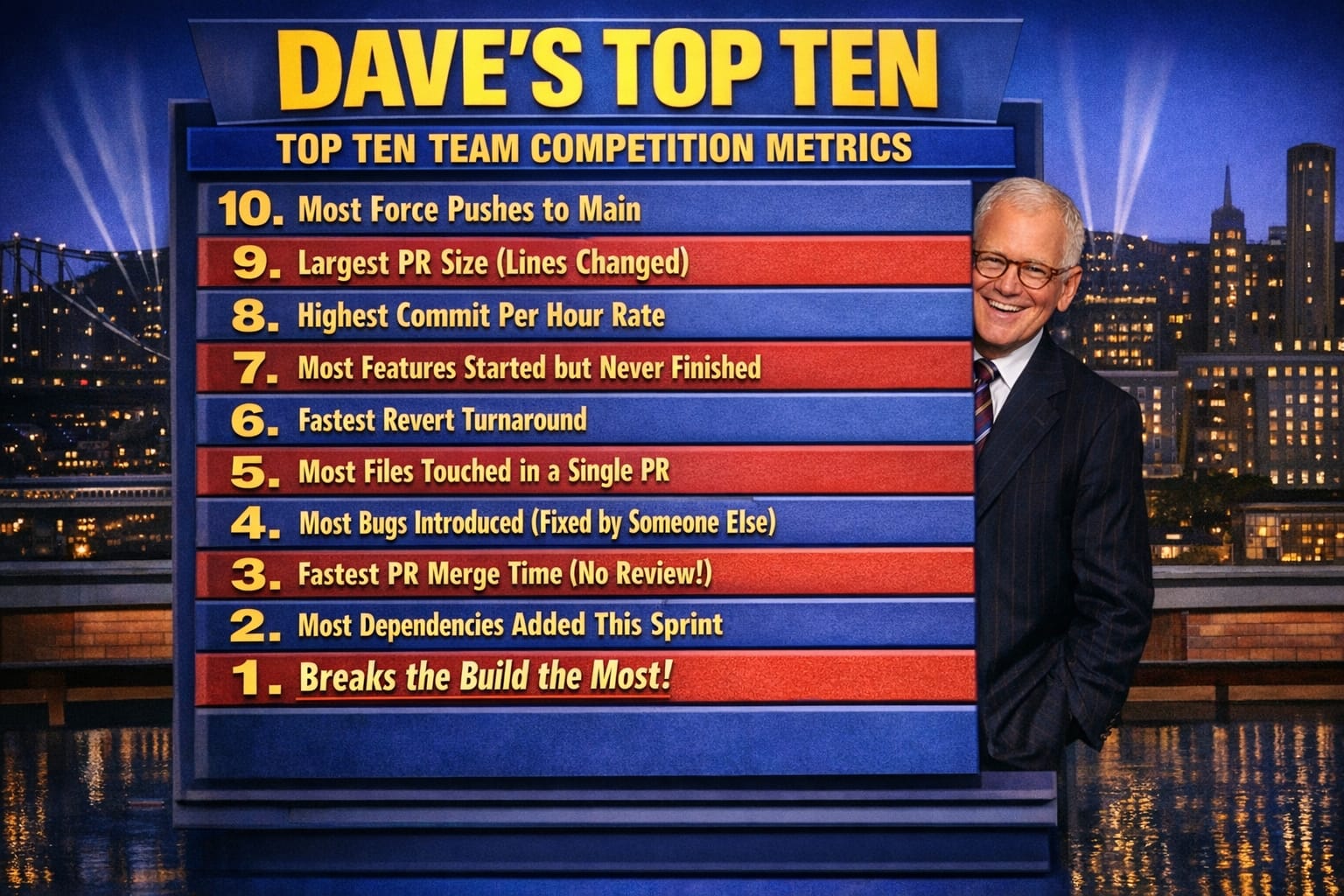

- Dave's Top Ten: Git Stats You Should Never Track

Building Resilient .NET Applications with Polly

Introduction

On a recent project, I watched a critical API integration fail silently during peak traffic. The service kept hammering a failing endpoint instead of backing off, and the repeated failures cascaded through the system until other services that depended on the same infrastructure started timing out too. That's when I understood why a circuit breaker isn't optional in a distributed system — it's the difference between a contained failure and a full outage.

When I started using Polly with HttpClient, I discovered that stacking policies requires careful thought. A circuit breaker that opens too quickly can mask the real issue a retry would have fixed. I've learned to apply Polly in three ways that have solved real problems in my systems: retries with exponential backoff, circuit breaking to prevent cascade failures, and timeouts to kill hung requests before they consume thread pool resources.

What is Polly?

Polly is a .NET resilience library that lets you define policies — retry, circuit breaker, timeout, bulkhead isolation, and fallback — and wrap your operations in them. What I've found useful about Polly is that the policy definitions are composable and testable independently of the actual HTTP calls, which matters when you're trying to verify behavior in unit tests without spinning up a real server.
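To illustrate the point about testing policies without a server, here is a minimal sketch that runs a retry policy against an in-memory delegate. It assumes the Polly v7-style Policy API used throughout this article; the failing delegate and the zero-length delay are hypothetical choices made only to keep the check fast.

```csharp
using System;
using System.Net.Http;
using System.Threading.Tasks;
using Polly;

// Retry up to 3 times with no wait, so the check completes immediately.
var retryPolicy = Policy
    .Handle<HttpRequestException>()
    .WaitAndRetryAsync(3, _ => TimeSpan.Zero);

int calls = 0;

// Hypothetical stand-in for an HTTP call: fails twice, then succeeds.
string result = await retryPolicy.ExecuteAsync(async () =>
{
    await Task.Yield();
    calls++;
    if (calls < 3)
        throw new HttpRequestException("simulated transient fault");
    return "ok";
});

Console.WriteLine($"{result} after {calls} calls");
```

Because the delegate is just a lambda, the same policy instance can later wrap a real HttpClient call without any change to the policy definition.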

Why Use Polly with HttpClient?

Network communication fails. In my experience, the failure modes that cause the most damage aren't the obvious ones, such as a hard 500 or a connection refused, but the subtle ones: a service that accepts the connection, starts processing, then drops it after 30 seconds. HttpClient's default timeout is 100 seconds, so without a tighter timeout policy that single slow dependency can tie up connections and, in code that blocks on the call, thread pool threads, and bring down an otherwise healthy service.

By integrating Polly with HttpClient, you get three concrete capabilities:

- Retry failed requests: Automatically retry requests that fail due to transient faults, with backoff intervals that give the downstream service time to recover.

- Implement timeouts: Ensure that requests don't hang indefinitely. I've found 10 seconds is often too generous — knowing your p99 latency for a given endpoint lets you set a tighter bound.

- Handle circuit breaking: Once a downstream service starts failing consistently, stop calling it. Give it time to recover rather than compounding the problem with continued load.

Setting Up Polly with HttpClient

To get started with Polly, install the Polly NuGet package via the Package Manager Console:

    Install-Package Polly

Once installed, you can define your resilience policies. I typically start with a retry policy because it's the simplest win, but I've learned the hard way that without exponential backoff, you just hammer a broken service faster. Here's a retry that backs off properly:

    // Retry up to 3 times on HttpRequestException, waiting 2^attempt seconds before each retry.
    var retryPolicy = Policy
        .Handle<HttpRequestException>()
        .WaitAndRetryAsync(3, retryAttempt => TimeSpan.FromSeconds(Math.Pow(2, retryAttempt)));

    var httpClient = new HttpClient();

    await retryPolicy.ExecuteAsync(async () =>
    {
        var response = await httpClient.GetAsync("https://api.example.com/data");
        // Throws HttpRequestException on a non-success status code, which triggers a retry.
        response.EnsureSuccessStatusCode();
    });

Because retryAttempt starts at 1, Math.Pow(2, retryAttempt) gives you 2, 4, and 8 second delays before the three retries. That breathing room makes a real difference when the downstream service is recovering from a transient overload.
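The backoff arithmetic can be checked on its own, without Polly. The sketch below uses the same formula as the retry policy; the Backoff helper and its 30-second cap are hypothetical additions, included only to show how the schedule could be bounded if the retry count grows.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Same formula as the retry policy: 2^attempt seconds, with attempt starting at 1.
// The cap is an assumption for illustration, not part of the original policy.
TimeSpan Backoff(int retryAttempt, double capSeconds = 30) =>
    TimeSpan.FromSeconds(Math.Min(Math.Pow(2, retryAttempt), capSeconds));

List<TimeSpan> delays = Enumerable.Range(1, 3).Select(a => Backoff(a)).ToList();
double totalSeconds = delays.Sum(d => d.TotalSeconds);

// Prints the per-retry delays and the total time spent waiting between attempts.
Console.WriteLine(string.Join(", ", delays.Select(d => d.TotalSeconds)));
Console.WriteLine($"total wait: {totalSeconds} seconds");
```

With three retries the waits add up to 14 seconds, which is worth keeping in mind when choosing timeouts for callers further up the chain.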

Implementing Advanced Policies

Circuit Breaker

A circuit breaker policy stops an application from performing an operation that's likely to fail. Here's the basic implementation:

    var circuitBreakerPolicy = Policy
        .Handle<HttpRequestException>()
        .CircuitBreakerAsync(2, TimeSpan.FromMinutes(1));

This opens the circuit after 2 consecutive failures and holds it open for a minute before allowing a probe request through. The trade-off here is calibration: too low a threshold and you trip the circuit on noise; too high and you're still flooding a struggling service before it opens.
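To make the open-circuit behavior concrete, the following sketch drives a breaker with the same settings against a delegate that always fails. It assumes the Polly v7-style API; FailingCall is a hypothetical stand-in for a broken downstream service.

```csharp
using System;
using System.Net.Http;
using System.Threading.Tasks;
using Polly;
using Polly.CircuitBreaker;

var breaker = Policy
    .Handle<HttpRequestException>()
    .CircuitBreakerAsync(2, TimeSpan.FromMinutes(1));

int downstreamCalls = 0;

// Hypothetical dependency that fails on every call.
Task FailingCall()
{
    downstreamCalls++;
    return Task.FromException(new HttpRequestException("simulated outage"));
}

for (int i = 1; i <= 3; i++)
{
    try
    {
        await breaker.ExecuteAsync(() => FailingCall());
    }
    catch (HttpRequestException)
    {
        // The call reached the dependency and failed.
        Console.WriteLine($"call {i}: failed, circuit is {breaker.CircuitState}");
    }
    catch (BrokenCircuitException)
    {
        // The breaker rejected the call without invoking the dependency.
        Console.WriteLine($"call {i}: rejected, circuit is {breaker.CircuitState}");
    }
}
```

The third call never reaches the dependency: once the circuit is open, the breaker throws BrokenCircuitException immediately, which is the load-shedding behavior described above.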

Timeout

Timeout policies ensure that operations don't run indefinitely:

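    var timeoutPolicy = Policy
        .TimeoutAsync(TimeSpan.FromSeconds(10)); // 10-second timeout per execution

The non-generic form is used here so the policy can be combined with the retry and circuit breaker policies defined above. Note that Polly's default (optimistic) timeout relies on the executed delegate honoring the CancellationToken that Polly passes to it.

The Trade-Off I Discovered: Policy Ordering Matters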

I initially stacked all three policies together — retry, circuit breaker, and timeout — and hit a subtle issue: the circuit breaker was opening before the retries had a chance to exhaust, which made it look like the circuit breaker was misfiring when the real problem was that my retry count was too high relative to the circuit breaker threshold.

The order in which you compose Polly policies is significant. The outermost policy executes first. What I've found works in practice is: timeout wraps the individual request, retry wraps the timeout, and circuit breaker wraps the retry. That way, a single hung request times out cleanly, the retry decides whether to try again, and the circuit breaker sees the outcome of the full retry sequence — not individual attempt failures.

A policy chain that I've found reliable looks like this:

    var resilientPolicy = Policy.WrapAsync(circuitBreakerPolicy, retryPolicy, timeoutPolicy);

The circuit breaker sits outermost, retries are in the middle, and timeout governs each individual attempt. Getting this order wrong in production led me to a situation where a single failed request triggered three retries, each timing out at 10 seconds, adding at least 30 seconds of latency before the circuit breaker finally saw enough failures to open. Understanding that interaction changed how I design resilience pipelines.
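Put together, the chain might look like the sketch below. It rests on a few assumptions that are not in the original snippets: all three policies are non-generic so that Policy.WrapAsync accepts them, the retry and circuit breaker also handle TimeoutRejectedException so that a timed-out attempt is treated as a failure, and the request receives the CancellationToken from Polly so the optimistic timeout can actually cancel it. GetDataAsync is a hypothetical helper name.

```csharp
using System;
using System.Net.Http;
using System.Threading;
using System.Threading.Tasks;
using Polly;
using Polly.Timeout;

// Innermost: each individual attempt gets its own 10-second budget.
var timeoutPolicy = Policy.TimeoutAsync(TimeSpan.FromSeconds(10));

// Middle: retry transient HTTP failures and timed-out attempts with exponential backoff.
var retryPolicy = Policy
    .Handle<HttpRequestException>()
    .Or<TimeoutRejectedException>()
    .WaitAndRetryAsync(3, attempt => TimeSpan.FromSeconds(Math.Pow(2, attempt)));

// Outermost: counts one failure per exhausted retry sequence.
var circuitBreakerPolicy = Policy
    .Handle<HttpRequestException>()
    .Or<TimeoutRejectedException>()
    .CircuitBreakerAsync(2, TimeSpan.FromMinutes(1));

// The first argument is the outermost policy.
var resilientPolicy = Policy.WrapAsync(circuitBreakerPolicy, retryPolicy, timeoutPolicy);

using var httpClient = new HttpClient();

// Hypothetical helper: runs one GET through the whole pipeline.
async Task<string> GetDataAsync(string url, CancellationToken cancellationToken = default)
{
    return await resilientPolicy.ExecuteAsync(async ct =>
    {
        // Passing ct lets the timeout policy cancel a hung request.
        using var response = await httpClient.GetAsync(url, ct);
        response.EnsureSuccessStatusCode();
        return await response.Content.ReadAsStringAsync();
    }, cancellationToken);
}
```

A caller would then write something like `var body = await GetDataAsync("https://api.example.com/data");` and handle BrokenCircuitException for the case where the circuit is already open.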

I've also noticed that testing these policies in isolation gives you false confidence. What you want to test is the composed behavior — how the circuit breaker responds when retries are exhausted, or whether a timeout during the second retry attempt counts toward the circuit breaker threshold. Those edge cases only surface under load, which is why I pair Polly configuration with realistic load tests before shipping.
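One way to start checking composed behavior before a load test is a small in-memory experiment. The sketch below wraps a retry inside a breaker and counts how many attempts reach a failing delegate. It assumes the Polly v7-style API, uses zero-length retry delays purely to keep the run fast, and leaves the timeout policy out for brevity.

```csharp
using System;
using System.Net.Http;
using System.Threading.Tasks;
using Polly;
using Polly.CircuitBreaker;

// Zero-delay retries keep the check fast; production code would back off.
var retry = Policy
    .Handle<HttpRequestException>()
    .WaitAndRetryAsync(3, _ => TimeSpan.Zero);

var breaker = Policy
    .Handle<HttpRequestException>()
    .CircuitBreakerAsync(2, TimeSpan.FromMinutes(1));

// Breaker outermost, retry inside: the breaker only sees the final outcome of each retry sequence.
var pipeline = Policy.WrapAsync(breaker, retry);

int attempts = 0;

// Runs one logical request through the pipeline against a delegate that always fails.
async Task RunOnceAsync()
{
    try
    {
        await pipeline.ExecuteAsync(() =>
        {
            attempts++;
            return Task.FromException(new HttpRequestException("simulated outage"));
        });
    }
    catch (HttpRequestException) { /* retries exhausted */ }
    catch (BrokenCircuitException) { /* rejected by the open circuit */ }
}

await RunOnceAsync(); // 4 attempts, breaker has recorded 1 failure
await RunOnceAsync(); // 4 more attempts, breaker records its 2nd failure and opens
await RunOnceAsync(); // rejected immediately, no new attempts

Console.WriteLine($"attempts: {attempts}, circuit: {breaker.CircuitState}");
```

In this arrangement it takes eight downstream attempts to open a breaker with a threshold of 2, which is the kind of interaction that is easy to miss when each policy is tested alone.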

Conclusion

Using Polly with HttpClient meaningfully improves the resilience of .NET applications, but the real value comes from understanding how the policies interact rather than just applying each one individually. In my experience, the retry policy alone handles the majority of transient failure cases, but it's the circuit breaker that prevents a bad situation from becoming a catastrophic one. The timeout policy is the safety net that keeps a slow downstream dependency from consuming your thread pool. Together, ordered correctly, they give you a resilience pipeline you can reason about — and that's what matters when something goes wrong at 2am.
