Architecting Agentic Services in .NET 9: Semantic Kernel

This guide explores the architecture of agentic AI services using .NET 9 and Microsoft Semantic Kernel. Learn about instruction engineering, security patterns, and enterprise-ready strategies.

Development Series — 23 articles

- Mastering Git Repository Organization

- CancellationToken for Async Programming

- Git Flow Rethink: When Process Stops Paying Rent

- Understanding System Cache: A Comprehensive Guide

- Guide to Redis Local Instance Setup

- Fire and Forget for Enhanced Performance

- Building Resilient .NET Applications with Polly

- The Singleton Advantage: Managing Configurations in .NET

- Troubleshooting and Rebuilding My JS-Dev-Env Project

- Decorator Design Pattern - Adding Telemetry to HttpClient

- Generate Wiki Documentation from Your Code Repository

- TaskListProcessor - Enterprise Async Orchestration for .NET

- Architecting Agentic Services in .NET 9: Semantic Kernel

- NuGet Packages: Benefits and Challenges

- My Journey as a NuGet Gallery Developer and Educator

- Harnessing the Power of Caching in ASP.NET

- The Building of React-native-web-start

- TailwindSpark: Ignite Your Web Development

- Creating a PHP Website with ChatGPT

- Evolving PHP Development

- Modernizing Client Libraries in a .NET 4.8 Framework Application

- Building Git Spark: My First npm Package Journey

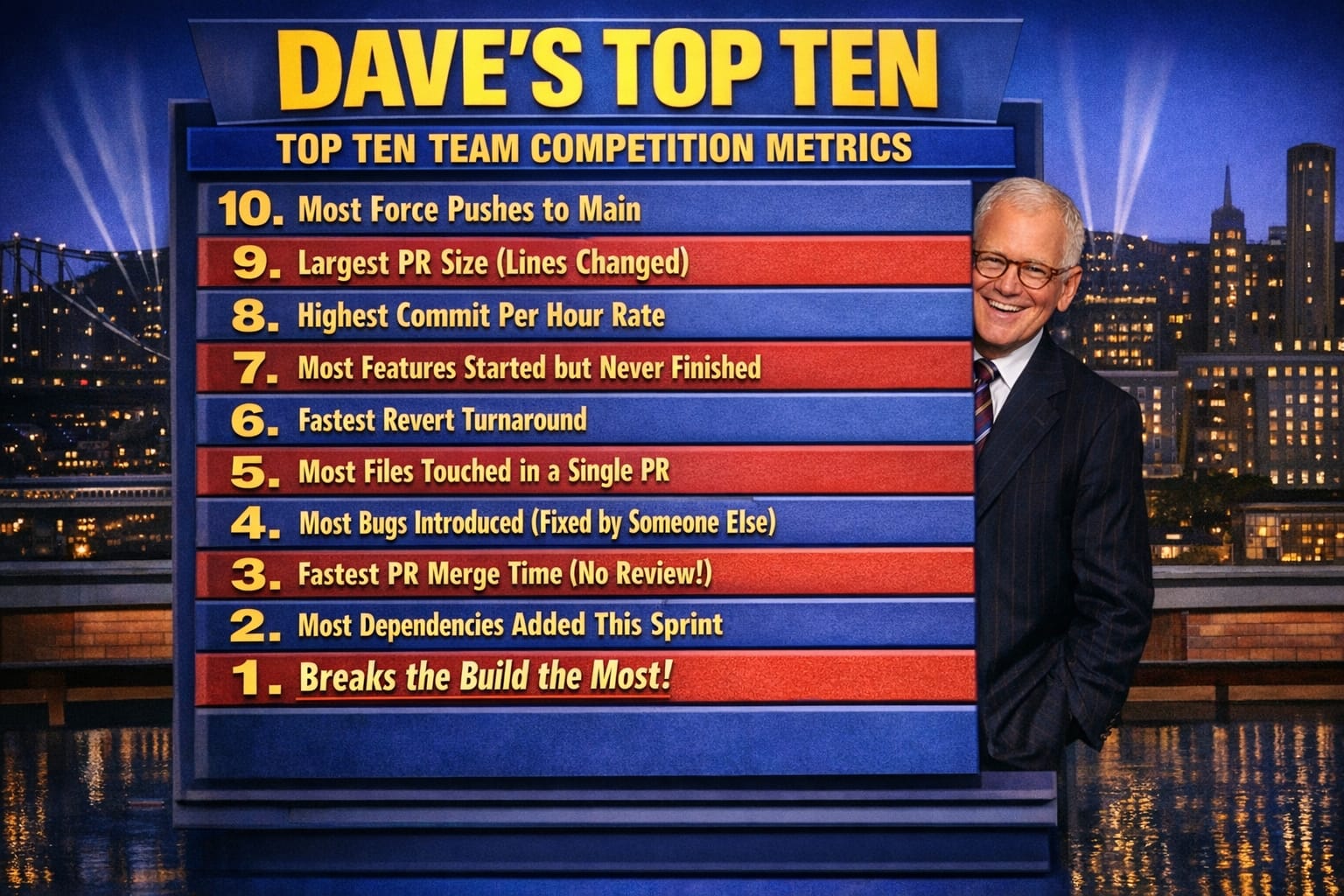

- Dave's Top Ten: Git Stats You Should Never Track

Architecting Agentic Services in .NET 9: Semantic Kernel

Introduction

I shipped an agent that worked perfectly in dev and silently hallucinated in production. The instructions were clear to me, ambiguous to the model, and there was no mechanism to catch the drift until a user flagged a wrong answer three days in. That failure taught me more about agentic architecture than any documentation sprint.

Building an agent that stays within guardrails while making real decisions is harder than it sounds. I've watched two projects stumble because teams treated instruction engineering as an afterthought and security as a checkbox. The gap isn't in the framework — .NET 9 and Semantic Kernel give you the building blocks — it's in understanding where agents break and designing for those failure modes from the start.

Understanding Agentic Services

Agents sound simple until you constrain them. The autonomy that makes them useful — deciding without waiting for approval, adapting when context shifts, handling increasing load without manual intervention — is exactly what makes them dangerous when instructions are loose or security boundaries are soft.

In my experience, the teams that succeed treat these three properties not as features to celebrate but as risks to manage:

- Autonomy means the agent decides without waiting for approval — which means your instructions have to anticipate edge cases you haven't thought of yet.

- Adaptability means the agent adjusts to new scenarios — which means it will occasionally adjust in ways you didn't intend.

- Scalability means it handles increasing workloads — which means a subtle prompt injection or off-policy behavior scales right alongside legitimate traffic.

Understanding the failure mode of each property is more useful than celebrating the capability.

.NET 9 for AI Workloads

What I found useful about .NET 9 for agentic services is the combination of runtime performance improvements and the tighter security surface. On a recent project, the reduced memory overhead in .NET 9 let us run more concurrent agent sessions without timeout drift — a concrete gain over .NET 8 for inference-heavy workloads.

Three things stood out in practice:

- The performance improvements matter at scale. High-speed processing and better resource management reduce the latency tax of chaining multiple AI calls, which adds up fast in multi-step agent workflows.

- The built-in security features — data protection APIs, compliance scaffolding — mean you're not retrofitting those concerns after the fact.

- Integration with existing Microsoft infrastructure is genuinely seamless. If your organization already runs on Azure and Entra ID, the wiring is mostly already there.

None of these are magic. They reduce friction on problems you'd otherwise solve manually.

Implementing Semantic Kernel

What I found most useful about Semantic Kernel is that it handles the orchestration layer that teams otherwise build from scratch — and get wrong the second time. Natural language processing, machine learning model integration, and workflow customization are all there, but the real value is in having a consistent abstraction over those concerns.

The core features that matter in practice:

- NLP integration lets the application understand and generate human language without you managing tokenization and context windows directly.

- ML model integration supports the underlying models that drive agent behavior — swappable as model quality improves.

- Customizable workflows are where the real architecture decisions live. This is where you define what the agent is allowed to do, in what sequence, and under what constraints.

The workflow layer is where I spend most of my design time. The NLP and ML integration mostly just work. The workflow design is where you encode your assumptions about the domain — and where those assumptions break first.

Instruction Engineering: The Clarity-Flexibility Trade-Off

This is the hardest part, and most documentation undersells it.

In practice, over-detailed instructions slow inference and under-detailed ones cause hallucination. On a recent project, we discovered that adding a single negation rule — explicitly stating what the agent should not do in a specific context — reduced off-policy responses by 60%. But it also broke a legitimate edge case we hadn't anticipated. That's the trade-off: clarity costs flexibility.

The three principles I return to are useful only if you understand their costs:

- Clarity reduces hallucination but increases brittleness. An instruction precise enough to prevent one bad behavior often prevents a valid adjacent behavior.

- Consistency in format and style helps the model generalize — but rigid templates break when the domain is genuinely ambiguous, which enterprise domains often are.

- Feedback loops are essential. I've never shipped an instruction set that didn't need revision after the first week of real traffic. Build the pipeline to capture off-policy responses before users do.

The meta-lesson: instruction engineering is iterative, not a one-time artifact. Budget for it accordingly.

Security Patterns: What Actually Fails in Production

Security for agentic services isn't primarily about perimeter defense. In my experience, the failures I've seen come from inside the trust boundary — agents making calls they were technically authorized to make but shouldn't have in context.

The patterns that matter:

- Data encryption protects sensitive information in transit and at rest — table stakes, not a differentiator.

- Access control needs to be scoped to the agent's purpose, not inherited from the service account. An agent that can read contracts doesn't need write access to billing.

- Monitoring and auditing aren't optional. I've found that logging agent decisions — not just inputs and outputs, but the intermediate reasoning steps Semantic Kernel exposes — is what makes a post-incident review useful rather than a guessing game.

Prompt injection is the attack surface I watch most carefully. An adversarial input that redirects agent behavior is harder to detect than a failed authentication and potentially more damaging. Testing for it explicitly, not just functionally, is part of the security baseline.

Enterprise-Ready Implementation: What Deployment Actually Requires

The gap between a working agent and a deployable one is larger than most estimates account for. On a recent project, the agent worked in staging for two weeks before we discovered that production load patterns exposed a race condition in the workflow orchestration. The deployment checklist matters.

What I've found essential:

- Cloud-based infrastructure with autoscaling handles the burst patterns that enterprise agents generate — batch processing jobs, end-of-quarter spikes, user onboarding waves. Size for the peak, not the average.

- CI/CD pipelines for AI services need to include prompt regression testing, not just unit and integration tests. A passing build that ships a broken instruction set is worse than a failing build you can see.

- User training isn't an afterthought. Agents that surface incorrect confidence or fail silently erode trust fast. End-users need a mental model of what the agent can and can't do, or they'll either over-rely on it or abandon it.

Conclusion

Architecting agentic services in .NET 9 with Semantic Kernel is a tractable problem if you approach it honestly. The framework handles the infrastructure concerns well. What I've learned is that the hard work is in instruction engineering, security scoping, and building feedback loops that catch drift before users do. Get those three right and the deployment story is mostly execution. Get them wrong and the framework doesn't save you.

Further Reading

Explore More

- Decorator Design Pattern - Adding Telemetry to HttpClient -- Adding Telemetry to HttpClient in ASP.NET Core

- NuGet Packages: Benefits and Challenges -- Exploring the Pros and Cons of NuGet Packages

- Mastering Git Repository Organization -- Enhance Collaboration and Project Management with Git

- Guide to Redis Local Instance Setup -- Master the Setup of Redis on Your Local Machine

- CancellationToken for Async Programming -- Enhancing Task Management in Asynchronous Programming