AI and Critical Thinking in Software Development

The most useful thing about AI tooling in software development is also the thing most worth watching carefully: it makes the work feel easier. But easier isn't always the same as better, and the cognitive habits that produce good judgment don't stay sharp on their own. This article explores the paradox at the center of AI-augmented development and what intentional augmentation actually looks like in practice.

Leadership Philosophy Series — 6 articles

- The Power of Lifelong Learning

- AI and Critical Thinking in Software Development

- Sidetracked by Sizzle: Staying Focused on True Value

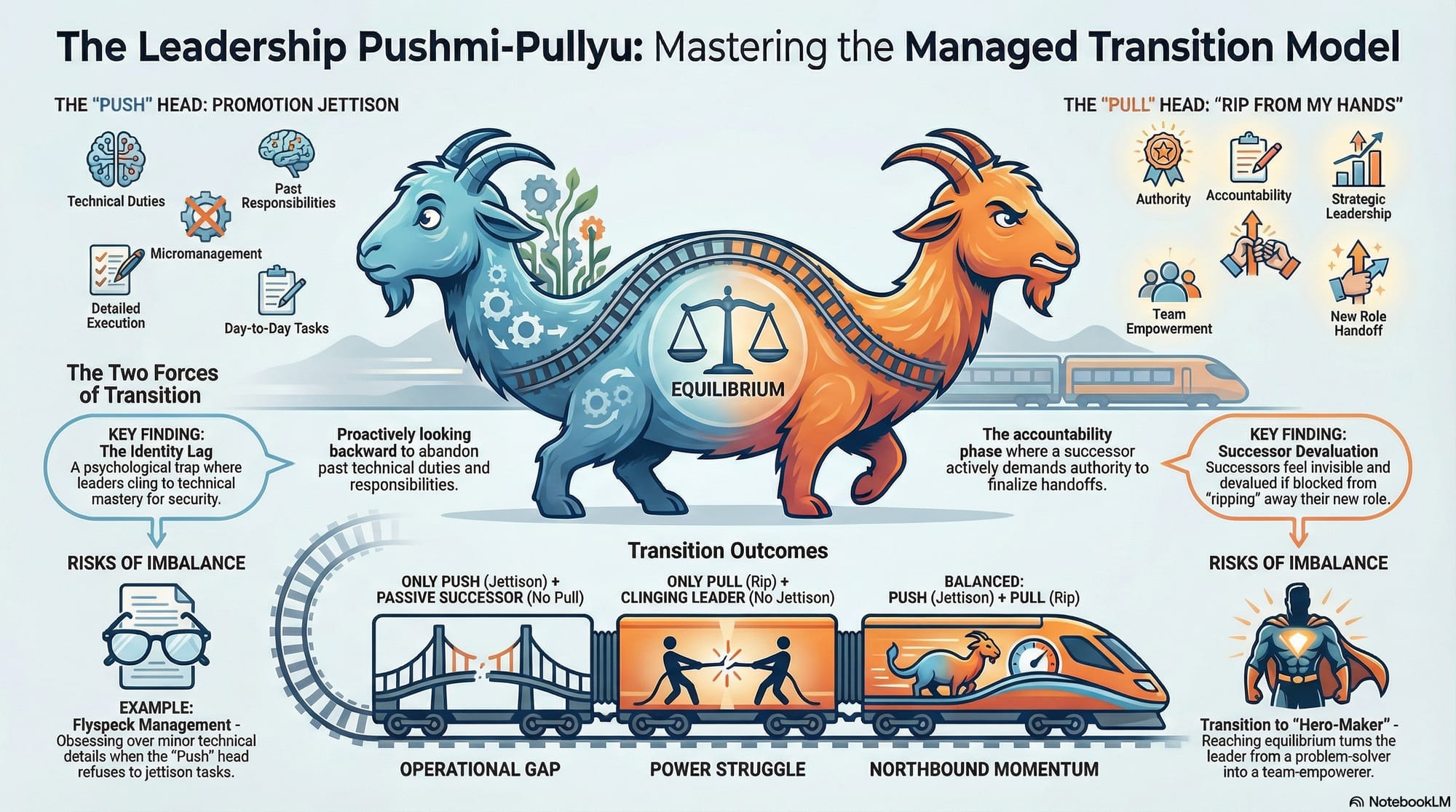

- The Managed Transition Model: Leadership Promotion as Power Exchange

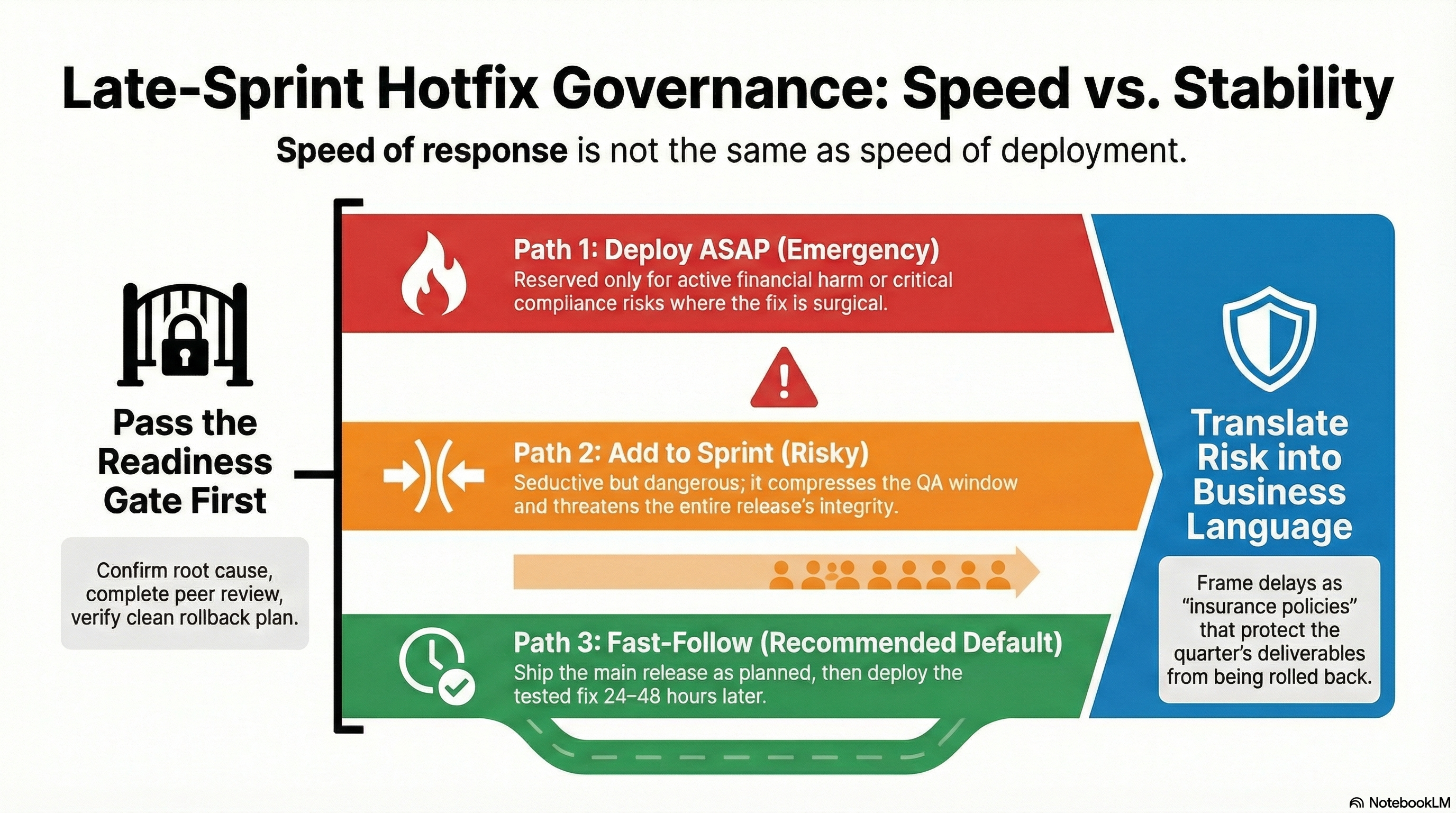

- When the Pressure is On - Late Sprint Hotfix Governance

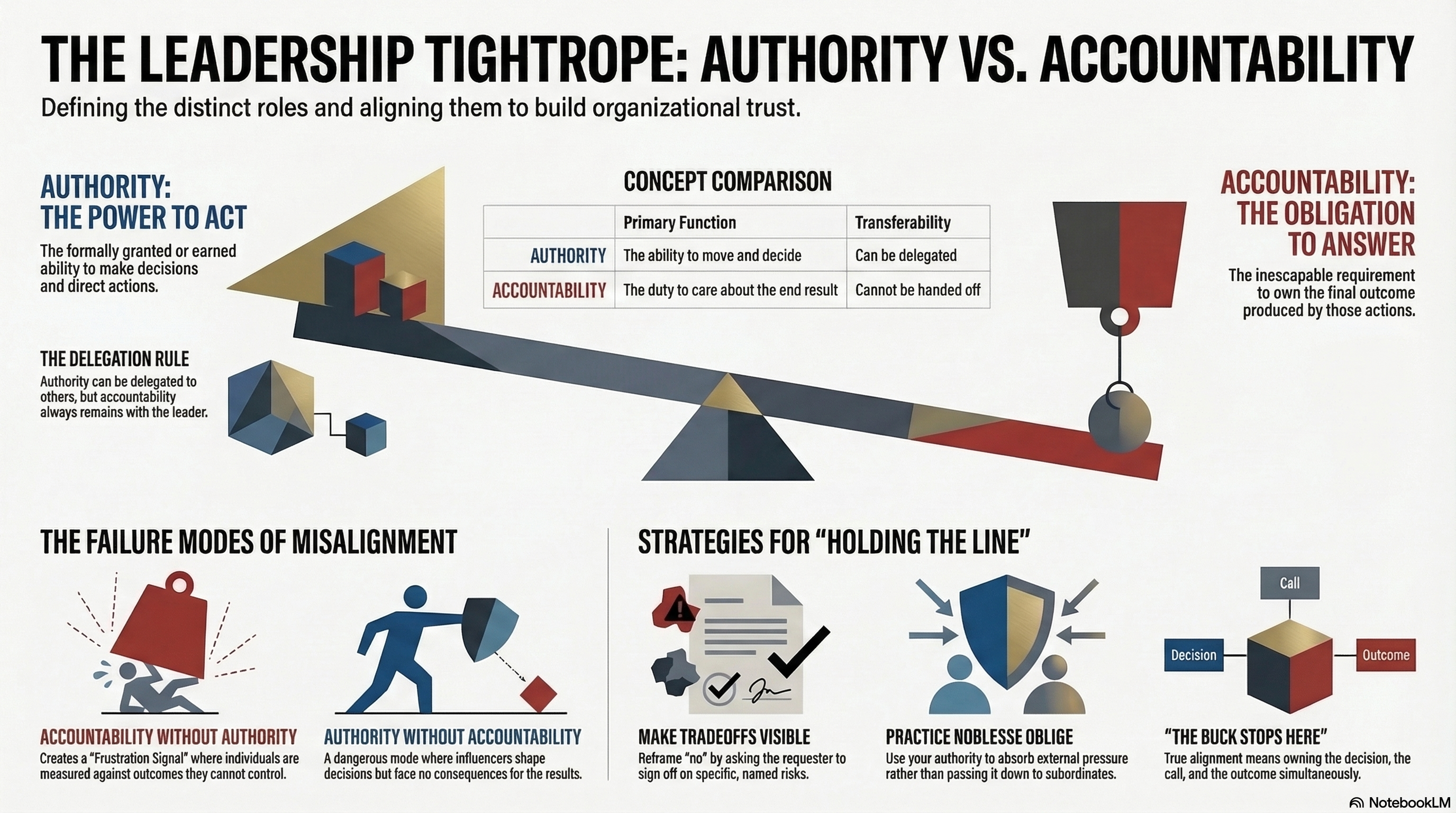

- Accountability and Authority: Walking the Tightrope

Deep Dive: AI Impact On Critical Thinking

The Paradox at the Center

There is a particular kind of help that makes you better while you're using it and subtly worse when it's taken away. AI tooling in software development is starting to look like it might be that kind of help.

The case for AI assistance is real and substantial. Code completion, automated testing suggestions, documentation generation, pattern recognition across large codebases — these capabilities reduce the friction on tasks that consumed significant developer time without requiring deep judgment. When that friction goes away, there's more cognitive space for the problems that actually need it. That's genuinely valuable.

But there's a different effect worth examining alongside the efficiency gains. The cognitive habits that produce good judgment — following a problem from symptom to root cause, holding uncertainty while exploring multiple hypotheses, recognizing when a solution is technically correct but contextually wrong — don't stay sharp automatically. They stay sharp through practice. And AI tooling, used in certain ways, substitutes for exactly the kind of practice that builds those habits.

The paradox is that the same tool that frees you to think more deeply about complex problems can, in its easier-to-use configurations, quietly encourage you to stop thinking as carefully about straightforward ones.

What Quiet Outsourcing Looks Like

The skill-erosion concern is sometimes framed as a dramatic scenario — developers who can no longer write code without AI assistance. That version of the story probably overstates things. But the subtler version is worth taking seriously.

Consider debugging. A developer working through a problem manually has to build a mental model of what the system is doing — trace the execution path, form and test hypotheses, narrow the search space through reasoning. That process is frustrating when it's slow and satisfying when it works. It also builds something durable: a clearer understanding of how the system behaves, and a sharper instinct for where to look next time.

A developer who immediately reaches for an AI assistant and follows its first suggestion to resolution gets to the answer faster. But the mental model doesn't form in the same way. Over time, if the pattern holds, the instinct for where to look can fade — because it hasn't been exercised.

This isn't an argument for making debugging artificially hard. It's an observation that the time AI assistance saves is not evenly distributed: it tends to come out of the routine parts of the work, which are the parts that build the judgment used on the harder ones.

The Bias Problem Is Harder Than It Looks

There's a related concern that often gets listed alongside "dependency" and "skill erosion" in discussions of AI risk — that AI systems can reflect the biases present in their training data. This gets acknowledged and then often left there, as if noting the risk is sufficient.

It's worth sitting with it longer. When an AI tool suggests an architectural approach, or generates documentation, or proposes a test strategy, it's drawing on patterns that represent a particular slice of practice. That slice may not include the specific constraints, organizational context, or failure modes that matter most for the problem at hand. A developer who evaluates the suggestion critically — who asks "does this actually fit our situation?" — can catch that mismatch. A developer who treats the output as a starting point to be refined is in a much better position than one who treats it as an answer to be implemented.

The skill required to evaluate AI output well is, in many ways, the same skill that AI assistance can gradually erode if used without intentionality. That is the sharper version of the paradox.

What Intentional Augmentation Looks Like

The frame that seems most useful isn't "how do you protect yourself from AI?" — that frames the tool as adversarial, which doesn't serve anyone. A better frame is: what does it look like to use AI in a way that keeps your judgment sharp rather than one that substitutes for it?

In practice, this tends to look like maintaining active engagement with the reasoning behind what AI produces. Not just accepting a suggested implementation, but understanding why it's structured the way it is — and whether that structure actually fits the problem. Using AI output as a first draft that you critique rather than a solution you apply.

It also looks like preserving, deliberately, some space for unassisted problem-solving. Not as a test of willpower, but as maintenance of a skill. The way a craftsperson might occasionally work with hand tools even when power tools are available — not because it's faster, but because the tactile feedback of the slower process maintains an understanding that the faster process doesn't provide.

And it looks like treating the evaluation of AI tools as a first-class professional responsibility. Not just "does this tool help me ship faster?" but "what am I not doing anymore because this tool does it for me, and should I care about that?"

These aren't easy habits to maintain, especially in environments where velocity is measured and cognitive overhead is visible in the wrong ways. But the developers and leaders who are thinking about this are the ones most likely to use AI in ways that compound their capabilities rather than gradually hollow them out.

Further Reading

- The Power of Lifelong Learning — skill maintenance as a deliberate practice, not a passive outcome

- Accountability and Authority: Walking the Tightrope — judgment is the one thing that can't be delegated, to AI or anyone else

- From Features to Outcomes: Keeping Your Eye on the Prize — AI efficiency means nothing if pointed at the wrong goal