Bring Your Own AI: DevSpark Unlocks Multi-Agent Collaboration

DevSpark's latest release rebuilds the framework's core to be completely AI-agnostic. The new Centralized Agent Registry — a single agents-registry.json file — strips every hardcoded 'if Copilot do this, if Claude do that' decision out of the framework scripts and replaces it with dynamic configuration. Adding support for tomorrow's newest AI tool is now a one-line registry entry. More practically: the same Markdown spec that one developer refines with Copilot in VS Code can be picked up and implemented by a colleague using Claude Code in the terminal, then reviewed by a tech lead in Cursor — without the framework skipping a beat.

DevSpark Series — 26 articles

- DevSpark: Constitution-Driven AI for Software Development

- Getting Started with DevSpark: Requirements Quality Matters

- DevSpark: Constitution-Based Pull Request Reviews

- Why I Built DevSpark

- Taking DevSpark to the Next Level

- From Oracle CASE to Spec-Driven AI Development

- Fork Management: Automating Upstream Integration

- DevSpark: The Evolution of AI-Assisted Software Development

- DevSpark: Months Later, Lessons Learned

- DevSpark in Practice: A NuGet Package Case Study

- DevSpark: From Fork to Framework — What the Commits Reveal

- DevSpark v0.1.0: Agent-Agnostic, Multi-User, and Built for Teams

- DevSpark Monorepo Support: Governing Multiple Apps in One Repository

- The DevSpark Tiered Prompt Model: Resolving Context at Scale

- A Governed Contribution Model for DevSpark Prompts

- Prompt Metadata: Enforcing the DevSpark Constitution

- Bring Your Own AI: DevSpark Unlocks Multi-Agent Collaboration

- Workflows as First-Class Artifacts: Defining Operations for AI

- Observability in AI Workflows: Exposing the Black Box

- Autonomy Guardrails: Bounding Agent Action Safely

- Dogfooding DevSpark: Building the Plane While Flying It

- Closing the Loop: Automating Feedback with Suggest-Improvement

- Designing the DevSpark CLI UX: Commands vs Prompts

- The Alias Layer: Masking Complexity in Agent Invocations

- DevSpark Blogging Workflow: How I Built Better Articles

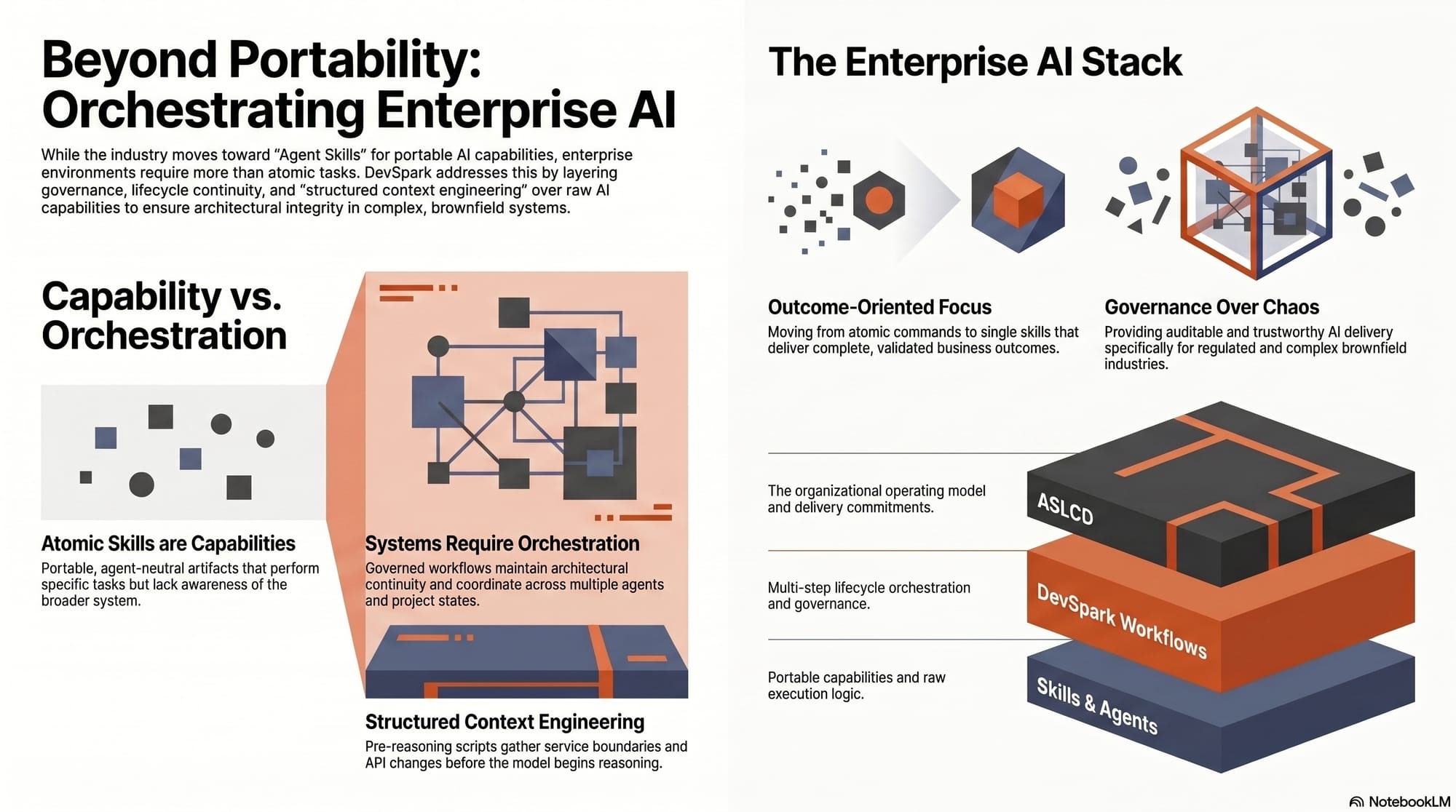

- DevSpark and Agent Skills: Beyond Portable AI Capabilities

Deep Dive: DevSpark 1.5 — Bring Your Own AI

The AI Fragmentation Problem

Every engineering team I talk to has the same story, with minor variations.

They adopted AI coding tools fast — because the productivity gains were real and the pressure to adopt was real. But they adopted in different directions. One developer landed on GitHub Copilot because it lives inside VS Code and they've used VS Code for a decade. Another pulled Claude Code because they live in the terminal and the reasoning quality is exceptional for complex architectural problems. A third evaluated Cursor during a trial period and never left, because the codebase-wide context it maintains changed how they navigate unfamiliar code.

Three capable developers. Three AI tools. One shared codebase.

The conflict usually surfaces when someone tries to build a team workflow. "Let's all use /devspark.specify to standardize our requirements." Great idea in principle. Painful in practice. Because before this release, running /devspark.specify from Claude Code meant Claude read one set of agent instructions. Running it from Copilot meant Copilot read a different set. The output looked similar but wasn't identical. And the scripts that wired everything together had conditionals scattered across them: if Copilot, format the shim this way; if Claude, write the file to that directory; if a new agent shows up that nobody anticipated, update every relevant script and hope you didn't miss one.

The framework was "multi-agent capable" in the same way that a restaurant is "vegetarian friendly" when it can remove the chicken from an existing dish. Technically true. Not really the point.

This release changes that.

One Principle, One File

The core architecture of DevSpark's ASLCD framework has been rebuilt around a single design principle: no AI agent should be privileged in the framework's own code.

The implementation is almost comically simple. A single JSON file — agents-registry.json — describes every agent the framework knows about: where its shim files live, what format they use, and how to name its commands. When a DevSpark script needs to generate or validate anything agent-specific, it reads the registry instead of checking which tool you happen to be running.

{

"id": "copilot",

"displayName": "GitHub Copilot",

"shimDir": ".github/agents"

}Three lines per agent. No code changes to add the next one.

What this replaced was the kind of creeping complexity that's easy to justify in the moment and painful to maintain at scale — hardcoded paths, branching logic per agent, format-specific templates embedded in scripts. Every new agent meant auditing every script. For a framework that takes AI tooling evolution seriously, that maintenance burden was self-defeating. The refactor moved all of that knowledge into configuration, and the framework got simpler.

The Handover Nobody Noticed

The best way to understand what this enables is to follow a single ticket through a team where three developers use three different AI tools — and the framework never notices the difference.

A senior developer working in VS Code with GitHub Copilot runs /devspark.specify. Copilot reads the shim from .github/agents/, which points to the canonical prompt in .documentation/commands/. They work through the clarification loops, refine the requirements, capture failure modes and acceptance criteria. When they're done, SPEC.md lives in the repository — plain Markdown, version-controlled, readable by anything.

They commit it and move on to their next ticket.

A different developer picks it up. They work in the terminal with Claude Code. They run /devspark.implement. Claude reads its shim from .claude/commands/, which points to the same canonical prompt. It reads the same spec. It reads the same constitution. Code gets written. Tests pass.

The tech lead opens Cursor for the PR review. They run /devspark.pr-review. Cursor reads its shim, the canonical prompt injects the constitution, and the review checks the implementation against the documented, version-controlled standards the whole team agreed to.

Three tools. One spec. One constitution. Nobody had to coordinate which tool to use — because the governance made that question irrelevant.

The reason this works isn't magic. It's a deliberate architectural choice: the framework's source of truth is plain Markdown, and plain Markdown is readable by every AI tool on every platform. The registry knows where each agent's shims live. The shims point to canonical prompts. The prompts are the same for everyone. Everything between the developer and the content is a thin, replaceable adapter.

The Conversation That Changed

Most engineering leaders I talk to are running experiments — a team here using one AI assistant, a team there using another, watching productivity metrics, trying to figure out which bet to make. That's a reasonable strategy in an uncertain market. But it creates a hidden cost: every team that builds workflow tooling around a specific AI agent is building something that breaks when that agent changes, when the team changes, or when management decides to standardize on something different.

The Centralized Agent Registry changes the shape of that conversation. Instead of "which AI tool should we standardize on?" the question becomes "what governance should we standardize on?" — and then each developer brings the tool they're most effective with. The specs your team wrote last year still work under a new agent. The constitution your tech leads ratified still governs. Only the shim format changes, and the registry handles that.

There's something deeper here than tool flexibility, though. Developers are most productive with tools they trust. Forcing a team to standardize on a single AI assistant because the governance framework only supports that one is an organizational own-goal — you standardize the tool while fragmenting the productivity. "Bring Your Own AI" shouldn't be a compliance workaround. It should be a first-class capability.

Selling Governance to the Boardroom

I'll be direct about something. The shift from "which tool" to "what governance" sounds natural on paper and lands like a grenade in a leadership meeting. A CISO doesn't care about an elegant JSON registry if they think corporate IP is leaking to four different AI models. Procurement wants a volume discount on a single vendor, not a spreadsheet of mixed subscriptions. And every engineering VP I've spoken with has an instinctive allergic reaction to the phrase "let developers use whatever they want."

These objections aren't irrational. They're the immune system of an organization doing its job. The way to earn buy-in isn't to dismiss those concerns — it's to reframe what "standardization" means. You're not standardizing the tool. You're standardizing the governance: the constitution, the spec format, the review process, the acceptance criteria. The tool becomes an implementation detail — the same way your team doesn't mandate that every developer use the same text editor for writing code, because the CI pipeline enforces the standards that matter.

The argument that lands in budget meetings is data, not philosophy. Track spec-to-PR cycle time — the elapsed time between a finalized spec in your issue tracker and the corresponding pull request opening in GitHub. You don't need unified AI telemetry to measure this; you timestamp the spec approval in JIRA or GitHub Issues, timestamp the PR, and cross-reference a required field in your PR template where the developer declares which AI tool they used. Over a few sprints, that data tells a clear story. If multi-agent governance maintains or improves cycle time without increasing defect rates, the case makes itself. If a particular agent consistently underperforms, you have evidence to guide the team — not a mandate, but data.

For security concerns specifically: the constitution is the right place to codify data-handling policies. "No proprietary source code may be sent to AI models without an approved enterprise license" is a governance rule, not a tool restriction. It applies equally whether the developer is using Copilot, Claude Code, or something that doesn't exist yet. And because the constitution is version-controlled, auditable, and enforced through the review process, it gives your security team something concrete to point at during compliance reviews.

What I'm Still Watching

I don't want to oversell this. The registry solves the agent-coupling problem cleanly, but multi-agent collaboration raises questions that stretch beyond configuration.

When two developers use different AI tools against the same spec, the outputs won't be identical. The reasoning styles are different. The code patterns are different. The places where each tool asks for clarification versus makes assumptions are different. The spec provides a shared contract, and the constitution establishes shared standards, but there's still a layer of interpretation that varies by tool. So far, the PR review process has caught the cases where that variation matters — but I'm watching closely to see whether that holds as teams scale.

Relying solely on PR review to catch architectural divergence is, frankly, the most expensive possible place to catch it. You're putting the entire burden of consistency enforcement on the tech lead reviewing the pull request — sifting through a 50-file diff to flag that the AI chose a service locator pattern when the team uses constructor injection. That turns your senior engineers into human linters, which defeats the whole point of AI acceleration.

The better approach is to pull alignment as far forward as possible. The constitution already defines project standards — but those standards need teeth. Instead of general guidance like "follow SOLID principles," the constitution should include explicit, unambiguous constraints: "All dependency injection must be constructor-based. Do not use service locators." "All API responses must use the shared ApiResult<T> wrapper. Do not return raw entities." These bright-line rules strip away the AI's freedom to choose a conflicting pattern before any code is written.

Beyond static rules, there's a workflow pattern worth watching: a clarification loop that forces the AI agent to output its structural assumptions for human approval before it begins generating implementation code. In practice, this looks like the developer reviewing a 10-line architectural summary — "I'll create three services, use the repository pattern, inject via constructor" — and hitting approve before the agent writes to any source files. Ten seconds of local friction saves two hours of arguments in a PR review. A pre-commit hook or wrapper script can enforce this mechanically: if the agent tries to write implementation files before a blueprint approval token is logged, the script blocks execution. The overhead is minimal, and the alignment guarantee is significant.

I'm still refining how prescriptive the framework itself should be about these guardrails versus leaving them to individual teams. But the direction is clear: proactive alignment beats reactive review.

There's also the question of what happens when AI tools start to diverge in capability rather than just format. Today, every major agent can read Markdown and follow structured prompts. If that assumption breaks — if a future tool requires a fundamentally different interaction model — the registry's three-line-per-agent elegance might need to evolve. But "might need to evolve" isn't a weakness when the architecture has a plan for it.

The registry schema is deliberately minimal today, but it's designed with an extension point in mind. Consider what a fourth field per agent entry could look like:

{

"id": "future-agent",

"displayName": "Future Agent",

"shimDir": ".future/agents",

"preprocessor": "scripts/compile-spec-to-future-format.sh"

}A preprocessor script path would intercept the plain Markdown spec and compile it into whatever proprietary, binary, or multimodal format a future tool demands — without changing the framework's core, the spec format, or any other agent's workflow. The Markdown remains the canonical source of truth. The preprocessor is a thin adapter, the same pattern that has kept software systems extensible for decades.

The natural objection is latency: if a preprocessor has to parse and transform every spec before the AI can read it, the development loop slows down. The mitigation is straightforward — cache the intermediate representation. If the Markdown hasn't changed since the last transformation, the preprocessor returns the cached output. You aren't building a perfect compiler; you're proving that the architecture has a designated socket for format evolution. That socket exists today, even if nothing is plugged into it yet.

The current three-line simplicity isn't accidental, and it isn't fragile. It reflects the current reality where every serious AI tool reads plain text. When that reality shifts — and it will — the framework won't need to be rebuilt. It will need a new adapter. That's a meaningful difference.

The AI development tool market is moving fast. A framework that hardcodes its agent assumptions will need constant maintenance just to stay current. The registry inverts that problem: the framework stays stable, and each new agent requires only configuration, not code. That feels right. But "feels right" is the beginning of a hypothesis, not the end of one. The real test is what happens when teams I've never talked to start using this in workflows I never anticipated.

If you want to see the registry schema, the full command reference, and the installation details, the DevSpark repository and documentation site have everything you need to get started. The specs are plain Markdown. The constitution is version-controlled. The registry is three lines per agent. And the governance doesn't care which tool reads it.

Explore More

- DevSpark v0.1.0: Agent-Agnostic, Multi-User, and Built for Teams -- How canonical prompts, thin shims, and per-user personalization let team

- DevSpark: Constitution-Driven AI for Software Development -- DevSpark aligns AI coding agents with project architecture and governanc

- DevSpark: The Evolution of AI-Assisted Software Development -- From requirements discipline to continuous governance — a complete frame

- Why I Built DevSpark -- Building the tool I needed to survive the reality of brownfield developm

- Getting Started with DevSpark: Requirements Quality Matters -- Enforcing requirements quality before code generation